Chapter 13: Understanding and Improving the Usefulness of Conceptual Systems: An IPA-Based Perspective on Levels of Structure and Emergence

Wallis, S. E. (2020). Understanding and improving the usefulness of conceptual systems: An Integrative Propositional Analysis-based perspective on levels of structure and emergence. Systems Research and Behavioral Science, in press. https://onlinelibrary.wiley.com/doi/abs/10.1002/sres.2680

Appreciations

My thanks to Michal Lissack and the ISCE conference in Paris in 2013 for deep conversations that yielded the insights behind this paper. Thanks also to Thomas Marzolf, Kent Palmer, and Len Troncale, whose conversations and insights proved inspiring and illuminating. Finally, my thanks to two anonymous reviewers whose comments, both kind and challenging, led to an improved paper.

Abstract

Terms like “levels” and “nested” are used to describe relationships between components of conceptual systems (theories, models, etc.). However, those terms have not been fully explored. This paper investigates levels to better understand how theories are structured and, therefore, how we may develop more useful theories and models that support more effective practice. We find a horizontal dimension (represented by causal connections between concepts at one ontological level) and a vertical dimension (represented by connections of emergence between concepts at differing ontological levels). This view of emergence offers a new way to structurally distinguish between the conceptual components of a theory, supporting a new approach to building theories that better reflects our systemic world. A third, perspectival approach may be applied to aid in understanding both dimensions. A typology is proposed, as are conventions for diagramming theories and new criteria for improving the structure of theories.

Introduction

It is generally accepted that organizations may be seen as having different levels (often presented hierarchically). For example, Lindberg (2004) describes levels in human/organizational systems as individual, interpersonal, organizational, and community. While those may easily be interpreted as existing on different levels (in some sense), they might also be interpreted as differing only in the number of people and interactions involved, and people and interactions are common across all of those seeming levels. So, despite (and/or because of) interesting arguments and conversations around borders and boundaries between those levels (cf. Midgley & Rajagopalan, 2019), those boundaries seem quite permeable because people and interactions routinely cross them. Such complexity enables diverse views without necessarily improving understanding; instead, it calls for detailed descriptions of varied views, along with the confusion and fragmentation that such detail may bring. Here, we seek instead to understand levels in a way that enables the greatest variety of useful explanatory models while requiring the fewest “rules.”

Notions such as levels are also commonly found in the systems sciences. First, systems are said to have different levels (Ashmos, Huonker, & McDaniel, 1998; Pascale, 1999; Tower, 2002). Differences in levels also seem to emerge from changes or interactions in (and between) systems (Axelrod & Cohen, 2000; Harder, Robertson, & Woodward, 2004; Moss, 2001; Pascale, 1999). In turn, having different levels causes interaction and change (Hunt & Ropo, 2003; Stacey, 1996).

But what constitutes a level? We may casually talk about levels in terms of “how many” people are involved. For example, a “micro” level might include a couple of dozen people while a “macro” level might include billions (Alexander, Giesen, Munch, Anderson, & Smelser, 1987). Are those “levels,” or are they simply numbers on a scale? That fuzziness of understanding (or, perhaps, false clarity) applies also to conceptual systems (theories and models), and it may lead us to choose poor models and so make poor decisions. How might we gain more clarity about what (or how) one level is understood as emerging from another?

Emergence, as a term, was coined by Lewes in 1875 based on the Latin emergo, to arise or to come forth (Vintiadis, 2013). Clayton (2006) provides a concise history of emergence from Aristotle through Hegel and the British Emergentists to the near present, tracing the twists, turns, and reversals in how emergence has been understood over the centuries in disciplines including the mathematical physics of Whitehead, the psychology of James, and the evolutionary biology of Morgan. Conversations continued in recent decades around what these concepts mean and how they may be applied, with authors such as Polanyi (chemistry and biology), Sperry (neuroscience), and many others proposing definitions for a variety of concepts including strong emergence, weak emergence, causality, downward causation, irreducibility of emergence, properties that may be seen as emerging, epistemology, and ontology.

Among those many perspectives, it is worth noting that “weak emergence is sometimes called ‘epistemological emergence,’ in contrast to strong or ‘ontological emergence’” (Clayton, 2006: 8), a perspective supported in the present paper. Also linked with those ideas, Morgan suggests that causality between components occurs on a horizontal level while, in contrast, there are discontinuities between levels of emergence. Those emergent levels of reality differ from one another in substantial ways (ibid: 12). Morgan’s work lays the foundation for the present piece, which builds on it by providing a way to measure those levels as structures of conceptual systems and so support a more scientific understanding of levels.

Within emergentism, the notion of causal closure seems particularly relevant to a variety of philosophical debates, including the mind-body problem. Some suggest that all physical events are caused by and result from other physical events (that is, the physical domain is causally closed), reductively arguing, for example, that the mental domain is reducible to the physical domain (cf. Kim, 1993). Others suggest that physical events may be caused by (and/or result from) mental events (Lowe, 2000) and, building on evolutionary theory and complexity perspectives, see such things as meaning, language, and social norms as existing on an apparently different level from the physical (cf. Watanabe & Smuts, 2004). Sparacio (2003) argues that the mental is a higher-level, emergent feature of the physical because it has different properties and so has a different ontological status. Similarly, Gabbani (2013) provides an excellent epistemological critique of Kim’s causal closure.

Philosophical conjectures, conversations, and concerns continue to this day around issues such as emergence, causal closure, and the mind-body problem. Despite extensive philosophical investigation into the emergentist notion of causal closure, few papers even attempt to provide an effective definition of it, to say nothing of a way to measure it. This is a critical gap that must be addressed if we are to make progress.

Because we have developed methods for measuring the structure of theories on one level (concepts connected by causal relationships), our research suggests that it may be possible to develop measures of the structure of theory on other levels, and so move emergentism a step closer to becoming a science rather than an increasingly fragmented confusion of philosophical expositions.

Wallis (2014a) suggests that we may improve our understanding of theory, and how we may create more useful theory, by understanding where those theories fit on some scale of abstraction and finding how “theories at one level of scale (e.g., apples) are somehow nested within theories of another scale (e.g., fruit). The connection between levels, then, might be understood as an emergent property” (p. 196). However, that view seems to suggest that there is only one scale, running from the highly abstract down to the very concrete. In contrast, from some hypothetical “satellite view,” we limited humans seem to see, experience, and react to levels and such differences (even when we create them ourselves). So, there may be some difficulty in effectively identifying what those levels (and their implied boundaries) are. For example, in much the same way that a CEO may knock on the janitor’s door (and vice versa), levels may seem permeable, insubstantial, or subject to changes in perspective. Nested dolls seem invisible until they are unpacked, at which point they become clear.

Terms such as levels and nested are also applied to conceptual systems and/or blends of physical and conceptual systems. For example, “More recent developments assert that knowledge acquisition is a multilevel nested process” (Zhao & Anand, 2009, as cited in Tian, Li, & Wei, 2013). And, to the extent that embeddedness might be understood as a kind of level, “Evaluators can thus embed theories within one another in the same way that systems are embedded within each other” (Westhorp, 2012: 411).

Although neither of those statements can be said to be inaccurate, they do seem to leave out a critical consideration. Specifically, how might we know whether we are nesting, embedding, or leveling theories in the best possible way? How do we know that our theories have the same number of levels as our reality?

The implications for the social/behavioral sciences are profound. If we can develop a rigorous, reasonably objective framework for understanding and creating better theories, where the levels of our theories better match the levels of our larger world and policies, that new perspective may serve to accelerate the advance of knowledge, science, and practice in a world that desperately awaits effective answers to wicked problems.

This is important because theories that are merely said to have a useful number of levels might be arranged improperly and so provide false or misleading insights. It is one thing to say that one has all the parts needed for a system to work effectively, but something very different to say how those parts must be connected for the system to work effectively. More generally, “There are credible and rather urgent reasons to believe that we must manage and mobilize our knowledge more effectively” (Wexler, 2001: 262), where theories may be understood as a kind of formalized or explicit knowledge. By understanding levels, we may learn how to determine whether our conceptual models are complete, and so stop working on them and start using them to the maximum of their potential benefit (Gonzales, 2001).

Within the present paper, one goal is to better understand “how connected” levels of theories are; and, by providing some measurement of those relationships, provide a clearer path for creating more useful theories for practical application. Another goal is to do that in a way that is more accessible to a wider range of scholars, practitioners, and students for evaluating and improving theories.

This exploration is of particular importance when our field is striving to create theories that effectively represent systems in the physical world. If physical-world systems have levels, those levels should be reflected in the theory as well. Otherwise, the conceptual system provides only a poor representation of the physical system and becomes relatively useless or misleading. Thus, by better understanding our conceptual systems, we may better understand our physical systems (Westhorp, 2012). Indeed, “single level models are likely to overlook relevant interactions” (Bachmann, Engelen, & Schwens, 2016: 310).

This is important because, according to Wexler (2001), knowledge maps, when created and used appropriately, support organizational success and provide a competitive advantage. They do so by providing economic returns (higher income), structural returns (improved organizational processes, learning, communication, and knowledge), cultural returns (including trust, socialization of new organizational members, shared values, and the ability to predict and adapt to change), and knowledge returns (improved organizational memory, identification of where research is needed, clarified distribution of accountability and rewards, shared vocabulary and communication, and learning for planning).

The importance of this topic is also reflected in a recent special issue of Chaos (2016, Vol. 26) titled “Complex Dynamics in Networks, Multilayered Structures and Systems.” That issue, however, is aimed at the more mathematically inclined. The present paper, in contrast, focuses on the relational or qualitative aspects of levels, specifically within conceptual systems, while drawing on examples from a variety of disciplines.

This investigation has implications for interdisciplinary studies as well because we might see each discipline as on a separate level from the others. Or, perhaps, some disciplines (certainly sub-disciplines) might be viewed as nested within one another. By better understanding those relationships, we may expect to improve the ability of theories to address complex problems requiring multidisciplinary solutions.

If we live in a world where our systems have levels, that world would best be understood and engaged if our conceptual systems also had levels. However, our understanding of those levels seems to lack rigor, with an attendant likelihood of confusion. So, in this paper, we seek to discover new insights into the logical, systemic structure of conceptual systems, with a focus on the levels found in theories, plans, and models. With those insights, we anticipate being able to develop theories that will be more effective in practical application for reaching specified goals.

After providing some definitions, we will explore the notion of levels from a number of different perspectives. Following those, we will provide suggestions for building better theories (more useful in practical application for reaching desired goals).

Definitions

A system may be defined as “a set of elements in interaction” (von Bertalanffy, 1968), which “...can be either physical or conceptual, or a combination of both” (https://sebokwiki.org/wiki/System_(glossary)).

This structural similarity between physical and conceptual systems allows us to draw close parallels between them. While one might argue that there are many other ways to categorize systems (e.g., psycho-social, biological), for the present paper, for ease of representation and comprehension, we will use the terms “social systems” or “physical systems” (the latter including social systems) to represent all non-conceptual systems, with the understanding that many overlapping and intertwining relationships among systems remain to be worked out later.

“Generally, a conceptual system is any form of theory, theory of change, theory of the middle range, grand theory, model, mental model, policy, etc.” (Wallis, 2016a: 579). A theory may be understood as “An ordered set of assertions” (Weick, 1989: 517) such as a set of sentences, propositions, or hypotheses. Within each proposition may be found conceptual elements (typically nouns) and interactions (typically verbs).

Here, for the sake of clarity, we will present most theories abstractly as diagrams or (more simply) “maps” as is sometimes done for mind maps, concept maps, knowledge maps, maps, diagrams, causal loop diagrams, practical maps, logic models, program models, semantic networks, and so on.

The components of those conceptual systems will be generally referred to as concepts or variables while the connections between concepts will be generally referred to as causal relationships or connections (represented on diagrams as arrows).

In the next section, we explore a variety of ways that differences in levels have been presented, as orienting background, before delving into emergence in greater depth.

Similarities and Differences

The term “level” has been applied to a variety of systems in a variety of ways. While a comprehensive review of all perspectives is beyond the scope of the present paper (a recent search in Google Scholar™ found over 800 articles mentioning “levels” published in Systems Research and Behavioral Science, alone) the present section will provide a range of orienting insights.

Whether we are talking about conceptual systems, physical systems, or a combination of the two, one difficulty is understanding how different parts or levels are related. Sometimes, those relationships seem quite close, as with two people standing together, linked by a common language and common interests; they talk and learn and have the possibility of collaborative action. Other times, those relationships seem more distant, as with a boiler providing heat in the basement of a high-rise, separated by hundreds of floors from a cell phone antenna on the roof providing telecommunication. Those differences (perhaps “making a difference”) are what we are investigating here.

In identifying fundamental systems processes, Friendshuh and Troncale (2012) identified “hierarchy” as an important isomorphy. Within that general term, they include “levels” and “subsystems” and the idea that one system may be “subsumed” by another.

Fractals also seem to exist with self-similar or self-same structure at different levels of scale (Mandelbrot, 1977). Also, Bateson’s “dual description” may be thought of as two levels of understanding, combined to create a third. However, drawing on Salthe, McKelvey (2004) suggests that dual descriptions are not sufficient, that three levels of understanding are a minimum.

As an example, Ward (2019) talks about using multiple models—“model pluralism”—to understand complex situations with theories that are at different levels in an effort to counter an apparent trend toward simplistic or “single factor” theories (Ward, 2014). Those levels, however, correspond with levels of analysis including (for understanding psychological depression) physiology, behavior, neurological, psychological, and phenomenological (Ward & Clack, 2019). So, the conceptual system is simply a reflection of the physical system—or more accurately, of the assumptions about the physical system—and so subject to the same potential failings of those assumptions.

Higgins and Shirley (2000) discuss theories as existing on different levels: micro-range, middle-range, grand, and meta-level theory, each with a distinct scope and place on a scale of abstraction. Ward and Hudson (1998) also talk about three levels for theories. However, their understanding of levels seems to correspond with the complexity or simplicity of theories. That is, on the first level, theories describe a situation; on the second, theories analyze one factor; and on the third, theories include multiple factors. Research may be used to synthesize theories at lower levels and move them up to higher levels, particularly through “theory knitting” (Kalmar & Sternberg, 1988). Leeuw and Donaldson (2015) also suggest theory knitting as a way to bridge differing levels of theory (such as a theory about a policy and a theory about how a policy is made).

While the above perspectives may be useful in some ways, a problem arises when they are applied to theories at differing levels of abstraction. For a simple example, one theory might address “apples” while another addresses “fruit.” While there appears to be an overlap between apples and fruit (suggesting the opportunity to knit together two theories containing those two concepts), those concepts differ on a scale of abstraction and so should not be located within the same theory lest confusion arise (Wallis, 2014a). For example, it would be awkward to say something like “having more apples causes us to have more fruit” because apples are a kind of fruit, which gives the statement a tautological aspect. Similarly, because there is an ontic overlap between apples and fruit, they should not be contained in the same theory, due to the potential confusion of having a theory with non-orthogonal elements (Wallis, 2020d).
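The apples/fruit problem can be made concrete with a small check. The sketch below is illustrative only and is not part of IPA or any published procedure: it assumes a hypothetical taxonomy (`IS_A`, mapping each concept to a broader one) and flags any pair within a single theory where one concept is a kind of the other, which is one possible way to surface non-orthogonal elements.

```python
# Hypothetical taxonomy for illustration: each concept maps to a broader one.
IS_A = {"apples": "fruit", "fruit": "food"}


def ancestors(concept, is_a):
    """Collect every broader concept above `concept` in the taxonomy."""
    found = set()
    # Stop when the taxonomy runs out or loops back on itself.
    while concept in is_a and is_a[concept] not in found:
        concept = is_a[concept]
        found.add(concept)
    return found


def non_orthogonal_pairs(theory_concepts, is_a):
    """Return (narrower, broader) pairs that both appear in one theory."""
    concepts = set(theory_concepts)
    return sorted(
        (c, broader)
        for c in concepts
        for broader in ancestors(c, is_a)
        if broader in concepts
    )


print(non_orthogonal_pairs({"apples", "fruit", "price"}, IS_A))
# [('apples', 'fruit')]
```

Under these assumptions, a theory containing both “apples” and “fruit” is flagged, while one containing “apples” and “price” is not.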

Recognizing that kind of difficulty, in an introduction to a special issue on theory building, Klein, Tosi, and Cannella (1999) write about some challenges to building theories, including the confusingly large number of available theories, the researcher’s skill or comfort at moving between levels of scale, the complexity of larger theories, and the difficulty of finding an appropriate publication. However, their understanding of levels is also related to the systems under analysis (micro, meso, and macro) rather than to levels within the theories themselves. That view is similar to that of many who are interested in synthesizing theories relating to different levels of physical systems.

The study of system dynamics (SD) uses a variety of measures to evaluate which part of the theory is most influential on the behavior of the system represented by the theory. SD is used to model and study networks of multiple loops, and those “SD models are conceived as closed systems” (Schwaninger, 2011: 8979). However, SD has its share of limitations, including that the results of those measures are not always intuitively clear and that those measures might be difficult to determine in a highly complex model with many feedback loops (Hayward & Roach, 2017). Indeed, the very practice of simplifying the analytical process by focusing on one part of the theory (explained as “nested” in the theory as a whole) may be seen as limiting an SD understanding of the model (Saleh, Oliva, Kampmann, & Davidsen, 2010).

Interestingly, SD looks at the “distance” between nodes as an indicator of their similarity or difference or to show the degree of influence one node has upon another (McGlashan, Johnstone, Creighton, de la Haye, & Allender, 2016). That distance might be thought of as one way to measure the levels of difference between concepts.
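One simple reading of “distance” between nodes is the number of arrows on the shortest causal path from one concept to another. The sketch below assumes that reading (a plain breadth-first search over a directed map); it is not necessarily the measure used by McGlashan et al., and the concept names in `CAUSAL_MAP` are hypothetical.

```python
from collections import deque

# Hypothetical causal map: each pair (cause, effect) is one arrow.
CAUSAL_MAP = {
    ("funding", "staffing"),
    ("staffing", "outreach"),
    ("outreach", "enrollment"),
    ("funding", "outreach"),
}


def causal_distance(edges, source, target):
    """Return the number of arrows on the shortest directed path from
    source to target, or None if target cannot be reached."""
    successors = {}
    for cause, effect in edges:
        successors.setdefault(cause, set()).add(effect)
    seen = {source}
    queue = deque([(source, 0)])
    while queue:
        node, dist = queue.popleft()
        if node == target:
            return dist
        for nxt in successors.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, dist + 1))
    return None


print(causal_distance(CAUSAL_MAP, "funding", "enrollment"))  # 2
print(causal_distance(CAUSAL_MAP, "enrollment", "funding"))  # None
```

Because arrows are directed, distance is not symmetric here: “funding” reaches “enrollment” in two steps, but nothing leads back.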

It has been noted that most “semiotic networks [as representations of knowledge] have a hierarchical structure, all self-organizing semantic networks actually consist of a number of smaller self-organizing semantic networks across different scales” (Klenk, Binnig, & Schmidt, 2000: 152). However, it is not clear exactly what those levels are, or how they emerge.

Nested

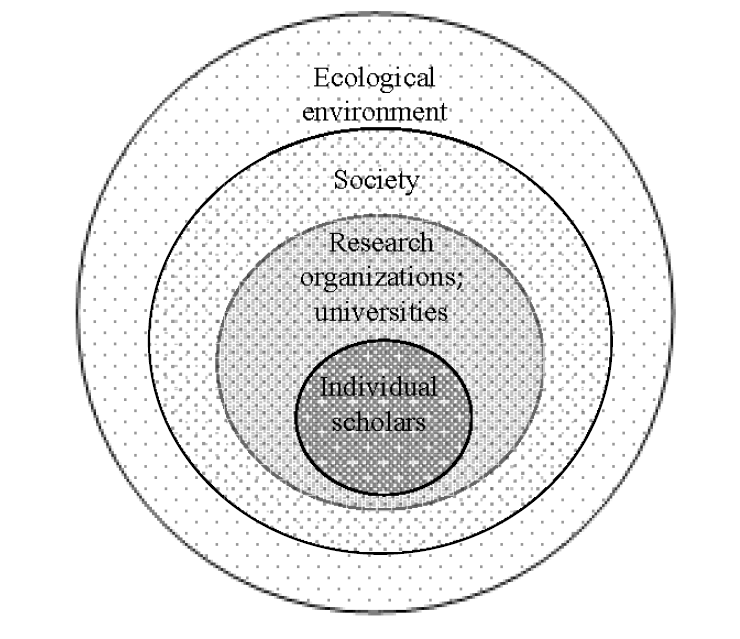

Another, closely related view of levels is found in the idea that some systems may be understood as “nested” within other systems. For example, a program model (also known as a program theory) may be nested (in some sense) within the larger program or policy. Within a theory, complex concepts may be said to have a number of sub-concepts (Wallis, 2020d), which may be thought of as a nested relationship. Social network analysis (Hawe, Webster, & Shiell, 2004; Neal & Neal, 2013) may be understood as looking at nested and/or networked relationships at multiple levels. Within a story (fictional or not, or somewhere in between), sub-plots may be nested within the main story. Emotions may be nested within our bodies and minds. Figure 1 provides one diagrammatic example; there, Fink (2019) shows individual scholars nested within research organizations, nested in society, nested in the ecological environment.

Figure 1 Scholars nested within universities, nested within society, nested within ecological environment (from Fink 2019)

While this kind of nesting relationship serves to illustrate some relationships, it also (as with any presentation) de-emphasizes or ignores other relationships because each level is connected, in some way, to other levels. While it is not represented by Figure 1, individual scholars, for an example, are intimately connected with the ecological environment through processes such as living, breathing, and eating.

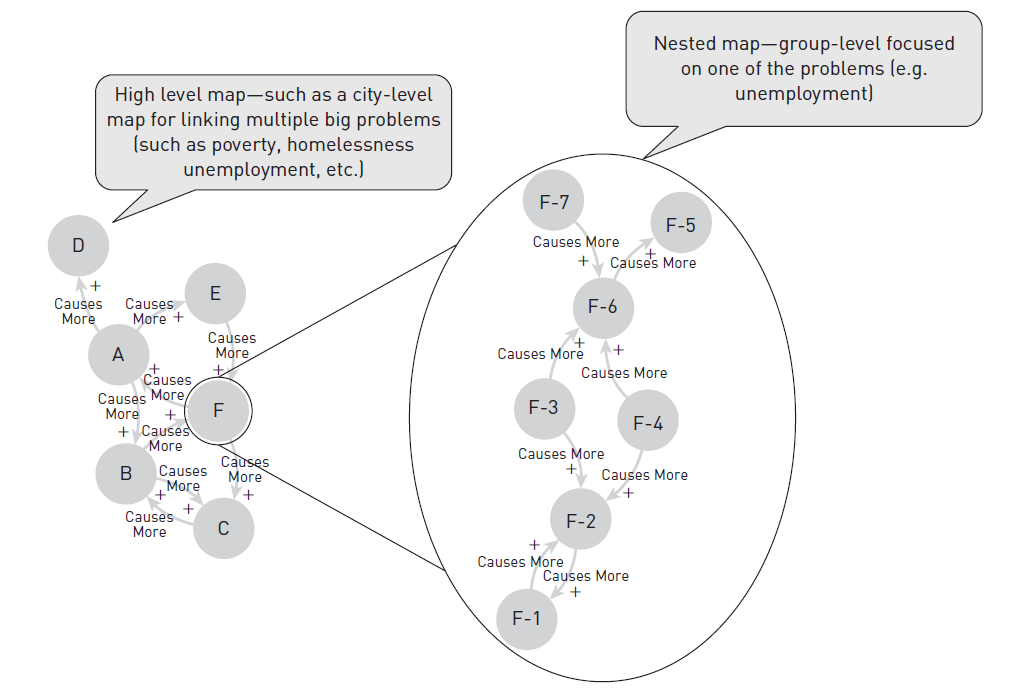

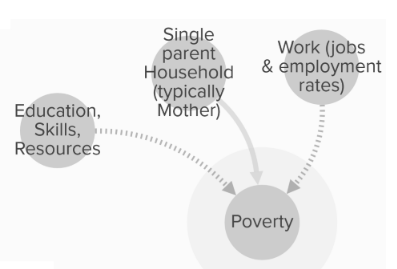

Just as individual people are nested within teams, teams within departments, and departments within organizations, we can also think of knowledge maps (as a kind of conceptual system or theory) as nested within other knowledge maps. For example, a number of stakeholder groups within a city might collaborate to create a knowledge map showing the relationships between the major problems they face (e.g., poverty, unemployment, homelessness). While that high-level map might show some linkages between those issues, it might not be very useful for a team focused on feeding people on the street. So, each team might create a map that is more relevant to its own level of operation. Such an operational map could be seen as nested within (or at a different level from) the higher-level map, as seen in Figure 2.

Figure 2 Nested map within a higher level map—from “Practical Mapping for Applied Research and Program Evaluation” (Wright & Wallis, 2019: 222)

While these may be seen as nested, they may also be seen as existing at different levels of some organization. So, as noted above, the boundaries are quite permeable or indeterminate, having been created by intuition or biased observation. Therefore, some clarity is needed.

The overlap, or interplay, between physical and conceptual systems threatens to confound us. For example, Ohm’s law of electricity (a kind of theory) may be nested within a team of engineers who are hierarchically working under the direction of a person who uses Situational Leadership (a kind of theory) to manage her team, while they are all part of a larger firm within an industry that is partially controlled by an informal group of elites who are using economic theories to advance their collective interests within the broader global bio/socio-economic system.

The general idea of recognizing different systems as existing on different levels, or as nested within one another, seems to make sense if those systems are disconnected from one another. That view, however, negates the most important assumption of systems thinking: that things are connected. Indeed, a boundary cannot be a point, a line, or a plane in three-dimensional space, but only a tetrahedron that identifies an area of space that may be seen as separate from another (McNamara, 2009). Therefore, we must develop a more nuanced understanding of how our conceptual systems are connected with one another, as well as of the connections within each system; not in a simple, reductionist way, but in a complex, systemic way.

Tentative typology

The above collection of perspectives may seem somewhat kaleidoscopic in the many points and counterpoints provided. Some relationships seem fuzzy while others seem to exhibit a gap which may be represented as a lack of understanding. Other perspectives seem to suggest that levels may be seen as categories of things or that some boundary may exist between the levels. Another relevant perspective is that there may exist some kind of change or transformation that occurs between levels. And, finally, the question may be raised how we can understand complex issues using relatively compact theories; perhaps where complex concepts are enfolded with one another.

To clarify the many perspectives, the following sub-sections suggest a tentative typology for levels; each type building on the one before to build our understanding of levels in conceptual systems. While the following is neither comprehensive nor conclusive, we hope it will encourage conversations that will move the field toward greater clarity. More to the flow of the present paper, these types will serve to focus our attention on key perspectives to be developed in the following full section on emergence.

Type 1—“Fuzzy”

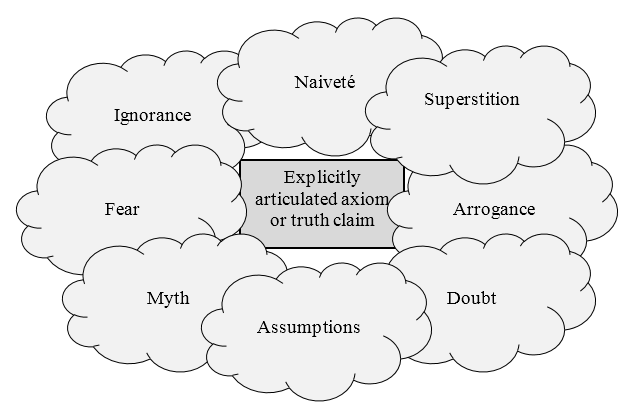

On the simplest level, we may see a difference in levels between that which is known and that which is unknown; or, partially known or inferred. This may be understood as the difference between our explicit and tacit beliefs as shown in Figure 3.

Figure 3 Box of explicit belief surrounded by cloud of fuzzy tacit beliefs.

Another way to understand a Type 1 conceptual system is as something where one or more concepts have some ontological expression, but are not expressed in a way that identifies epistemological relationship(s) with other concepts. That lack of connection draws perilously close to negating assumptions of systems thinking in that such conceptual systems are presented as non-systemic; that is to say, each concept is not clearly connected to any other. In contrast, a systemic perspective suggests that the existence of one concept implies the search for others.
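If a conceptual system is recorded as a list of concepts plus a set of causal arrows, the Type 1 condition described above can be spotted mechanically: a concept that takes part in no arrow has been named but not connected. The sketch below assumes that representation; the concept names are hypothetical.

```python
def unconnected_concepts(concepts, edges):
    """Return concepts that take part in no causal arrow (cause or effect)."""
    linked = {concept for edge in edges for concept in edge}
    return sorted(set(concepts) - linked)


TYPE1_CONCEPTS = ["trust", "communication", "morale"]
TYPE1_EDGES = {("communication", "trust")}  # communication -> trust

print(unconnected_concepts(TYPE1_CONCEPTS, TYPE1_EDGES))  # ['morale']
```

Here “morale” is expressed but not connected to anything, so, from a systemic perspective, it invites a search for the concepts it relates to.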

Type 2—“Gap”

The next type is a conceptual system with two (or more) concepts where no connections have been explicitly identified (or, at least, claimed, as one might do in developing research hypotheses), but where a gap between those concepts has been identified.

Based on the assumption that we live in a systemic universe where things are connected, Type 2 conceptual systems suggest a research process where we can use a kind of gap analysis (Wright & Wallis, 2019: 162) to indicate which direction to take with our research to better surface those unknowns. Using a practical map of circles (one for each concept) and arrows (for causal connections between the circles), we take our investigatory cue from those gaps (any space between circles that does not have an arrow) as an opportunity to conduct research. In short, if one person claims, for example, “A is true and B is true,” another might reasonably ask (or investigate) the potential causal relationship between A and B because a gap exists between A and B.
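The gap analysis just described can be sketched in a few lines, assuming a practical map is stored as a set of concepts (circles) and a set of directed (cause, effect) pairs (arrows). This is a minimal illustration of the idea, not the full procedure in Wright and Wallis (2019); each unordered pair with no arrow in either direction is reported as a gap.

```python
from itertools import combinations


def find_gaps(concepts, edges):
    """Return unordered concept pairs with no arrow in either direction.

    Each gap is a candidate research question: is there a causal
    relationship between these two concepts?
    """
    gaps = []
    for a, b in combinations(sorted(concepts), 2):
        if (a, b) not in edges and (b, a) not in edges:
            gaps.append((a, b))
    return gaps


# "A is true and B is true" plus one known arrow, A -> C.
print(find_gaps({"A", "B", "C"}, {("A", "C")}))  # [('A', 'B'), ('B', 'C')]
```

The pair A and B shows up as a gap, matching the example in the text: someone might reasonably investigate whether A and B are causally related.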

Type 3—“Categories” or “Implied Connections”

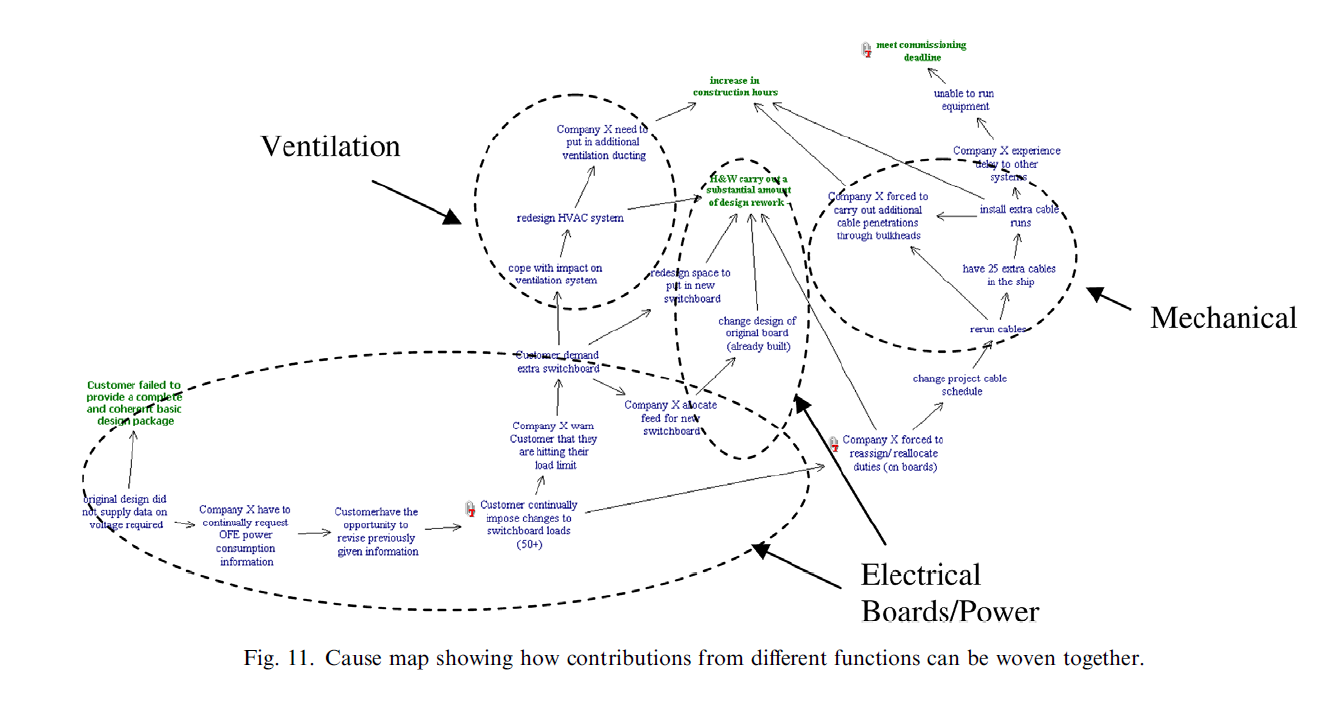

In a categorical view, some concepts within a theory are identified as being categorically similar or somehow related, as in Figure 4. Regardless of which connections between concepts are or are not expressed, a Type 3 conceptual system carries a clear implication that some connections exist, without necessarily explaining what kinds of connections they are.

Figure 4 Levels as may be seen in clumping (Howick, Eden, Ackermann, & Williams, 2008: 1079)

In Figure 4, much of the text is not visible due to space limitations. The point of the figure is to show that there are categories, or clumps (there, expressed as “Ventilation,” “Mechanical,” “Electrical,” and “Boards/Power”), implying that there are additional connections between the concepts contained within each of those categories.

Again, there is an implied call for research. This time, because the connections (and/or overlaps between the concepts) are more clearly implied, the implications for research are more explicit than in Type 2. Type 3, much as Type 1, provides some improved understanding. However, it is worth noting that categorization may be seen mainly as a kind of indicator of relevance; that is, it shows what categories or concepts may seem relevant to a particular person or stakeholder group in a particular situation. For example, managers may be interested in a category of theory called “management theory.” Such an approach is not highly effective, however, because it does nothing to tell us which management theory might be best or most useful.
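Compared with Type 2, a category narrows the search: shared membership in a clump implies that its members are connected, so the unstated pairs inside each clump are the most explicit research leads. The sketch below assumes clumps are stored as named sets of concepts; the category names echo Figure 4, but the member concepts and the stated arrow are hypothetical, since the figure’s detail is not legible here.

```python
from itertools import combinations


def implied_research_pairs(categories, edges):
    """For each category (clump), list member pairs whose connection is
    implied by shared membership but not yet stated as an arrow."""
    result = {}
    for name, members in categories.items():
        result[name] = [
            (a, b)
            for a, b in combinations(sorted(members), 2)
            if (a, b) not in edges and (b, a) not in edges
        ]
    return result


# Category names echo Figure 4; the member concepts are hypothetical.
CLUMPS = {
    "Ventilation": {"fan speed", "airflow", "duct pressure"},
    "Electrical": {"supply voltage", "fan speed"},
}
STATED = {("fan speed", "airflow")}

for clump, pairs in implied_research_pairs(CLUMPS, STATED).items():
    print(clump, pairs)
# Ventilation [('airflow', 'duct pressure'), ('duct pressure', 'fan speed')]
# Electrical [('fan speed', 'supply voltage')]
```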

Type 4—“Bounding”

For physical systems, each person’s world may be said to be bounded by what they understand and what they interact with so that boundaries are erected for the convenience of the observer / analyzer (Hutchins, 1995). That, however, is “other-bounding” while here, instead, we are looking at “self-bounding.”

Metaphorically, we may imagine a village as a grouping of buildings whose inhabitants interact more frequently with one another than with inhabitants of any other village. Where that village is situated on an island, it might be tempting to say that the boundary of the village is the water that surrounds it. That would certainly provide a convenient demarcation. Instead, however, the perspective presented here suggests that the boundary of the village is better defined by the close relationship of its buildings and the systemic interaction of its people.

Any time a set of concepts and their connections are described as a theory or model, a “line” has been drawn around them describing or delineating that as a conceptual system. We can think of a theory as existing within its own boundaries (Wallis, 2010); as being self-bounded or as a self-defined conceptual system. The boundary of such a conceptual system is made of the concepts contained/described within it.

In Figure 4, the entire diagram is presented as a complete theory. The boundary for that theory would be identified as the concepts contained within it. It would be a misuse of that theory, therefore, to apply it (except, perhaps, experimentally) to situations that do not include one or more of those concepts.

That is to say, a theory (or other conceptual system) should not be applied to understand and/or engage a physical system where the concepts of the theory are not reflected in the physical system; a theory of electronics should not be applied to situations where no electronics exist.

Type 5—“Transformative”

No part of a conceptual system is completely cut off from its surrounding systems because a system may be in some ways open and closed at the same time. For example, systems may be open to information but closed to matter (Roth, 2019). A simplistic view might suggest that my liver is closed to the open air. However, my liver still receives oxygen via my red blood cells after they have become oxygenated—after a transformation of those cells has occurred.

“The difference in stability of conceptual systems may be understood in terms of systemic structure which may be measured using Integrative Propositional Analysis (IPA), (Wallis, 2016a). Those with higher levels of structure are more open to data but less open to changes in conceptual components. Those with lower levels of structure are more closed to data, but more open to changes in conceptual components” (Wallis, 2020a).

A relatively straightforward way to evaluate levels is to differentiate between linear, non-transformative concepts. However, such representations would only be found in relatively immature theories. A more useful and sophisticated measure of difference would be based on transformative changes. That is, for conceptual systems, we may understand theories as having different levels where the path of causality is interrupted by transformation.

For example, returning again to Figure 2, which shows one map nested within another, the causal path from A to E is direct, with no transformation. In contrast, the causal path from F-7 to F-5 must pass through F-6, which transforms the nature of F-7. Naturally, from a systems perspective, we would look with suspicion on claims that more A causes more E in a linear fashion. However, it serves as an adequate example of causal distance.

Alternatively, we might look at that same figure and see the “simple” concepts or non-transformative concepts (A, D, E, F-1, F-3, F-4, F-5, F-7) as existing on one level of understanding and the transformative concepts (B, C, F, F-2, F-6) as existing on another.

So, another type of boundary is seen where each concept within a theory is structurally defined by the other concepts. For example, in Ohm’s Law, the measure of volts is defined by the measures of amps and ohms; each of the three is transformative, derived from the other two (Figure 6).

Type 6—“Unfolding/Enfolding”

Another approach suggests a kind of enfolding in the structure of theories. Enfolding may be seen as similar to developing “subroutines.” Rozin (1976), for example, suggests that as children learn they are also organizing their knowledge into subroutines which makes mental tasks more efficient. Unfolding may be understood as a more rigorous/formal version of “unpacking.” For example, using a simple phrase such as “I wrote the document on the cloud” refers to the use of personal memory, cognition, and typing skills along with a vast array of technological systems including computers, telecommunication equipment, and servers (note, each of those things/processes mentioned may also be unpacked).

Here, however, we look at folding and unfolding in a more formal/rigorous way. For example, in the structure A → B ← C, we might see B as the enfolded concept while A and C are the unfolded concepts. Importantly, that is a “transformative” structure (Wallis, 2016b), indicating that the combination of A and C has resulted in something new.
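The A → B ← C structure can be represented as a small list of causal links. The Python sketch below is illustrative only: it assumes (our simplification, not a published IPA rule) that a concept counts as transformative, or enfolded, when it has two or more incoming causal arrows, and that "unfolding" a concept means listing its direct causes. The names `causes`, `transformative_concepts`, and `unfold` are hypothetical.

```python
from collections import defaultdict

# Hypothetical edge list for the structure A -> B <- C, as (cause, effect) pairs.
causes = [("A", "B"), ("C", "B")]

def transformative_concepts(edges):
    """Return concepts with two or more incoming causal arrows.

    Assumption: 'transformative' is approximated here as in-degree >= 2,
    i.e. a concept concatenated from several other concepts.
    """
    in_degree = defaultdict(int)
    for _cause, effect in edges:
        in_degree[effect] += 1
    return sorted(c for c, n in in_degree.items() if n >= 2)

def unfold(concept, edges):
    """Return the concepts directly enfolded in `concept` (its direct causes)."""
    return sorted(cause for cause, effect in edges if effect == concept)

print(transformative_concepts(causes))  # ['B']
print(unfold("B", causes))              # ['A', 'C']
```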

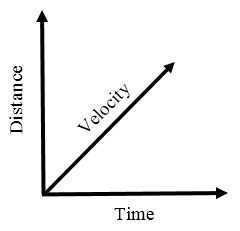

For a more concrete example from physics, velocity is equal to the distance travelled over a length of time (V=Δd/Δt). To lessen the cognitive load, one might simply ask, “How fast are you going?” instead of asking “what is your change in distance divided by your change in time?” Answers such as “I’m doing sixty” are likely to be clearly understood. The concepts of time and space are enfolded into the concept of velocity.

Figure 5 Velocity, Time, and Distance as folds of one another
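In programming terms, enfolding resembles the "subroutines" mentioned above: a function hides its inputs behind a single name. The Python sketch below is only an analogy under that assumption; the function name `velocity` and the choice of miles and hours as units are our own.

```python
def velocity(delta_distance, delta_time):
    """Enfolded concept: V = Δd / Δt."""
    if delta_time == 0:
        raise ValueError("elapsed time must be non-zero")
    return delta_distance / delta_time

# Unfolded view: the caller reasons explicitly about distance and time.
miles_travelled = 30.0
hours_elapsed = 0.5

# Enfolded view: only the single concept "speed" is carried forward.
speed = velocity(miles_travelled, hours_elapsed)
print(f"I'm doing {speed:.0f}")  # I'm doing 60
```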

For a more complex example, consider one of Einstein’s ideas. According to Okun (1989), energy, momentum, and mass are related by $E^2 - p^2c^2 = m^2c^4$, which for a body at rest ($p = 0$) reduces to $E_0 = mc^2$.

Here, E = energy, c = the speed of light, m = mass, and p = momentum. That momentum has a formula of its own, expressed as $p = vE/c^2$, where v = velocity.

We might see the second formula (for momentum) as nested in the first. We may also see “p” as the enfolded version of v · E/c2. It gives pause to imagine how many things might be enfolded in our assumptions; just waiting to be unfolded so that we may develop a more nuanced and useful understanding of them.
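As an illustrative check of this nesting, the Python sketch below unfolds p back into the outer formula and confirms numerically that the two are consistent. It assumes the standard energy-momentum relation $E^2 - p^2c^2 = m^2c^4$ and the standard total energy $E = \gamma mc^2$ with $\gamma = 1/\sqrt{1 - v^2/c^2}$ (neither of which is derived here), plus the illustrative values m = 1 kg and v = 0.6c; the function names are hypothetical.

```python
import math

C = 299_792_458.0  # speed of light in m/s

def energy(mass, speed):
    """Total relativistic energy E = gamma * m * c^2 (standard special relativity)."""
    gamma = 1.0 / math.sqrt(1.0 - (speed / C) ** 2)
    return gamma * mass * C ** 2

def momentum(speed, total_energy):
    """The nested formula p = v * E / c^2; 'p' enfolds v, E, and c."""
    return speed * total_energy / C ** 2

m = 1.0        # kg (illustrative value)
v = 0.6 * C    # 60% of the speed of light (illustrative value)
E = energy(m, v)
p = momentum(v, E)

# Unfolding p back into the outer relation: E^2 - p^2 c^2 should equal m^2 c^4.
lhs = E ** 2 - (p * C) ** 2
rhs = (m * C ** 2) ** 2
print(math.isclose(lhs, rhs, rel_tol=1e-9))  # True
```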

By understanding how concepts may be enfolded and unfolded, we may develop theories that are more parsimonious and easier to use while still retaining the requisite variety needed for dealing with complex situations.

Levels of Emergence

While the above section and sub-sections provide an orienting backdrop on a number of ways we might interpret levels, this section takes a much deeper dive into how we may understand different levels based on the idea of emergence.

Briefly, according to Page, there are three kinds of emergence. Simple emergence is obtained by combining parts in an orderly way to reach a predictable outcome. Weak emergence is less predictable than the simple, while strong emergence describes unexpected results that are completely unpredictable (https://sebokwiki.org/wiki/Emergence).

This view dovetails neatly with Sillitto et al. (2017) who suggest that a system may be defined as “A collection of possibly interacting, related components that exhibits emergence” (p. 13).

It is reasonable to infer that when we see more emergence occurring, we can be increasingly certain that we have a better understanding of some system. Although, on a side note, we should specifically disregard general claims such as “everything is emerging all the time” because that does not provide a good/useful explanation of what is emerging, or from what the emergence is occurring, or where we might explore to generate greater understanding.

When viewing a conceptual system, simple emergence may be seen in a concatenated logic structure as in Figure 2, where concept F-6 (as one example) is transformative, concatenated from F-7, F-3, and F-4. In that theory, F-6 is a predictable outcome. For a simple example, in a factory setting, having more workers, more raw material, and more tools will cause the production of end-product widgets.

While such a process seems reasonably clear, understanding unanticipated outcomes is not. There seem to be two reasons for such outcomes: first, because a theory is structurally weak; second, because it is structurally strong. A structurally weak theory may have too few concepts (and/or too few causal connections between them) to adequately represent the relevant situation in the physical world. Using a simple theory to understand a complex situation will lead to frequent surprises.

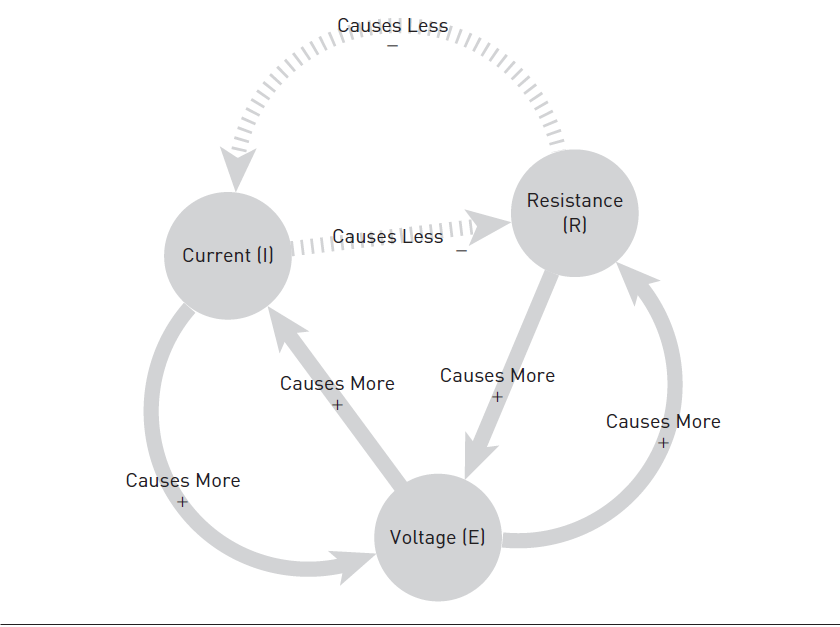

On the other end of the structural scale are highly systemic theories; the best of which have all of their component concepts included in loops (Wallis, 2019) and all of their concepts are transformative—concatenated from other concepts (Wallis, 2016a) such as Ohm’s Law (I=E/R). Using Ohm’s Law as a theory (represented diagrammatically in Figure 6), it may be seen that each concept, by itself, is perfectly predictable—but only if the other two are known. For example, one can calculate the resistance of a circuit if one knows the voltage and the current. The high level of systemic structure makes the theory highly useful in practical application.
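The practical value of this structure can be illustrated with a small Python sketch: given any two of voltage (E), current (I), and resistance (R), the third follows from E = I * R. The function name `ohms_law` and its keyword arguments are hypothetical; units are assumed to be volts, amperes, and ohms.

```python
def ohms_law(voltage=None, current=None, resistance=None):
    """Solve E = I * R for whichever of the three quantities is missing.

    Exactly one argument must be None; units are volts, amperes, and ohms.
    """
    given = (("voltage", voltage), ("current", current), ("resistance", resistance))
    missing = [name for name, value in given if value is None]
    if len(missing) != 1:
        raise ValueError("provide exactly two of voltage, current, resistance")
    if missing[0] == "voltage":
        return current * resistance
    if missing[0] == "current":
        return voltage / resistance
    return voltage / current

print(ohms_law(voltage=12.0, current=2.0))     # resistance: 6.0
print(ohms_law(current=2.0, resistance=6.0))   # voltage: 12.0
print(ohms_law(voltage=12.0, resistance=6.0))  # current: 2.0
```

Each quantity is predictable only when the other two are supplied, which mirrors the point made in the text.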

Importantly, therefore, we may say that one emergent property of theory is the usefulness of that theory for understanding and engaging physical world phenomena.

Figure 6 Ohm’s Law as a practical map (Wright & Wallis, 2019: 152)

With such a highly systemic theory (supported by sufficient data and applied in relevant situations) surprising or unanticipated outcomes will be minimal or nonexistent for the concepts included within the theory. Over the long run, however, the unanticipated outcomes are much more substantial. Because the theories are so useful in practical application, engineers are able to design computers, cell phones, cars, planes, and the plethora of technology that surrounds us. Those vast revolutions in technology and lifestyle emerged as a result of applying the many highly structured conceptual systems to change physical systems.

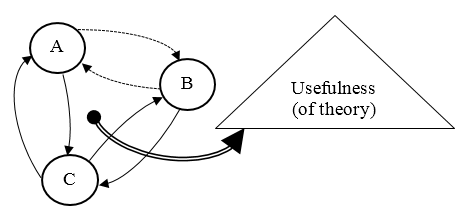

It is important to note that “Emergent outcomes, by definition, occur at a different level of a system than the level in which the original interaction occurred” (Westhorp, 2012: 411, emphasis added). Because current, resistance, and voltage all appear to be at the same level, we can understand emergence as something happening on a different level; represented diagrammatically in Figure 7.

Figure 7 Abstract example of highly systemic theory with one emergent property (usefulness) shown

While objective research may lead to highly systemic theories such as Ohm’s Law, there is also a subjective component concerning the context in which the theory may be applied. “We can combine the benefits of objective study from the modern era with the benefits of subjective study from the postmodern era by adopting a systems approach” (Laszlo & Laszlo, 2009: 7).

To summarize, there are two different mechanisms at work here: short-term predictability and long-term emergence. Whereas predictability may be thought of as a function of the interacting concepts at a single ontological level, emergence occurs between concepts at one level and results in something at a different ontological level.

We may see concepts with causal connections between them as being ontologically similar, perhaps on some scale of abstraction (Wallis, 2014a) and/or some scale of orthogonality (Wallis, 2020d). In contrast, the properties/things/concepts/components that are emergent seem to be on an ontologically different level than the concepts from which they emerged.

This perspective suggests a scale of emergence roughly analogous to the one proposed by Page (and generally accepted by SEBoK). At the lowest end, we would see a collection of concepts at varying levels on some scale of abstraction and/or orthogonality. Using such conceptual systems as knowledge is unlikely to result in predictable outcomes and similarly shows little opportunity for emergence. At the highest end, we would see the most emergence from a set of concepts that are all close to the same level of abstraction, orthogonal to one another, transformative (concatenated from other concepts in the theory), and all connected through causal loops.
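To make the two ends of this scale a little more concrete, here is a rough Python sketch that scores a causal map on two of the criteria named above: the share of concepts that are transformative and the share that sit in a causal loop. It is a simplified stand-in, not the published IPA procedure; treating "transformative" as "two or more incoming arrows," reading Ohm's Law as every quantity linked to the other two, and the function names are all our assumptions.

```python
from itertools import permutations

def build_graph(edges):
    """Map each concept to the set of concepts it causally affects."""
    graph = {}
    for cause, effect in edges:
        graph.setdefault(cause, set()).add(effect)
        graph.setdefault(effect, set())
    return graph

def in_loop(node, graph):
    """True if `node` can reach itself by following causal arrows."""
    stack, seen = list(graph[node]), set()
    while stack:
        current = stack.pop()
        if current == node:
            return True
        if current not in seen:
            seen.add(current)
            stack.extend(graph[current])
    return False

def structure_profile(edges):
    """Share of concepts that are transformative and share that lie in loops."""
    graph = build_graph(edges)
    in_degree = {concept: 0 for concept in graph}
    for _cause, effect in set(edges):
        in_degree[effect] += 1
    total = len(graph)
    transformative = sum(1 for c in graph if in_degree[c] >= 2)
    looped = sum(1 for c in graph if in_loop(c, graph))
    return {"transformative_share": transformative / total,
            "loop_share": looped / total}

# Ohm's Law read as every quantity linked to the other two (assumption).
ohm_edges = list(permutations(["E", "I", "R"], 2))
# A simple linear chain, typical of a structurally weak theory (hypothetical).
chain_edges = [("A", "B"), ("B", "C")]

print(structure_profile(ohm_edges))    # {'transformative_share': 1.0, 'loop_share': 1.0}
print(structure_profile(chain_edges))  # {'transformative_share': 0.0, 'loop_share': 0.0}
```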

While the present example has identified one concept (usefulness) as an emergent property, there may be other concepts emerging. The key point of this preliminary investigation is to suggest that theories should be constructed of concepts with clear delineation between levels on two dimensions. The horizontal dimension has concepts of the same ontological level linked with causal connections; the vertical dimension has concepts of differing ontological levels linked with connections representing relationships of emergence. It is also worth noting that the higher-level concept emerges only from a highly systemic theory as measured using IPA (cf. Wallis & Wright, 2019), not from a single concept or from sets of disconnected conceptual components (as may be seen in the lower-level “types” in the above section).

This is an important perspective for developing better theories because, “…in an absolute sense, the emergent products do not exist as such. They do, however, exist in the alternative (although deeply—complexity—related) macro-level description…” (Richardson, 2007: 7). That is to say, emergence seems to occur at every level. “Indeed, in SoSPT the entire range of observed natural systems from galaxies to societies are linked sets of hierarchical levels portrayed in an unbroken series of emergence events” (Friendshuh & Troncale, 2012: 14).

In short, anything and everything may be viewed as emergent. Parts of theories along with concepts and logical inferences, all exist in our minds and the literature as a jumbled interacting mass, from which more systemic theories may occasionally emerge.

Perhaps so; however, that does not mean we are doomed to be lost in a maelstrom of relative realities. It is still possible, and even desirable, to assign levels based on our perspectives. Importantly, however, we must recall that it is our understanding of emergence and ontological perspectives that we are concerned with here.

If we accept (tentatively, awaiting additional research and empirical validation) that we can avoid the conundrum of infinite regress in attempting to find some foundational perspective, that it is not “turtles all the way down” (cf. Meulenberg-Buskens, 1997) but instead, it is emergence all the way down, we can stop being distracted by “the turtles” and find a better focus for improving our theories.

Whether something appears as an emergent phenomenon, or not, depends on one’s perspective. For conceptual systems, that may become more confusing because one theory may contain many perspectives. This raises the need for very careful theory building to ensure that each theory stays on its appropriate level of emergence by focusing only on those perspectives that are of that same level of emergence (the same ontological level).

The ability to clarify emergent properties from a systems theoretic perspective helps to resolve the tricky business of understanding our world, because we don’t always know what we are looking at. For example, one might look at a group of people who work together and say it is a “team.” However, in some views of systems thinking, one might “draw a line” around anything and call it a system. So, calling a certain group of people a team is a kind of “shorthand” for all that is understood to be (and, quite possibly, not understood to be) a team. For example, according to https://en.wikipedia.org/wiki/Team, “As defined by Professor Leigh Thompson of the Kellogg School of Management, ‘[a] team is a group of people who are interdependent with respect to information, resources, and skills and who seek to combine their efforts to achieve a common goal.’”1 Yet, when we look at a group of people, we do not see their interdependence, resources, or skills. So, instead, it might be more useful to think of “team” as an emergent property of those interdependent people.

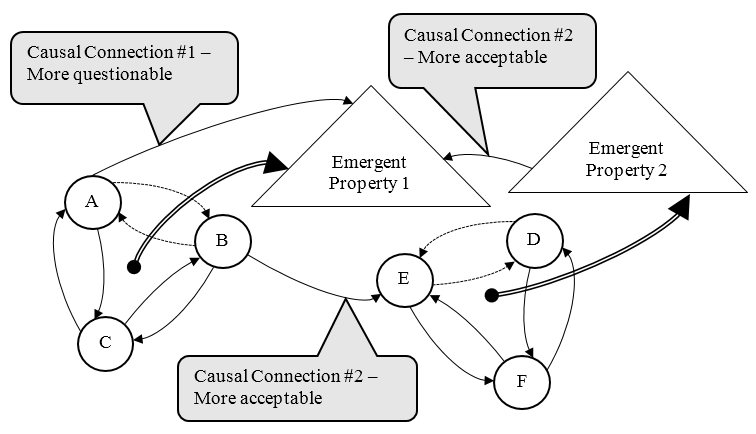

For a hypothetical example, in Figure 8, we might imagine that concepts A, B, and C represent Number of People, Interdependency, and Resources—all causally interacting in a way that results in the emergence of Team (or, perhaps, “team-ness”) as Emergent Property 1.

The insights in this section suggest new standards for representing theories. Such standards are needed to provide greater clarity, replacing the many ad hoc ways in which diagrams have been presented. Here, we draw a distinction between connections #1 and #2 presented in Figure 8 and tentatively suggest that theories should include only causal connections within ontological levels and only emergent connections between ontological levels.

Figure 8 Correct and misleading representations of causal connections within and between levels/scales of emergence
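The rule proposed above can be expressed as a simple consistency check. The Python sketch below assumes each concept is tagged with a numeric level and each connection is tagged "causal" or "emergent"; it also flags an emergent concept that arises from only one concept, following the point made in the next paragraph that emergence occurs from the interaction of multiple concepts. The concept names echo the hypothetical Team example of Figure 8, but the specific causal links among them, and the function name `check_levels`, are our illustrative assumptions.

```python
# Hypothetical leveled model based on the Team example (Figure 8).
levels = {
    "Number of People": 1,
    "Interdependency": 1,
    "Resources": 1,
    "Team": 2,
}
connections = [
    ("Number of People", "Interdependency", "causal"),
    ("Interdependency", "Resources", "causal"),
    ("Resources", "Number of People", "causal"),
    ("Number of People", "Team", "emergent"),
    ("Interdependency", "Team", "emergent"),
    ("Resources", "Team", "emergent"),
]

def check_levels(levels, connections):
    """Return a list of rule violations (an empty list means the model passes)."""
    problems = []
    emergent_sources = {}
    for source, target, kind in connections:
        same_level = levels[source] == levels[target]
        if kind == "causal" and not same_level:
            problems.append(f"causal link crosses levels: {source} -> {target}")
        elif kind == "emergent" and same_level:
            problems.append(f"emergent link within one level: {source} -> {target}")
        if kind == "emergent":
            emergent_sources.setdefault(target, set()).add(source)
    # Emergence should arise from the interaction of several concepts.
    for target, sources in emergent_sources.items():
        if len(sources) < 2:
            problems.append(f"{target} emerges from a single concept")
    return problems

print(check_levels(levels, connections))  # []
```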

In keeping with our understanding of a highly complex universe, it may be noted that an individual concept does not emerge from another individual concept; rather, emergence occurs from the interaction of multiple concepts. It is also certainly possible (indeed, it seems quite likely) that multiple concepts may emerge from multiple concepts. The difficulty lies in sorting them out in such a way that their causal and emergent relationships provide us with useful theories for practical application.

Diagramming Conventions

To more clearly communicate the understandings of scholars in an increasingly fragmented field, a set of standards is suggested here. Naturally, this is only a starting point; but one that scholars, practitioners, and editors might discuss at conferences, eventually leading to general agreement for the advancement of the field.

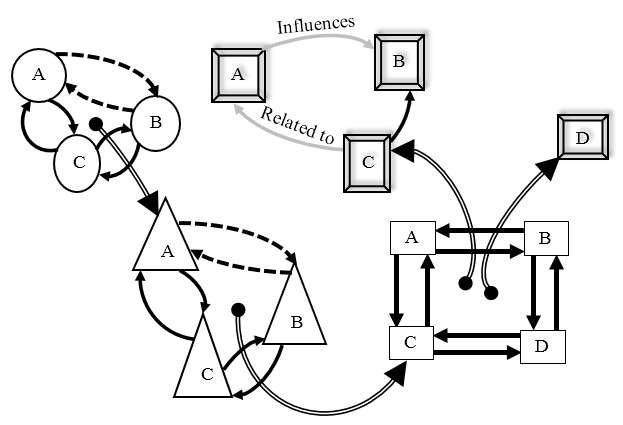

As Figure 9 shows, the shape of each conceptual component of a diagram might be changed to indicate its level on some scale of emergence. Here, triangles are seen as emergent properties of circles, rectangles are seen as emergent properties of triangles, and 3-D rectangles are seen as emerging from 2-D rectangles. Lines (with arrows indicating direction) may be shown as:

- Solid = “causes more” (may also have a “+” sign at head of arrow);

- Dashed = “causes less” (may also have a “-” sign at head of arrow);

- Double = “emergence”;

- Shaded (with descriptive label) = non-causal relationship.

Figure 9 Proposed norm for representing four levels of emergence within a single diagram
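One way to try these conventions in practice is to emit them as Graphviz DOT text. In the Python sketch below, the mapping of levels to the Graphviz shapes circle, triangle, box, and box3d, and of line types to DOT edge attributes (a solid or dashed line with a "+" or "-" head label, a double line drawn with the color list "black:invis:black", and a gray labelled line for non-causal relationships), is our own assumption about how Figure 9 might be rendered; the function and dictionary names are hypothetical.

```python
# Level on the scale of emergence -> Graphviz node shape (our assumption).
NODE_SHAPES = {1: "circle", 2: "triangle", 3: "box", 4: "box3d"}

# Line convention -> Graphviz edge attributes (our assumption).
EDGE_STYLES = {
    "causes_more": 'style=solid, headlabel="+"',
    "causes_less": 'style=dashed, headlabel="-"',
    "emergence": 'color="black:invis:black"',  # drawn as a double line
    "non_causal": "color=gray",                # shaded; requires a label
}

def to_dot(concepts, links):
    """Render concepts {name: level} and links (source, target, kind, label) as DOT."""
    lines = ["digraph theory {"]
    for name, level in concepts.items():
        lines.append(f'  "{name}" [shape={NODE_SHAPES[level]}];')
    for source, target, kind, label in links:
        if kind == "non_causal" and not label:
            raise ValueError("non-causal relationships need a descriptive label")
        attrs = EDGE_STYLES[kind]
        if label:
            attrs += f', label="{label}"'
        lines.append(f'  "{source}" -> "{target}" [{attrs}];')
    lines.append("}")
    return "\n".join(lines)

# Hypothetical example model.
concepts = {"A": 1, "B": 1, "C": 1, "Team": 2}
links = [
    ("A", "B", "causes_more", None),
    ("B", "C", "causes_less", None),
    ("A", "Team", "emergence", None),
    ("B", "Team", "emergence", None),
    ("C", "A", "non_causal", "is part of"),
]
print(to_dot(concepts, links))
```

The printed text could then be rendered with the Graphviz `dot` command (for example, `dot -Tpng`).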

The insights presented in this section offer fundamentally new thinking about the phenomenon of emergence with profound implications for improving our conceptual systems and accelerating our science to better understand and address the many problems facing our planet and our civilization.

As suggested by the typology developed in the previous section, emergence is not the only way we can recognize levels of difference. In the next section, we will delve into a perspectival approach to understanding levels in conceptual systems.

Levels of Perspective

The notion of perspective has been deeply explored in the literature across multiple disciplines. Common wisdom across those perspectives on perspectives seems to suggest that we should accept that there are many perspectives, each one important to some stakeholders, and that communities of stakeholders should strive to find common ground through techniques such as dialog and “dot voting.” As those topics are adequately explored elsewhere, the present paper instead strives to find new ways of understanding perspectives by looking at the structure of our conceptual systems.

Diagrammatically, let us begin with the idea that a theory may be represented by boxes (for concepts) and arrows (for causal connections). That theory represents some situation that is shared by different stakeholder groups. Each stakeholder group might look at that theory from a different perspective and, from each unique perspective, see their priorities as the parts of the whole that are perspectivally closer (Wallis, 2020d). Indeed, some concepts may appear so close and large that other concepts appear to be “hidden” behind them. Thus, much as in some stories or narratives, some parts of the theory are emphasized while others are de-emphasized. The same may also be true of causal connections between the concepts, where some connections are concealed and others emphasized depending on the viewers’ perspectives.

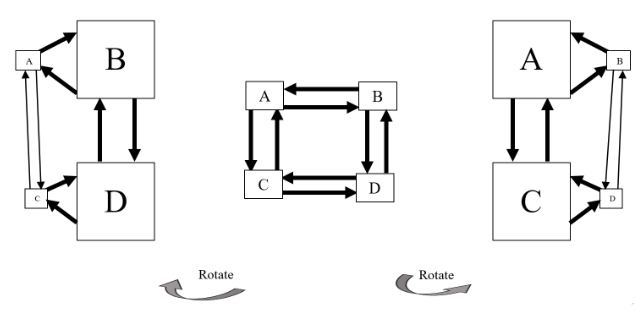

For a diagrammatic example, please consider Figure 10 where the central diagram represents the complete view of some situation, and the diagrams on either side represent the views of different stakeholder groups operating at different levels of the overall society.

Figure 10 One theory, rotated to emphasize different perspectives and so concepts - from (Wallis, 2020d)

The diagram on the left of Figure 10, we might say, represents the view of the BD group, while the one on the right represents that of the AC group. Each group prioritizes its own concepts while minimizing those of the other. Now, let us re-orient the diagrams further.

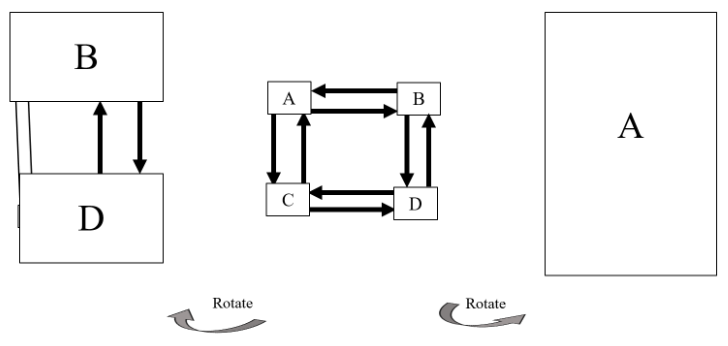

Figure 11 Conceptual rotation of a single model to represent sub-groups’ increasingly narrow perspectives and so foci.

Figure 11 suggests that the BD group is so focused on two concepts that they have lost sight of the others, except, in the diagram on the left of Figure 11, for a slight hint that there may be another concept. There are also connections (thin lines), but they are not seen as causal arrows; again, that knowledge is hidden. The A group (on the right-hand side of Figure 11) is so focused on their one idea/goal/concept that they see nothing else. This kind of simple model suggests a kind of imperviance (Wallis & Valentinov, 2016a) to thinking that may be overcome only with difficulty.

Operationally, this suggests a difficulty with the common approach to prioritizing where organizational participants brainstorm ideas, then use dot voting to determine where to focus their collective efforts. Such an approach may help focus the group on a few concepts (perhaps the group’s goals), but that seems reductionist because it is done at the cost of concealing other concepts and causal connections. This, in turn, suggests that a better approach to developing and clarifying knowledge would be to use causal knowledge mapping which would include a kind of gap analysis (Wright & Wallis, 2019: 162) to develop interdependent goals.

This view of views takes on a particular importance when attempting to discern causal relationships between concepts. For example, looking at Figure 12, from the perspective of concept A, concepts B and C may be seen as one level (or causal link) removed from concept A; while concept D is two levels removed.
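Counting how many levels (causal links) a concept is removed from A is a breadth-first search over the links. The Python sketch below reads Figure 12 as the undirected links A-B, A-C, B-D, and C-D; that reading of the figure and the function name `causal_distances` are our assumptions.

```python
from collections import deque

# Our reading of Figure 12, treating each link as undirected.
links = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]

def causal_distances(start, links):
    """Breadth-first search: number of links between `start` and each concept."""
    neighbours = {}
    for x, y in links:
        neighbours.setdefault(x, set()).add(y)
        neighbours.setdefault(y, set()).add(x)
    distance = {start: 0}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for nxt in sorted(neighbours.get(current, ())):
            if nxt not in distance:
                distance[nxt] = distance[current] + 1
                queue.append(nxt)
    return distance

print(causal_distances("A", links))  # {'A': 0, 'B': 1, 'C': 1, 'D': 2}
```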

Consider a stakeholder group for whom A is perspectivally near and D is far; so far that they do not see or recognize D (that lack of recognition is represented by shading). Nor do they recognize the causal links directly related to D (B↔D and C↔D). So, instead of an accurate understanding, the stakeholder group may incorrectly infer that other causal connections exist, because of D’s interactive effects on the visible levels (B, C), as indicated by the shaded dashed arrows. Naturally, such inferences are likely to be inaccurate because A does not have data about D, nor does A understand the causal logical connections between D and other components. Thus, A “fills in” the blank spot on the map with assumptions of causal connections.
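The places where such "filling in" is likely can be located mechanically: pairs of visible concepts that share a hidden neighbour but have no direct link between them. This Python sketch uses our reading of Figure 12 (links A-B, A-C, B-D, C-D, with D hidden); it is an illustrative heuristic rather than a method from the cited work, and the function name `hidden_bridges` is hypothetical.

```python
from itertools import combinations

# Our reading of Figure 12, treating each link as undirected.
links = [("A", "B"), ("A", "C"), ("B", "D"), ("C", "D")]

def hidden_bridges(links, hidden):
    """Visible concept pairs that share `hidden` as a neighbour but are not directly linked.

    These are where an observer who cannot see `hidden` might infer
    a direct connection that does not actually exist.
    """
    neighbours = {}
    for x, y in links:
        neighbours.setdefault(x, set()).add(y)
        neighbours.setdefault(y, set()).add(x)
    touching = sorted(neighbours.get(hidden, set()))
    direct = {frozenset(link) for link in links}
    return [(x, y) for x, y in combinations(touching, 2)
            if frozenset((x, y)) not in direct]

print(hidden_bridges(links, "D"))  # [('B', 'C')]
```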

Figure 12 Inferred causal connections (dashed arrows) based on lack of understanding of actual causal connections

Such a “knowledge fog” may apply also (perhaps more so) to differences between ontological levels of emergence. So much so, that with sufficient distance, events may appear random or unpredictable.

Thus, we have reductionist thinkers (e.g., Kim, 1993) striving to explain the mind as reducible to the body, because they are using only causal connections to explain connections that would be more appropriately explained with connections representing emergence.

For a more concrete example, one stakeholder group may fill a gap in understanding with the idea that deities are causing lightning (instead of naturally occurring atmospheric conditions). Their mistaken assumption may, in turn, lead to behaviors that are counter-productive (e.g., performing ritual sacrifices to appease some deity instead of installing lightning rods). This perspective on perspectives has profound implications for policy, planning, knowledge use, and decision making.

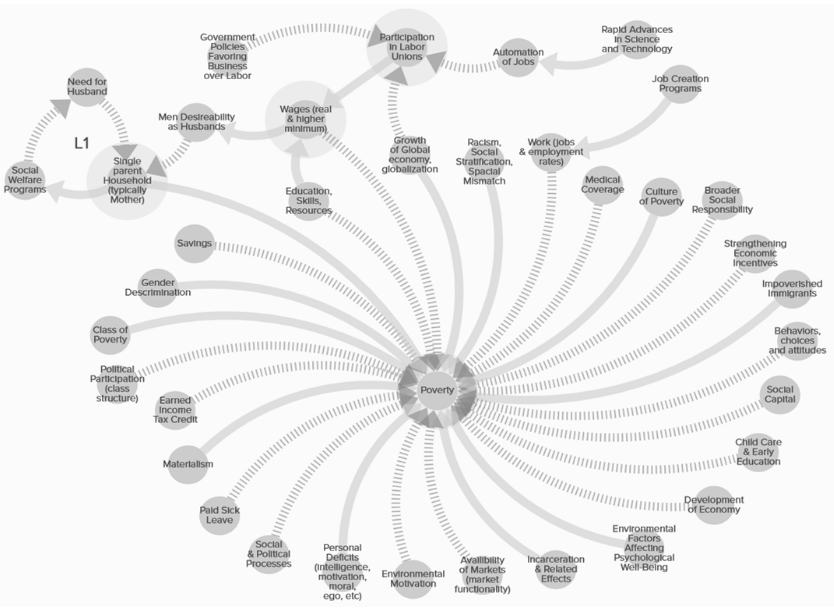

For a more practical example, Figure 13 shows ten theories of poverty synthesized into one (Wallis & Wright, 2019). This represents a shared understanding of poverty according to the ten stakeholder groups (from five academic disciplines and five organizations across the political spectrum).

Figure 13 Interdisciplinary synthesis of 10 theories of poverty (Wallis & Wright, 2019). To read details more clearly, please visit: https://kumu.io/Steve/caps2018

In contrast, the view from only one of those groups may be seen in Figure 14.

Figure 14 Understanding of poverty from one stakeholder group’s perspective (concepts and connections are also in Figure 13)

Believing that the problem is fully understood, this group (with their single stakeholder perspective) may begin to take action on the problem of poverty. They may also argue against the actions of other stakeholder groups, believing that the other groups have misrepresented the situation. In contrast to either a single view or the collection of all views, the present paper, along with the studies on structure cited throughout, suggests a range of actions to develop more effective theories/policy models as conceptual systems, enabling stakeholders to address the issue more successfully and to collaborate with other stakeholder groups.

Hutchins (1995) uses the term “complexity shifting” to describe how a group shifts the difficulty or complexity of a problem to an expert (for whom the problem appears less complex) or to a tool (that may be used to resolve the problem more easily). By using the techniques as discussed in previous sections, we are shifting the complexity of our theories to better address seemingly impenetrable problems of society and policy. These techniques may also suggest how we improperly reduce our cognitive load (in the short run) by ignoring theory and making decisions based on limited “moral” perspectives, simplistic theories, false assumptions, or even random choice.

Such improper reductions, however, are unlikely to result in desirable situations; they are not sustainable (Wallis & Valentinov, 2016a, 2016b, 2017). The view of Figure 14 is only about 10% of the view of Figure 13 reflecting a much more limited understanding of the poverty problem. In short, while perspective-based approaches to identifying levels (e.g., listing priorities or describing hierarchies) may have some benefit for improved understanding, they are as likely to conceal as reveal.
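The kind of comparison made here between Figure 14 and Figure 13 can be expressed as simple coverage ratios. The Python sketch below assumes each model is given as a list of (cause, effect) links, so isolated concepts are not counted, and reports the share of the shared model's concepts and connections that a single group's model contains. The models and the function name `coverage` are hypothetical toy examples, not the poverty data.

```python
def coverage(full_links, partial_links):
    """Share of the full model's concepts and connections present in a partial model."""
    full_concepts = {c for link in full_links for c in link}
    partial_concepts = {c for link in partial_links for c in link}
    full_set = set(full_links)
    return {
        "concepts": len(partial_concepts & full_concepts) / len(full_concepts),
        "connections": len(set(partial_links) & full_set) / len(full_set),
    }

# Hypothetical synthesized model and one group's narrower view of it.
shared = [("A", "B"), ("B", "C"), ("C", "D"), ("D", "A"), ("C", "E")]
one_group = [("A", "B")]
print(coverage(shared, one_group))  # {'concepts': 0.4, 'connections': 0.2}
```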

This perspective on perspectives emphasizes the need to include multiple stakeholder views when developing theories, policies, strategies, and other conceptual systems. This relates to dual descriptions (Bateson, 1979) and conversations to build a socially constructed reality (Dent & Umpleby, 1998). This is important because it is the observer who determines the complexity of the system (Zelmer, Allen, & Kesseboehmer, 2006). Or, to put it another way, “A ‘system’ is a set of variables selected by an observer” (Umpleby, 2009: 4). So, it may be more accurate to state that observers, consciously and/or unconsciously through their perspectives, determine the appearance of complexity.

Those differing views may lead to conflict, especially if the views represent simple conceptual systems. For example, two groups in the same nation may each insist that their shared nation move toward a goal that excludes the other group’s goal, thus leading to conflict.

Adding perspectives to expand the number of concepts in the theory stands in contrast to “normal science,” which tends to suggest investigating the fewest alternatives with an eye to choosing “the best” one. A systems/structural perspective, in contrast, suggests expanding the shared model. So, instead of looking at a simplistic model of two concepts (A, B) and pushing toward a choice of “A or B,” we should seek to improve the model by adding C, which may be a direction that will benefit more levels of society (Beckmann, Hielscher, & Pies, 2014; Hielscher, Pies, & Valentinov, 2012).

This diagrammatic approach to understanding perspectives, and how those perspectives affect our understanding across and between levels of conceptual and social systems, may be useful for consultants and other change agents in explaining to their clients how we often do not understand the limits of our own understanding.

Before moving on, it is worth stressing some key concepts from this section. While all perspectives may be useful to some extent, they (as conceptual systems like theories, models, strategic plans, etc.) should be thought of as starting points for further exploration. Adding more concepts to those perspectives will improve them when the concepts are of the same ontological level and when causal connections between the concepts are identified. More importantly for this new perspective on emergence, concepts on differing ontological levels should be linked with connections showing emergence; however, such connections are more valid when they are understood as emerging from a highly systemic conceptual system as measured using IPA. This process, applied with a reasonable amount of rigor and careful consideration seems likely to impel our field to new ways of thinking and set the stage for accelerating the advance of science.

Future Directions

As this paper is somewhat exploratory in nature, it is as much about the future as the past. While the limitations of the human mind are insurmountable (Wallis & Valentinov, 2016b), we may expand those limits through the use of theories, computers, and collaboration. However, those also have limits. But by understanding our limits we may learn to exceed them by gaining additional, perhaps orthogonal, insights (Pies, 2016; Wallis, 2020d). In this section, we will identify a few directions for future research and practice.

Generally, scholars interested in the various methods of mapping (e.g., concept maps, mind maps, causal maps) should strive to develop quality standards for their respective methods (cf. Wexler, 2001) so users may differentiate between high- and low-quality maps within each method. This, in turn, will support comparisons and improvements between methods. It may be, for example, that a high-quality concept map (with descriptive, rather than causal, connections between the concepts) may prove to be more useful to planners than a low-quality causal map (which includes only causal connections). These kinds of efforts will support continual improvement of all methods and the advancement of the field.

Importantly, for synthesizing theories, this paper suggests that we should first strive to identify concepts at each level of emergence, then, link concepts into theories according to their levels of emergence, rather than try to force-fit concepts between levels.

The existence of levels suggests that it may be worthwhile to measure the distance between levels according to one or more ways suggested here (e.g., emergence, transformation, time). Such a measure may be useful in developing a periodic table of theories (Feuer, 1995; Wallis, 2014c) to further support improvements in theory and practice. The most basic approach would be to measure or quantify the levels between concepts within theories; this may be considered an IPAx (experimental technique within the larger family of Integrative Propositional Analysis methods) approach based on system dynamics.

For example, in Figure 2, C may be seen as being four causal steps removed from D, suggesting some measure of structure for the theory overall. In contrast, F-4 is only two causal steps removed from several other concepts, suggesting higher structural cohesion. However, F-3 and F-4 have no causal connections between them, suggesting a lack of structural closeness. In greater contrast, in Ohm’s Law (Figure 6) each concept is only one causal step away from each of the other two concepts.

We may anticipate that as theories evolve from lesser to greater usefulness that there will be fewer steps between the concepts (which implies also that a greater percentage of the concepts will be connected via causal pathways). Such a measure may serve as an indicator of the completeness of the theory and so guide scholars toward the development of better theory and guide practitioners and professors in the choice of more useful theories. Similar approaches may be developed to evaluate the range of vertical levels of emergence contained in the theory. And, both may be used someday to develop a periodic table of theories or perhaps some algebra of emergence.
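The measure suggested above can be prototyped in a few lines. This Python sketch, offered only as an illustration of an IPAx-style idea rather than an established metric, counts causal steps along arrow direction using breadth-first search, then reports the mean number of steps between connected concept pairs and the share of ordered concept pairs that are connected at all. Reading Ohm's Law (Figure 6) as every quantity linked to the other two is our assumption; the chain and the function name `step_profile` are hypothetical.

```python
from collections import deque
from itertools import permutations

def step_profile(edges):
    """Mean causal steps between connected concept pairs, and share of pairs connected."""
    graph = {}
    for cause, effect in edges:
        graph.setdefault(cause, set()).add(effect)
        graph.setdefault(effect, set())

    distances, pair_count = [], 0
    for source in graph:
        # Breadth-first search from `source`, following arrow direction.
        dist = {source: 0}
        queue = deque([source])
        while queue:
            current = queue.popleft()
            for nxt in graph[current]:
                if nxt not in dist:
                    dist[nxt] = dist[current] + 1
                    queue.append(nxt)
        for target in graph:
            if target != source:
                pair_count += 1
                if target in dist:
                    distances.append(dist[target])

    return {
        "mean_steps": sum(distances) / len(distances) if distances else None,
        "connected_share": len(distances) / pair_count if pair_count else None,
    }

ohm_edges = list(permutations(["E", "I", "R"], 2))
chain_edges = [("A", "B"), ("B", "C"), ("C", "D")]
print(step_profile(ohm_edges))    # {'mean_steps': 1.0, 'connected_share': 1.0}
print(step_profile(chain_edges))  # mean_steps ≈ 1.67, connected_share 0.5
```

A more useful theory, in the sense described above, would move toward fewer mean steps and a connected share closer to 1.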

Another important advance to be considered is the automation of these processes for evaluating and advancing theory. While current artificial intelligence technology can support the coding of texts, it has not yet reached the level where it can replace the need for human sense-making. If peer-reviewed papers could be analyzed by artificial intelligence, their propositions clearly delineated, and the results then run through the processes of IPA and IPAx, we may advance the social/behavioral sciences at an astonishingly rapid pace, with attendant benefits for humanity.

This structural view also hints at a new approach for finding and/or managing “unknown unknowns” (cf. Stefano, Camillo, & Riccardo, 2014), because, practically speaking, something that is unknowable to one person may be known to another. So, by looking at the systemic structure of each level, and determining the emergence of each level, we may see where something is “missing” from the diagram (and at what level of emergence it is missing, and so where to look to find it). Thus the practice of gap analysis (noted in the sections above) may be applied to evaluate and understand what is missing between ontological levels of emergence, in much the same way that it is now applied to understand what is missing (and so to suggest opportunities for research) between concepts that sit within the same ontological level but are not connected by causal linkages.
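
Both kinds of gap described here can be located mechanically once each concept is tagged with a level. The Python sketch below is one possible reading, not a published IPA procedure. The function names, the level numbers, and the concepts “habitat” and “ecosystem” are hypothetical. It flags same-level pairs with no causal path in either direction, and adjacent levels with no emergent link between them.

```python
from itertools import combinations

def reachable(graph, start):
    """Return every concept reachable from start by following arrows."""
    seen, stack = set(), [start]
    while stack:
        node = stack.pop()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def find_gaps(levels, causal, emergent):
    """Return (same-level concept pairs with no causal path in either
    direction, adjacent level pairs with no emergent link between them)."""
    graph = {}
    for cause, effect in causal:
        if levels[cause] == levels[effect]:  # within-level arrows only
            graph.setdefault(cause, []).append(effect)
    reach = {concept: reachable(graph, concept) for concept in levels}
    unlinked = [
        (a, b)
        for a, b in combinations(sorted(levels), 2)
        if levels[a] == levels[b] and b not in reach[a] and a not in reach[b]
    ]
    linked = {(levels[sub], levels[emg]) for sub, emg in emergent}
    ordered = sorted(set(levels.values()))
    missing = [pair for pair in zip(ordered, ordered[1:]) if pair not in linked]
    return unlinked, missing

# Hypothetical map: level numbers and concept names are illustrative only.
levels = {"cats": 1, "mice": 1, "habitat": 1, "coevolution": 2, "ecosystem": 3}
causal = [("cats", "mice")]
emergent = [("mice", "coevolution")]  # (submergent, emergent)

unlinked, missing = find_gaps(levels, causal, emergent)
print(unlinked)  # [('cats', 'habitat'), ('habitat', 'mice')]
print(missing)   # [(2, 3)]
```

Here the output points to two within-level research opportunities (how “habitat” relates causally to cats and mice) and one between-level gap (nothing yet explains how level 3 emerges from level 2).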

Future investigations may suggest changes to our understanding of conceptual sub-structures. For example, emergence may be thought of as a sixth “logic structure” in addition to the existing five (atomistic, linear, branching, concatenated, and circular) (Wallis, 2016b). A seventh structure may also be identified (set, in some sense, between atomistic and linear), in which multiple concepts exist but are connected only by non-causal arrows.

A “static” theory (where a “snapshot” of a theory is captured on a page) may be combined with “dynamic” theories of action (e.g., action research), even though the dynamic theory may also be a snapshot on a page. This may result in a world-bridging metatheory in which iterations of intertwined theories and their related activities are developed. Those metatheories may pave the way for a much deeper level of understanding, where iterations of activities change the theoretical “rules” of iterations.

Unaddressed here, but worthy of additional research, is the idea of downward causation (cf. Emmeche, Køppe, & Stjernfelt, 2000). What effects do emergent properties have on submergent properties? Might the emergent concepts affect the submergent concepts as a whole system? Individual components? The causal connections between them? Much remains to be explored.

Returning our focus to causal closure, Kineman (2012), in discussing Rosen’s perspectives, notes that a key question relates to what might be considered inside, compared to what is outside, the system. With a physical structure, it is relatively easy to decide what is inside or outside a building. With the fragmented nature of our conceptual ecology, however, the difference is not always so clear (for example, the “fuzzy” conceptual systems in the above typology). Much as a pile of boards does not have an inside or outside in the way that a house does, we can see what is in or out of a conceptual system only when it has a high level of structural closure. As such, we may use IPA (cf. Wallis & Wright, 2019) to measure “how structured,” and therefore “how closed,” a conceptual system is.

For example, in Figure 2, the nested map has an IPA Systemicity (a measure of causal logic structure) of approximately 0.29 (two concatenated concepts divided by seven total concepts), so it may be said to be about 29% closed (or, conversely, about 71% open). The high-level map is 50% closed, while Figure 6 is 100% closed. The goal here is not to close the conversation; rather, it is to move the conversation forward by providing new tools and insights.
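
The Systemicity arithmetic can be sketched in a few lines. This assumes the IPA convention, as we understand it, that a concept is “concatenated” when two or more causal arrows point into it, and that Systemicity is the number of concatenated concepts divided by the total number of concepts. The function name `systemicity` is hypothetical, as is the seven-concept map (it is not a transcription of Figure 2). Ohm’s Law is again encoded with each concept receiving arrows from the other two.

```python
def systemicity(concepts, edges):
    """Share of concepts that are concatenated, i.e. that have two or more
    distinct causal arrows pointing into them (assumed IPA convention)."""
    incoming = {concept: 0 for concept in concepts}
    for cause, effect in set(edges):  # set() ignores duplicated arrows
        incoming[effect] += 1
    concatenated = sum(1 for n in incoming.values() if n >= 2)
    return concatenated / len(concepts)

# Ohm's Law (assumed encoding): every concept has two incoming arrows.
ohm = [("V", "I"), ("I", "V"), ("R", "I"), ("I", "R"), ("V", "R"), ("R", "V")]

# Hypothetical seven-concept map in which only C and F are concatenated.
seven = [("A", "C"), ("B", "C"), ("D", "F"), ("E", "F"), ("C", "G")]

print(systemicity(["V", "I", "R"], ohm))              # 1.0
print(round(systemicity(list("ABCDEFG"), seven), 2))  # 0.29 (that is, 2/7)
```

Read as closure, the first map is fully closed, while the second is about 29% closed and 71% open.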

Measuring closure, at least in the realm of conceptual systems, provides a new technique for pushing our thinking in new and more productive directions when addressing such deep philosophical issues as conceptual closure and the mind-body problem, especially as moving toward closure on one dimension pushes us toward emergence on another. It is not sufficient to claim that the mind emerges from the body; such a presentation is too sparse to be of much use. Instead, enough concepts and causal connections at the physical level must be provided to justify a claim of emergence. In short, this approach will help us to understand the role of emergence in conceptual systems as a path to developing better representations of our physical systems.

This is also a step toward (and perhaps a resolution of) the micro-macro problem (Alexander et al., 1987). That problem is presented in the Sorites paradox, or the paradox of the heap. From one perspective, we may ask, “If you have a heap of sand, and remove one grain of sand at a time, when does the heap stop being a heap?” Or, we may ask, “How many individual grains of rice are required in order to have a heap of rice?” While there are many ways to parse the answer from a component perspective, another way to think of it is as a problem of understanding emergence, or emergent dimensional properties. To say it another way, grains and heaps are on different ontological levels. They belong to different universes and so cannot be measured with the same yardstick. One might measure the number of grains by counting them, or the number of heaps by counting them; but heaps may also be measured according to their height and the slope of their sides (which is determined in part by the material of which the heap is made). Otherwise, one might just as well ask, “How many two-dimensional squares can you fit into a three-dimensional box?” Such a question is meaningless (and, worse, useless) unless you understand the three-dimensional construct as an emergent property of two-dimensional constructs interacting or connected at right angles.

Some differences may be separated by distance, while others may be separated by time in a complex, multilayered way (Tateo & Valsiner, 2015). Awareness of a “time horizon” for the connectedness of systems seems to be important as we attempt to address both simple problems (e.g., the operation of a thermostat) and more complex problems (e.g., global social evolution, advances in technology, and such). Some theories, particularly statistical maps, seem to suggest that causal change occurs instantaneously. That may seem to be the case for some theories/laws of physics; however, at the human/social scale very little (if anything) happens that quickly! Other theories, particularly some computer models, have explicitly built-in time scales to show how much time must pass between an action and a result. Given that some unexpected outcomes occur within moments while others emerge over centuries, the study of time differences does not merely “call for more study”; it practically screams for it.

It seems difficult to see the trees (as a submergent property) and the forest (as an emergent property) at the same time. Human individuals have difficulty comprehending the world more than one or two levels removed from their immediate perspectives. From a perspectival view, the CEO has little understanding of the daily knowledge required and deployed by the line workers. The voter has little understanding of candidates for public office. This has profound implications for developing a just society (Wallis & Valentinov, 2016b). The present paper suggests that there are significant implications for working with maps of different stakeholder groups. For example, using a map made in the boardroom may create confusion if it is used as a reference for communicating with a supervisor working in the boiler room. When each level’s map is more (someday, completely) systemic, so that each level is rigorously understood as emerging from the other (not only in some fuzzy metaphorical sense, but actually, objectively verified as such), we will be able to communicate more effectively between levels of human organizations. The implications raised here might be explored in relation to levels of emergence within and between social/organizational systems. Imagine, for example, an organizational chart based on levels of emergence, rather than on a hierarchy of power and (supposed) control. A challenging notion!

Scholars have often addressed, developed, and presented theories in the form of text. That form is sometimes confusing and may support the practice of “cherry picking” (Wallis, 2016b), leading to moral conflicts as scholars argue over small pieces of the puzzle pulled from multiple larger texts. We must be careful not to confuse the piece with the puzzle. Instead, our field should purposefully move beyond the postmodern perspective of generating an ever-increasing number of concepts and theories, and move instead toward synthesizing those theories. If we are to bring unity to the many disciplines and fields of science, we may want to begin with our own.

The above summaries, contributions, reflections, insights, and conversations suggest a scale of relative usefulness for conceptual systems and the diagrammatic maps that represent them. The lowest level is a set of concepts that are poorly understood ontologically. There would be little or no empirical connection or “correspondence” between each of the concepts and something that is measurable or measured in the physical world. For example, one might say that there is an “essence” of a cat. Without a measure, “essence” is at the lowest level of understanding or practical usefulness. The second level, slightly more useful, is one where there are measurable concepts with few or no connections between them; for example, a list of terms (e.g., cat, mouse). The third level would be a set of concepts with conceptual connections between them; for example, “cats chase mice.” Here, “chase” is the conceptual connection between the two actors. Fourth are theories with measurable concepts having causal connections between them; for example, “Having more cats will cause there to be fewer mice.” At the fifth level, we have concepts at differing ontological levels (with some causal connections between concepts at the same level). The sixth, and perhaps highest, level comprises those conceptual systems that include measurable concepts, causal connections, and emergent connections to concepts of differing ontological levels; for example, “The interaction between cats and mice will lead to their co-evolution.” Here, co-evolution is the emergent property.
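
Read as a cumulative checklist, the six-level scale can be written as a short decision procedure. This is a sketch under an assumption the text implies but does not state outright: each level presupposes the features of the levels below it. The `MapFeatures` fields and the function `usefulness_level` are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass

@dataclass
class MapFeatures:
    """Hypothetical yes/no description of a conceptual system."""
    measurable_concepts: bool   # concepts correspond to something measurable
    any_connections: bool       # any links at all (conceptual or causal)
    causal_connections: bool    # at least one causal arrow
    multiple_levels: bool       # concepts at differing ontological levels
    emergent_connections: bool  # explicit links of emergence between levels

def usefulness_level(m: MapFeatures) -> int:
    """Place a map on the six-level scale, assuming the levels are cumulative."""
    if not m.measurable_concepts:
        return 1  # e.g., an unmeasured "essence" of a cat
    if not m.any_connections:
        return 2  # e.g., a list of terms: cat, mouse
    if not m.causal_connections:
        return 3  # e.g., "cats chase mice"
    if not m.multiple_levels:
        return 4  # e.g., more cats cause fewer mice
    if not m.emergent_connections:
        return 5  # differing levels, causal links within a level
    return 6      # e.g., cat-mouse interaction leads to co-evolution

print(usefulness_level(MapFeatures(True, True, True, False, False)))  # 4
```

A map that names several ontological levels but never links them through emergence would stop at level 5 under this reading.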

From a structural perspective (that is, for developing theories of improved structure for improved usefulness in practical application), there now exists a range of techniques within the broader structural methodology. Within that methodology, Integrative Propositional Analysis (IPA) may be used to evaluate the Complexity (that is, “simple complexity,” complicatedness, or the number of concepts within the theory) and Systemicity (a measure of systemic structure) of theories (Wallis, 2016a), and to integrate/synthesize theories within and between disciplines (Wallis, 2014b, 2020c) with objectivity and rigor.

Theoretical models may also be evaluated to improve the level of relative abstraction between concepts (Wallis, 2014a) and to identify the core and belt of theories (Wallis, 2014c), along with the sub-structures of concepts, which may be used to show which parts of the theory may be more useful in application and which require more research (Wallis, 2016b). Another technique is to identify and increase the number of loops within each theory and to increase the percentage of concepts included in those loops (Wallis, 2019). Additionally, concepts within conceptual systems should be evaluated and revised to improve the orthogonality between them (Wallis, 2020d). The present paper adds to this suite of tools the idea that we may evaluate, separate, and measure the levels between causally and emergently connected concepts to better understand and advance theory.
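
The loop technique needs a way to tell which concepts sit on at least one causal loop. A concept is on a loop if following causal arrows can bring you back to it. The sketch below computes only the percentage of concepts included in loops; counting distinct loops would need a separate cycle-enumeration step. The function names and the cats/mice/food/weather map are hypothetical illustrations, not drawn from Wallis (2019).

```python
def concepts_in_loops(concepts, edges):
    """Return the set of concepts that can reach themselves again by
    following causal arrows, i.e. that lie on at least one causal loop."""
    graph = {}
    for cause, effect in edges:
        graph.setdefault(cause, []).append(effect)
    in_loop = set()
    for start in concepts:
        stack, seen = list(graph.get(start, [])), set()
        while stack:  # depth-first search from the start concept's effects
            node = stack.pop()
            if node == start:
                in_loop.add(start)
                break
            if node not in seen:
                seen.add(node)
                stack.extend(graph.get(node, []))
    return in_loop

def loop_share(concepts, edges):
    """Percentage of concepts included in at least one causal loop."""
    return 100 * len(concepts_in_loops(concepts, edges)) / len(concepts)

# Hypothetical map: cats and mice form a loop; food and weather feed into it.
concepts = ["cats", "mice", "food", "weather"]
edges = [("cats", "mice"), ("mice", "cats"), ("food", "mice"), ("weather", "food")]

print(sorted(concepts_in_loops(concepts, edges)))  # ['cats', 'mice']
print(loop_share(concepts, edges))                 # 50.0
```

Adding an arrow back from “mice” to “food”, for instance, would draw food into a loop and raise the share to 75%.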

It is worth mentioning that, as each technique is applied, it may change a theory’s rating/score under one or more of the other techniques. Therefore, scholars should cycle between these techniques, while also improving theory through the other two (non-structural) dimensions of knowledge: the quantity and quality of the supporting data, and the relevance or meaning of the theory to its users (Wright & Wallis, 2019).

Conclusion

In the world of physical (including social) systems, it is easy to apply labels and to claim that we have identified important levels of difference. However, it is sometimes difficult to see the value of those levels and to adjudicate between conflicting claims (e.g., the benefits of flat vs. hierarchical organizational structures) because of our inescapable biases and because we have not identified immutable “laws” of the social world. The conceptual world offers an abstract snapshot, frozen in time and governed by the fewest possible rules (which are themselves based on well-founded assumptions of systems thinking), as a way to rethink and so better understand our physical world.

Generally, by using the above structural techniques to understand, evaluate, synthesize, and improve conceptual systems, scholars may make more rapid progress in developing theories that are more useful in practical application. Such progress is necessary for overcoming our individual and collective ignorance (Wallis & Valentinov, 2016a) and developing more sustainable theories (Wallis & Valentinov, 2017) that are more easily modeled for experimentation and insight (Wallis & Johnson, 2018) for a more just (Wallis & Valentinov, 2016b) and sustainable world within and beyond our organizations (Wallis, 2020b).

Understanding causality, emergence, and perspectives through diagrams of theory provides benefits to those working at the highest level of human aspiration. Consider, for example, the idea that “Liberty consists of being able to do anything that does not harm others: thus, the exercise of the natural rights of every man or woman has no bounds other than those that guarantee other members of society the enjoyment of these same rights” (The Declaration of the Rights of Man and of the Citizen of 1789, cited in Fink, 2019). However, even well-intentioned individuals may harm others out of ignorance. That ignorance is a lack of knowledge and a function of the levels of that knowledge. That is to say, if one’s theory has gaps (levels where something is missing), it may be suggested that the person should strive to develop a better theory to inform their action, lest they cause unintentional harm instead of optimizing their ability to generate sustainable and purposeful good.