Chapter 6: The Structure of Theory and the Structure of Scientific Revolutions—What Constitutes an Advance in Theory?

Wallis, S. E. (2010). The structure of theory and the structure of scientific revolutions: What constitutes an advance in theory? In S. E. Wallis (Ed.), Cybernetics and systems theory in management: Views, tools, and advancements (pp. 151-174). Hershey, PA: IGI Global.

Abstract

From a Kuhnian perspective, a paradigmatic revolution in management science will significantly improve our understanding of the business world and show practitioners (including managers and consultants) how to become much more effective. Without an objective measure of revolution, however, the door is open for spurious claims of revolutionary advance. Such claims cause confusion among scholars and practitioners and reduce the legitimacy of university management programs.

Metatheoretical methods, based on insights from systems theory, provide new tools for analyzing the structure of theory. Propositional analysis is one such method that may be applied to objectively quantify the formal robustness of management theory. In this chapter, I use propositional analysis to analyze different versions of a theory as it evolves across 1,500 years of history. This analysis shows how the increasing robustness of theory anticipates the arrival of revolution and suggests an innovative and effective way for scholars and practitioners to develop and evaluate theories of management.

Introduction

As scholars, we seek to improve our understanding of management practices. An important part of this process is how we advance our theories. While an advance in understanding might be understood as relating to individual perception, advances in theory relate to the development of formal structures that are communicable, testable, and usable across our discipline. The question of what actually constitutes an advance in theory is still open, and new answers to that question are only now emerging. For example, it has been claimed that a theory of greater complexity should be considered more advanced (Ross & Glock-Grueneich, 2008). Another approach claims that improved theories are those that combine multiple theoretical lenses (Edwards & Volkmann, 2008). Still another approach suggests that theories of greater structure may be considered more advanced (Wallis, 2008b).

For scholars outside this growing metatheoretical conversation, the standard way to judge whether a theory has advanced is to determine whether it works in practice. However, each theorist seems to claim that his or her theory is best, so this is not a very useful measure. Investigating the Faust-Meehl Strong Hypothesis in cliometric metatheoretical investigations, Meehl observes that many authors claim their theories are good because they are parsimonious. That claim, Meehl (2002: 345) argues, is misused and represents a weak argument for a theory's success.

Popper (2002) suggests that the best theories are those that are falsifiable and that withstand rigorous attempts at falsification. Yet this level of testing seems to represent too high a hurdle for social scientists (Wallis, 2008e). Few theorists even attempt to falsify their own theories or encourage others to do so. Some authors, in claiming that they have developed an advanced theory, instead invoke the spirit of Thomas Kuhn and his description and discussion of paradigmatic revolution.

Drawing on centuries of hindsight, Kuhn (1970) developed the idea of scientific paradigms, each of which includes laws, theories, applications, and instrumentation that combine to support “coherent traditions of scientific research” (Kuhn, 1970: 10). A paradigmatic revolution is said to occur when the traditions of a science change in significant ways. One example is the move from the Ptolemaic view of the solar system (in which the Earth is at the center, surrounded by nested crystal spheres in which the stars and planets are embedded) to the Copernican view, in which the sun is at the center. Revolutions also bring major improvements in the effectiveness of practitioners. Modern physics makes communication satellites possible, while under the Ptolemaic paradigm no such achievement would have been possible.

Some authors in the field of management claim that their theories are not only effective and useful, but have achieved the status of paradigmatic revolution, ushering in a new age of management presumably as great as the shift in thinking from Ptolemy to Copernicus. For example, after Ishikawa developed Total Quality Management (TQM), a Kuhnian revolution was claimed for it: “All of these characteristics and underlying philosophies point to fundamental changes in the rules of business--a paradigm shift” (Amsden, Ferratt, & Amsden, 1996). While some authors claim revolution, others lend legitimacy to such claims. For example, Clarke & Clegg (2000: 45) refer to a proliferation of paradigms and describe over twenty publications that claim significant paradigmatic changes. They closely investigate some claims of paradigmatic revolution, including the “Transition From Industrial To Information Age Organization.” Other authors are content to strongly imply a revolution, as in the call for a shift toward more spiritual management practices (Steingard, 2005). Still others make no such claims, but explicitly seek revolution in their field (e.g., Stapleton & Murphy, 2003).

The nature of these claims suggests that management science, like the broader social sciences, lacks a shared understanding of what constitutes a Kuhnian revolution, or even of the advance in theory needed for such a revolution. This lack of advance is reflected in a field that academics disparage as fragmented (Donaldson, 1995) and whose theories hold little interest for practitioners (Pfeffer, 2007). In short, these “paradigm wars” cost the field legitimacy in the eyes of philosophers and practitioners (McKelvey, 2002).

The responsibility for these spurious claims may rest on Kuhn’s shoulders. While he wrote convincingly, his focus “leaves largely intact the mystery of how science works” (Nickles, 2009). In a sense, Kuhn described the fact that houses of theory were built, but not the method of their construction. Kuhn’s approach missed the mark in two important and closely related ways. The first was his focus on empirical data as a tool for advancing revolution; we briefly explore that focus in this section. The second is that, in looking at data, Kuhn missed the opportunity to focus on theory; we investigate (and remedy) that omission in the remainder of this chapter.

Regarding the first, Kuhn (1970) emphasized that collecting facts is critical to the advancement of science. For example, he suggests that Coulomb’s success in developing a revolutionary theory of electrostatic attraction (EA) depended on the construction of a special apparatus to measure the force between electric charges (Kuhn, 1970: 28). Kuhn provides this kind of specific example for the development of empirical data, but does not closely describe how scientists used that data to develop their theories. This pursuit of the empirical is exemplified by Popper (2002: 113), who suggests that scientists should begin with relatively arbitrary propositions and then move to the more serious work of deductive testing and falsification. In his view, the development of theory seems relegated to secondary status, while objective analysis reigns supreme. This empirical approach has colored the social sciences from the outset, and for good reason.

Social scientists of the early nineteenth century might have felt an appreciable envy of their counterparts in physics, who were then reveling in the newfound success of their science. It was as if social scientists had looked up one day to see their counterparts living in comfortable homes of brick, safe behind solid walls of useful theory, while the social scientists languished outside in the cold. Those early social theorists recognized the benefit of having solid walls, but were unsure about the process of building a house of theory. It must have seemed to them that the house of physics was built from factual bricks of empirical analysis. Comte, for example, is said to have developed theories using a positivist, or empirical, approach (Ritzer, 2001). Yet, in the words of Poincaré, “…a collection of facts is no more a science than a heap of stones is a house” (Bartlett, 1992). When social scientists adopted that empirical approach, the results were disappointing: instead of solid walls, they had only piles of bricks.

By the middle of the twentieth century, it was becoming clear that social theory was not very useful in practice (Appelbaum, 1970; Boudon, 1986). As a result, three general remedies emerged. One remedy was for scientists to focus on smaller-scale systems (Lachmann, 1991: 285). This approach led to the development of organizational studies and management science, as found in the writings of Lewin, McGregor, and others (Weisbord, 1987). Another remedy focused on investigations that were essentially a-theoretical. These “epistemologies of practice” (Schön, 1991) emphasized investigation, reflection, and action instead of the creation of formal theories (Burrell, 1997; Shotter, 2004). Finally, the failure of a social science based on empirical investigation prompted calls for still more empirical investigation, calls that continue today (e.g., Argyris, 2005).

Unfortunately, the results generated by these alternatives do not seem to be any more useful than their predecessors. For example, despite the popularity of these approaches, studies have shown that TQM fails at least 70 percent of the time (MacIntosh & MacLean, 1999), organization development culture change efforts seem to fail at about the same rate (Smith, 2003), and Business Process Reengineering (BPR) should not be considered a viable approach (Dekkers, 2008). Other authors echo this concern. Ghoshal (2005), for instance, suggests that management theory as taught in MBA programs contributed to serious failures such as the Enron collapse, a claim that raises concerns about the viability of management science in academia (Shareef, 2007). The essential idea, that social scientists could engage in empirical observation and use the resulting data to create useful theories, appears to have been flawed.

Kuhn reported that houses of theory were built, and that they were built from empirical bricks, but he did not explain the process by which the bricks were assembled. If we are to understand how to build solid houses of theory, our focus should be directed toward theory itself. Only by looking between the bricks can we learn how they are put together, and only by investigating how houses of theory are assembled, whatever empirical building blocks are used, can we learn how to advance management theory.

In this chapter I investigate one method for objectively measuring theory to ascertain whether it may serve as a path for the advancement of management theory. In a metaphorical sense, I will identify the previously unknown techniques of the bricklayers responsible for the well-built house of physics and, from that perspective, suggest how management scientists might build solid houses of useful theory. In this, we will seek to answer Kuhn’s question, “Why should the enterprise sketched above move steadily ahead in ways that, say, art, political theory, or philosophy does not?” (Kuhn, 1970: 160). Several perspectives may prove useful in this investigation. The first comes from developments in systems theory and the closely related field of complexity theory, specifically mutual causality: the idea that everything is interrelated. When applied with academic rigor, this idea of interrelatedness allows the structure of theory to be analyzed objectively.

Moving forward, we first review background information that identifies important similarities between theories of physics and theories of the social sciences. These similarities allow us to analyze one form of theory and draw inferences about the other. Next, as part of understanding both forms of theory, we look at the broader conversation on metatheory, focusing on the structure of theory, which can be analyzed objectively in terms of formal robustness. That understanding of theory leads into the main thrust of the chapter, where electrostatic attraction (EA) theory is analyzed in various forms as it evolved over time, moving from antiquity (where the theory merely described curiosities) into modernity (where robust theories supported paradigmatic revolution). By comparing the developmental path of EA theory with the present structure of some theories of management, we can draw inferences about the present state of management theory and suggest ways to accelerate its advancement toward more effective application in practice.

Background

The Common Ground Between Physical and Social Theories

The contrast between the physical sciences and the social sciences may be framed in terms of complexity. In classical physics it is generally considered possible to develop predictive theories or laws to explain and forecast the workings of the natural universe because the physical universe is relatively stable and predictable. In contrast, complexity theory suggests that social systems exhibit inherent complexities, understood as non-linear dynamics (Olson & Eoyang, 2001; Wheatley, 1992). Therefore, in the social world, prediction (and the creation of predictive theory) is considered problematic or impossible. This point of view is not a strong one, however, because a statement such as “It is not possible to create useful theories in social systems” is itself a theory that makes a prediction about a social system. The self-contradictory nature of that position renders it questionable. Therefore, we cannot rule out the possibility of predictive theories within the social sciences (Fiske & Shweder, 1986).

Moreover, the present chapter is not about theory creation, so many of these concerns may be set aside. The goal here is to measure the similarities between existing theories. This distinction is important because the a-theoretical camp has not shown that theories of management cannot exist; indeed, they do. At most, that camp has shown that, within the current paradigm of management science, we lack the ability to create effective theory, which is reasonable given the evidence that we do not. The validity of the analysis in this chapter rests on the similarity between theories of physics and theories of management, leading us to ask: How might the two be compared?

The essential commonality between theories explored in the present chapter is found in the structure of the theory, as seen in the interrelated nature of the propositions it contains. Theories of physics and theories of management both contain interrelated propositions. For example, physicist Georg Ohm developed the proposition (for a simple electrical circuit) that an increase in resistance and an increase in current would result in an increase in voltage. As an example from management theory, Bennet & Bennet (2004) suggest that (in an organization) more individuals and more interactions will result in more uncertainty. This similarity between propositional structures in theories of physics and theories of management provides a basis for comparison. Thus, structural inferences drawn from one set of theories may be applied to the other with some level of reliability.

Of course, theories of physics and theories of management do not hold everything in common; in particular, theories from each discipline describe relationships among different things. For example, Newton’s laws describe the motion of planetary bodies in the context of our solar system, while management theory describes some relationships between humans in the context of the workplace. In this chapter we focus on the theories themselves, not the things described by those theories. Therefore, as argued above, the comparison between the structures of theories should hold. Metaphorically, we are not trying to differentiate between the bricks of physics and the rough stones of management; we are looking at the mortar that can serve equally well to hold them all together.

Further, we are not testing the process by which the theories were created, such as the formal process of grounded theory (Glaser, 2002) or more intuitive methods (Mintzberg, 2005). Neither will we consider the falsifiability (Popper, 2002) of those theories per se, although this analysis does suggest some insights and opportunities for further investigations along those lines. Because this is essentially a metatheoretical investigation, we begin with a brief explication of metatheory.

Metatheory

In previous decades, the term metatheory meant the speculative construction of one theory from two or more theories (Ritzer, 2001). That understanding is being superseded by scholars who use the term to describe investigations into the structure, function, and construction of theory (Wallis, 2008b, 2008e), the more carefully considered construction of overarching theory (Edwards & Volkmann, 2008), and investigations into the validity of theory as related to its complexity (Ross & Glock-Grueneich, 2008), along with the investigations of other authors in the present volume.

The “theory of theory” or metatheoretical conversation draws on insights from Kuhn (1970), Popper (2002), and Ritzer (2001), as well as methodologies from Stinchcombe (1987), Dubin (1978), and Kaplan (1964). More recently, notable scholars such as Weick (2005), Van de Ven (2007), Starbuck (2003) and others have summarized our present understanding of theory development and called for a new look at theory. The goal of this conversation is to develop a better understanding of theory—including how theory is created, structured, tested, and applied—in order to engender better theories and support the development of improved applications.

Theory may be understood as “an ordered set of assertions about a generic behavior or structure assumed to hold throughout a significantly broad range of specific instances” (Southerland, 1975: 9). Theory is of key importance to practitioners because “practice is never theory-free” (Morgan, 1996: 377), and to scholars because “there are no facts independent of our theories” (Skinner, 1985: 10; emphasis in original). While Burrell (1997) suggests that theory has failed, others call for better theory (e.g., Sutton & Staw, 1995). This investigation falls into the latter camp. The topic is of critical importance to our field because “What constitutes good, useful, or worthy theory in our field remains up in the air and cannot be resolved through empirical validation alone” (Van Maanen, Sørensen, & Mitchell, 2007: 1153). This echoes Nonaka’s (2005) argument that the creation of theory requires more than the traditional, positivist approach of seeking objective facts.

While there has been a great deal of conversation around the creation of theory (e.g., K. G. Smith & Hitt, 2005) and the testing of theory (Lewis & Grimes, 1999; Popper, 2002), these conversations have not shown efficacy in advancing theory. Meanwhile, some academicians seem to treat the production of more theory as a reasonable measure of success. For example, one web page notes that an accomplished professor emeritus “…is the sole author of six books. He is author or co-author of over 100 papers” (GMU, 2009). While these are certainly impressive numbers, the page makes no mention of the value of the work or its application in the world; for all the reader can tell, the work simply sits on a shelf. Another popular method for determining accomplishment is counting citations. These methods (and others) might indicate some sort of popularity (Wallis, 2008a), but do not seem to indicate any way to advance a theory. Indeed, the author of a recent HERA study admits that there does not seem to be any reliable method for evaluation (Dolan, 2007). Another approach is called for.

Structure of Theory

Kaplan (1964: 259-262) suggests six forms of structure for theoretical models. His forms of structure include a literary style (with an unfolding plot), academic style (exhibiting some attempt to be precise), eristic style (specific propositions and proofs), symbolic style (mathematical), postulational style (chains of logical derivation), and the formal style. The formal style avoids “reference to any specific empirical content” to focus instead on “the pattern of relationships.”

Dubin’s (1978) approach is similar, in that he suggests that theories of the highest “efficiency” are those that express “the rate of change in the values of one variable and the associated rate of change in the values of another variable” (Dubin, 1978: 110). Such relationships might be understood as structural in the sense that they represent causal and co-causal relationships between events. Because those relationships are well explained, we might expect such a theory to be more useful in practice, allowing the practitioner to use it to understand or predict those changes. In short, we might expect theories with a higher level of structure to be more effective in practice.

Because theory indicates changes between multiple interrelated events, the structure of theory might be understood as a system. Briefly, the history of systems theory is well summarized by Hammond (2003), while its breadth is best seen from the systemic perspective of Daneke (1999). The application of systems theory to management is provided in Stacey, Griffin, & Shaw (2000), while Steier (1991) draws on an understanding of cybernetics to explore reflexive research and social construction. More relevant to the present study, advances in complexity theory, systems theory, and cybernetics suggest that a systemic perspective might provide a useful lens for viewing management theory (e.g., Yolles, 2006).

The systems perspective may be applied to a conceptual system. In this case, the present methodology focuses on the systemic relationships between propositions in a body of theory, measured in terms of “robustness” (Wallis, 2008b). By way of clarification, robustness as used here differs from the more common understanding of the term, in which robustness might mean strength, resistance to change, longevity, or wide distribution. Rather, robustness refers to a specific and objective measure of the relationships between propositions in a theory.

The idea of a robust theory comes from physics and mathematics and represents a theory with complete internal integrity. For example, Ohm’s law of electricity (E=IR) is considered a robust theory because it is amenable to algebraic manipulation: the formula is equally valid when written as I=E/R or R=E/I, which means it can be used equally well to find voltage (E), current (I), or resistance (R). When I undertook to understand the structure of theory, I drew on dimensional analysis, systems theory, Hegelian dialectic, and Nietzsche’s insights into the co-definitional relationships between the dimensions of those dialectics. From these and other ideas I developed the process of Reflexive Dimensional Analysis (Wallis, 2006a, 2006b). That methodology was further refined (Wallis, 2008b) into a method of propositional analysis that could be used to objectively determine the robustness of a theory.
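The algebraic property described above can be made concrete: one law, rearranged, answers three different questions. The following is a minimal Python sketch, assuming ideal numeric values; `solve_ohm` is a hypothetical helper written for this illustration and is not part of the propositional-analysis method itself.

```python
from typing import Dict, Optional


def solve_ohm(e: Optional[float] = None,
              i: Optional[float] = None,
              r: Optional[float] = None) -> Dict[str, float]:
    """Solve E = IR for whichever one of E, I, or R is left as None.

    solve_ohm is a hypothetical helper written for this illustration.
    """
    given = {"e": e, "i": i, "r": r}
    missing = [name for name, value in given.items() if value is None]
    if len(missing) != 1:
        raise ValueError("provide exactly two of e, i, r")
    if e is None:
        e = i * r  # E = IR
    elif i is None:
        i = e / r  # I = E/R
    else:
        r = e / i  # R = E/I
    return {"e": e, "i": i, "r": r}


# The same 12 V, 2 A, 6 ohm circuit is recovered from any two known values.
print(solve_ohm(i=2.0, r=6.0))   # {'e': 12.0, 'i': 2.0, 'r': 6.0}
print(solve_ohm(e=12.0, r=6.0))  # {'e': 12.0, 'i': 2.0, 'r': 6.0}
print(solve_ohm(e=12.0, i=2.0))  # {'e': 12.0, 'i': 2.0, 'r': 6.0}
```

Because each of the three quantities can be computed from the other two, no aspect of the law is left dangling; this is the property the robustness measure later quantifies.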

By way of background, a causal proposition describes a relationship between aspects, where each aspect relates to some observable or conceptual phenomenon. For an abstract example, a proposition might be represented as “A causes B” (or “changes in A cause changes in B”). A co-causal proposition might be “Changes in A and B cause changes in C.” Such co-causal propositions are described as concatenated (Kaplan, 1964; Van de Ven, 2007).
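One way to make this distinction concrete is to record each proposition as a set of causal aspects plus a single resultant aspect. This Python sketch uses a hypothetical `Proposition` class (not drawn from Wallis, 2008b) and treats a proposition as concatenated when it names two or more causes.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Proposition:
    """Hypothetical record: changes in `causes` cause changes in `result`."""
    causes: FrozenSet[str]
    result: str

    @property
    def is_concatenated(self) -> bool:
        # Two or more causes acting together make the proposition co-causal.
        return len(self.causes) >= 2

    def __str__(self) -> str:
        joined = " and ".join(sorted(self.causes))
        return f"Changes in {joined} cause changes in {self.result}"


causal = Proposition(frozenset({"A"}), "B")
co_causal = Proposition(frozenset({"A", "B"}), "C")
print(causal, causal.is_concatenated)        # Changes in A cause changes in B False
print(co_causal, co_causal.is_concatenated)  # Changes in A and B cause changes in C True
```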

Van de Ven suggests that concatenated concepts may be difficult to justify and argues, instead, that the more commonly used chain of logic is the better way to construct a theory. A chain of logic might be understood as explaining how changes in A are caused (or explained) by changes in B, which are in turn caused by changes in C. Conversely, Stinchcombe (1987) suggests that such a chain is less effective because any intermediary terms (B, in this abstract example) are redundant and so do not represent a useful addition to the theory. Further, any such chain must ultimately rest on some unspoken assumption. Extending the abstract example, the chain of steps (A, B, C, etc.) continues until the argument reaches a point where everyone agrees that some foundational claim is “true” (perhaps Z, in this case). However, insights developed from Argyris’ “ladder of inference” suggest that those underlying assumptions are not necessarily reliable guides (Senge, Kleiner, Roberts, Ross, & Smith, 1994: 242-243). In short, relying on unspoken assumptions may lead to folly as easily as to wisdom.
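The point that a chain of logic must bottom out in an unexamined claim can be illustrated by walking such a chain. In this Python sketch, the mapping from each aspect to the one aspect that explains it, and the helper `trace_chain`, are hypothetical. Because each aspect in a pure chain is explained by exactly one other aspect, no aspect in it has two causes, so none would count as concatenated.

```python
from typing import Dict, List


def trace_chain(explained_by: Dict[str, str], start: str) -> List[str]:
    """Follow a chain of logic from `start` until no further explanation exists.

    `explained_by` maps each aspect to the single aspect offered as its
    explanation. The last element returned is the foundational claim on
    which the chain rests; the chain itself never justifies it.
    """
    path = [start]
    while path[-1] in explained_by:
        nxt = explained_by[path[-1]]
        if nxt in path:
            raise ValueError(f"circular chain at {nxt!r}")
        path.append(nxt)
    return path


# Hypothetical chain from the text: A is explained by B, B by C, C by Z.
chain = {"A": "B", "B": "C", "C": "Z"}
path = trace_chain(chain, "A")
print(path)        # ['A', 'B', 'C', 'Z']
print(path[1:-1])  # ['B', 'C']: intermediary terms Stinchcombe (1987) calls redundant
print(path[-1])    # Z: the unspoken foundational assumption
```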

The robustness of a theory may be objectively determined in a straightforward manner (for an in-depth example, see Wallis, 2008b). First, the body of theory is examined to identify all clear propositions. Those propositions are then compared with one another to identify overlaps, and redundant aspects are dropped. Second, the propositions are examined for conceptual relatedness between the aspects they describe. Propositions that are causal in nature link causal aspects to the aspects of the theory that are resultant (each aspect may then be understood as a dimension representing a greater or lesser quantity of some aspect or phenomenon). Resultant aspects that are described by two or more causal aspects are understood to be concatenated and are considered more complex, more complete, and more useful than aspects that are less well structured.
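Steps one and two might be sketched in Python as follows. The function name `find_concatenated`, the `aliases` mapping used to merge overlapping labels, and the choice to pool causes for the same resultant aspect across separate propositions are all assumptions made for illustration. The example proposition paraphrases Bennet & Bennet (2004); the overlapping label "headcount" is hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Set, Tuple

Prop = Tuple[Iterable[str], str]  # (causal aspects, resultant aspect)


def find_concatenated(props: Iterable[Prop],
                      aliases: Optional[Dict[str, str]] = None
                      ) -> Tuple[Set[str], Set[str]]:
    """Return (all aspects, concatenated aspects) for a list of propositions."""
    aliases = aliases or {}

    def canon(name: str) -> str:
        # Step one: map overlapping labels onto a single canonical aspect.
        return aliases.get(name, name)

    causes_of: Dict[str, Set[str]] = defaultdict(set)
    aspects: Set[str] = set()
    for causes, result in props:
        result = canon(result)
        cause_set = {canon(c) for c in causes}
        aspects |= cause_set | {result}
        # Step two: link each resultant aspect to every aspect causal to it.
        causes_of[result] |= cause_set
    concatenated = {a for a, cs in causes_of.items() if len(cs) >= 2}
    return aspects, concatenated


props = [
    ({"individuals", "interactions"}, "uncertainty"),
    ({"headcount"}, "uncertainty"),  # hypothetical restatement of "individuals"
]
aspects, concatenated = find_concatenated(props, aliases={"headcount": "individuals"})
print(sorted(aspects))       # ['individuals', 'interactions', 'uncertainty']
print(sorted(concatenated))  # ['uncertainty']
```

Without the alias, "headcount" would survive as a fourth aspect and inflate the denominator used in step three, which is why redundant aspects are dropped first.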

Third, the number of concatenated aspects in the theory is divided by the total number of aspects in the theory to provide a ratio, a number between zero and one. This ratio is the robustness of the theory and represents the degree to which the theory is structured. A value of zero represents a theory with no robustness, as might be found in a bullet-point list of concepts with no interrelationship between them. A value of one indicates a fully robust theory, such as Ohm’s E=IR. Because of the successful application of robust theories in math and physics, it may be expected that a robust theory in the social sciences can be reliably applied in practice and will be more easily falsifiable in the Popperian sense (Wallis, 2008c). Metaphorically, bricks that are directly mortared to other bricks would be highly robust (as found in a structured wall or home), while bricks that are scattered about would not be robust at all. A pile of bricks would be somewhere in the middle (as a pile might have slightly more structure than scattered bricks, though far less structure than a home).

For an abstract example of determining robustness, consider a theory of five aspects (A, B, C, D, and E), each representing differentiable concepts or phenomena. The causal relationships between these aspects are suggested by two propositions: (1) A causes B; and (2) More C and more D results in more E. Of these, only E is concatenated because there are two aspects of the theory that are causal to E. Therefore, the robustness of this theory is 0.20 (the result of one concatenated aspect divided by five total aspects).
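Step three and the five-aspect example can be checked with a short Python sketch. The `robustness` helper is hypothetical; it counts an aspect as concatenated when the propositions supply two or more distinct causes for it, and it counts every aspect that is either listed or mentioned in a proposition. The bullet-list and Ohm’s law cases reproduce the two endpoints of the scale described above.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def robustness(aspects: Iterable[str],
               props: Iterable[Tuple[Iterable[str], str]]) -> float:
    """Concatenated aspects divided by total aspects (a value from 0 to 1)."""
    all_aspects: Set[str] = set(aspects)
    causes_of: Dict[str, Set[str]] = defaultdict(set)
    for causes, result in props:
        causes_of[result] |= set(causes)
        all_aspects |= set(causes) | {result}
    if not all_aspects:
        raise ValueError("a theory needs at least one aspect")
    concatenated = [a for a in all_aspects if len(causes_of[a]) >= 2]
    return len(concatenated) / len(all_aspects)


# The abstract example: (1) A causes B; (2) more C and more D give more E.
print(robustness("ABCDE", [({"A"}, "B"), ({"C", "D"}, "E")]))  # 0.2

# A bullet-point list of concepts with no relationships between them.
print(robustness(["mission", "vision", "values"], []))  # 0.0

# Ohm's law read three ways: each of E, I, R follows from the other two.
ohm = [({"I", "R"}, "E"), ({"E", "R"}, "I"), ({"E", "I"}, "R")]
print(robustness("EIR", ohm))  # 1.0
```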

This method of propositional analysis allows us to examine a theory and assign a relatively objective measure of that theory’s structure. With this method of measurement in hand, we can apply the yardstick of robustness to theories across time. And, importantly, we can determine if the robustness of the theory is increasing, decreasing, or merely wandering.
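Tracking robustness across successive versions of a theory then reduces to inspecting a sequence of scores. The Python sketch below is one possible reading of “increasing, decreasing, or merely wandering”; the function name, the treatment of a flat sequence as wandering, and the sample scores are hypothetical and are not results reported in this chapter.

```python
from typing import Sequence


def classify_trend(scores: Sequence[float]) -> str:
    """Label robustness scores of successive theory versions, oldest first.

    A sequence that never falls and rises at least once is "increasing";
    one that never rises and falls at least once is "decreasing"; anything
    else (including a flat sequence) is treated here as "wandering".
    """
    if len(scores) < 2:
        raise ValueError("need at least two versions to compare")
    steps = [later - earlier for earlier, later in zip(scores, scores[1:])]
    if all(s >= 0 for s in steps) and any(s > 0 for s in steps):
        return "increasing"
    if all(s <= 0 for s in steps) and any(s < 0 for s in steps):
        return "decreasing"
    return "wandering"


# Hypothetical score sequences, for illustration only.
print(classify_trend([0.0, 0.2, 0.5, 1.0]))  # increasing
print(classify_trend([0.5, 0.2, 0.4]))       # wandering
print(classify_trend([0.4, 0.4, 0.1]))       # decreasing
```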

Forward to Revolution

According to Kuhn, a paradigmatic revolution is a situation where “the older paradigm is replaced in whole or part by an incompatible new one” (Kuhn, 1970: 92). Kuhn also suggests that a scientific revolution occurs when a new paradigm emerges, one that provides a better explanation, answers more questions, and leaves fewer anomalies. Such a revolution in management is expected to bring more effective theories and practices to managers. However, Kuhn’s description of revolution is problematic because it does not describe how much better the explanation must be. Nor does it describe exactly what reduction in anomalies must occur for a paradigmatic change to be considered revolutionary. There is no method of measurement. This issue was highlighted recently when Sheard (2007) framed the conversation as a contrast between superficial and profound revolution and asked, “Who decides what is ‘profound’?” Yet, in his reflections, Sheard shied away from establishing a metric for delineating revolution, stating that revolutions “may be qualitatively sensed, but are not amenable to any ratio of distinction” (Sheard, 2007: 136).

This lack of distinction between superficiality and profundity may lead to spurious and conflicting claims of revolutionary change. Taking an example from social entrepreneurship theory, many authors agree that the act of social entrepreneurship is an important part of that theory (e.g., Austin, Stevenson, & Wei-Skillern, 2006; Bernier & Hafsi, 2007; de Leeuw, 1999; Guo, 2007; Mort, Weerawardena, & Carnegie, 2003). Yet Fowler (2000) suggests that the focus is not so much the act of social entrepreneurship as the social value proposition created by that act. Is this difference between Fowler and others revolutionary? If we follow Kuhn’s example of historical evaluation, it is impossible to know without centuries of perspective, which in turn renders the concept of paradigmatic revolution useless for any conversation around contemporary issues. So scholars and practitioners continue to claim revolutionary improvement without any measure of what that means. In short, claims of revolutionary theory are unsupportable because there is no clear understanding of what constitutes a revolution.

While scholars earn their pay by arguing points such as these, managers are rewarded for effectively applying theories in practice. Academia does not seem to be producing useful tools for today’s managers; indeed, "Management research produced by academics does not fare particularly well in the marketplace of ideas that might be adopted by managers" (Pfeffer, 2007: 1336). Pfeffer goes on to cite the work of Mol & Birkinshaw (forthcoming), who suggest that of the 50 most important innovations in management, none originated in academia. He also cites Davenport & Prusak (2003) as noting that business schools "have not been very effective in the creation of useful business ideas" (emphasis theirs). This does not bode well for the social sciences in general or for business schools in particular.

In short, the current paradigm in the social sciences (in general) and management science (in particular) has not produced anything that managers can reliably apply for great effectiveness, let alone anything that might be considered revolutionary. Meanwhile, business students must wonder about the value of their tuition, and managers must spend their careers without knowing what theory, if any, to apply for successful practice. This leads us to consider a challenging possibility: If we can identify a quantifiable link between management theory and paradigmatic revolution, we may be able to evaluate the usefulness of theory in terms of its potential for revolutionary implementation. That, in turn, would allow us to predict, or even instigate, a revolution in management theory.

A Working Hypothesis

An important part of a systemic perspective is to avoid looking at “things” because an improved understanding may be gained by looking at the relationships between them (Ashby, 2004; Harder, Robertson, & Woodward, 2004). The present chapter follows that suggestion by investigating the co-causal relationships among the aspects within a theory in terms of that theory’s robustness. Instead of looking to our empirical bricks of data, we will focus on the theoretical mortar that binds them together. The present hypothesis is that revolution is enabled by the structure of the theory rather than by some notion of objective data. To investigate this idea, I will revisit Kuhn’s work from a metatheoretical perspective.

Within his descriptions of paradigm revolution, Kuhn provides several examples of revolutionary theorists and their fields of study. Among others, he mentions Coulomb (electrical theory), Newton (mechanical motion), and Einstein (relativity). Each of those scientists developed the theory that carried his field to paradigmatic revolution. The theories developed by these exemplars of scientific revolution are similar in at least one important way: each has a robustness of 1.0 (on a scale of zero to one). For example, Newton’s F=ma has three aspects, each of which is concatenated from the other two (F = ma, m = F/a, and a = F/m). Therefore, the robustness of Newton’s formula is 1.0 (the result of three concatenated aspects divided by three total aspects).
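The ratio used throughout this chapter can be written compactly. In the notation below, which is introduced here only for illustration, A is the set of aspects of a theory and C ⊆ A is the subset of aspects that are concatenated (derived from two or more other aspects); Newton's F=ma serves as the worked case.

```latex
\begin{aligned}
R &= \frac{|C|}{|A|}, \qquad 0 \le R \le 1 \\
A &= \{F, m, a\}: \quad F = ma, \quad m = \frac{F}{a}, \quad a = \frac{F}{m} \\
C &= A \;\Rightarrow\; R = \frac{3}{3} = 1.0
\end{aligned}
```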

Is it only coincidence that all these revolutionary theories have perfect robustness? If so, it seems an odd coincidence, especially given the great differences between some areas of study. For example, who would imagine, a priori, that a theory involving electricity and a theory involving planets would have the same structure? Yet the robustness of both theories is the same (Robustness = 1.0), as is that of many effective theories in physics. Such a relationship between structure and efficacy should not be too surprising because (as noted above) theories with a higher level of structure have long been expected to be more advanced. The problem, in physics and management alike, has been the lack of a standardized method for measuring the structure of a theory. Therefore, instead of seeking steadily higher levels of structure, management theorists have been content to reach a level of logical structure deemed acceptable by the editorial and review process; there has been no incentive to advance beyond that mark.

So, rather than dismiss this relationship out of hand, the following analysis suggests that there is some sort of connection between the structure of a theory and the usefulness of that theory in practice. Simply put, the robustness of a theory may be understood as a key indicator of a scientific revolution. The implications of this insight are significant: if there is an objective approach to identifying revolutionary theory when it emerges, the development of that theory can be accelerated, enabling a revolution in management theory within decades instead of centuries and, importantly, providing the attendant benefits to humanity and to our understanding of our social world.

If the robustness of theory were, as hypothesized, a valid indicator of paradigmatic revolution, we would expect to see the development of a theory from low robustness to high robustness over some length of time. This expectation raises a question: How does the robustness of those theories change over time?

In choosing a body of theory to study, it should be noted that no paradigmatic revolution has yet occurred in management theory. Perhaps this lack is why managers express frustration in their need for usable knowledge (Czarniawska, 2001) of the sort that useful theories would provide. Because of this lack, we cannot draw upon management theory for the present analysis; instead, we must investigate the history of some other body of theory. Because Kuhn used electrical theory as an example of a revolutionary paradigm, that body of theory appears to offer a reasonable subject of study. And, as noted above, because the analysis is limited to the structure of the theory, the inferences drawn from EA (electrostatic attraction) theory may be transferred to the structure of management theory. Thus, for this analysis, I will investigate the evolution of EA theory and determine its level of robustness at different stages of its development. Following Kuhn, I will draw my data from “The Development of the Concept of Electric Charge: Electricity from the Greeks to Coulomb” (Roller & Roller, 1954).

Analysis

In the present section, the method of propositional analysis will be applied to differing versions of the theory of electrostatic attraction as found in Roller & Roller, as that theory was understood at different points through history. The present history begins with a revolution in thinking that occurred when ancient Greeks began to explain, rather than simply describe, phenomena they encountered.

Roller & Roller present Plutarch as an example of the thinking of this era. Following their example, this particular analysis includes magnetism because, in ancient times, magnetism and what we now understand as electrostatic attraction were believed to be the same phenomenon.

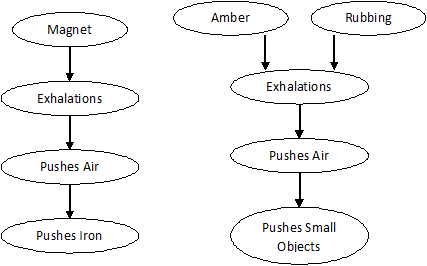

Around the year 100 CE, Plutarch wrote that lodestones (naturally occurring magnets) exhale, thus pushing the air, which in turn pushes objects of iron. Amber behaved the same way, except that it needed rubbing to encourage it to exhale. The exhalations of amber would then push the air, which would affect small objects (such as hair) instead of iron (Roller & Roller, 1954: 3).

Deconstructing Plutarch’s theory into its essential propositions, it may be said that rubbing (amber) creates exhalations, exhalations push air, and air pushes small objects. Similarly, magnets exhale, exhalations push air, and air pushes iron. In this, it may be seen that there are seven aspects of the theory (Rubbing, Amber, Magnet, Air, Iron, Push, Small objects). Magnets are causal to Push, which is causal to the movement of Air; moving Air, in turn, causes Push, which is causal to the movement of Iron. In this sequence, there are four aspects (Magnet, Push, Air, Iron), and each one is the result of only one other aspect; none of them is concatenated. Indeed, Exhalations are synonymous with Push, as both appear to be a general representation of some form of force.

Instead of concatenation, the relationship between these aspects may be understood as linear (Stinchcombe, 1987: 132). For an abstract example, A changes B, which changes C. In such a relationship, Stinchcombe notes, the concept of B is redundant. Therefore, Plutarch’s theory may be seen to contain a redundant term in the concept of air. Redundant terms detract from the robustness of the model; similarly, Occam’s razor suggests the need for parsimony in theory construction. In these older versions of the theory, the linear relationships among multiple aspects are examples of a lack of parsimony and therefore a weakness in the structure of the theory.

In contrast to linear relationships between some aspects of the model, Rubbing and Amber, together, cause Exhalations (Push); that Push is causal to the movement of Air, which is causal to the movement of Small objects. Here, the Push may be understood as a concatenated aspect of the theory because it is caused by a combination of Rubbing and Amber.

Figure 1 Plutarchean Electrostatic Attraction Theory

In determining the level of robustness of this version of theory by propositional analysis, it should be noted there are seven aspects—only one of which is concatenated. The others have simple, linear, causal relationships. Therefore, the robustness of Plutarch’s theory may be set at 0.14 (the result of one concatenated aspect divided by seven total aspects).
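The counting rule applied to Plutarch can be sketched in Python. This is a minimal illustration under stated assumptions, not the author's published procedure: it assumes each proposition can be written as a set of cause aspects leading to one effect aspect, and it counts an aspect as concatenated when at least one proposition derives it from two or more other aspects. The helper names (`prop`, `concatenated_aspects`, `robustness`) are hypothetical, and the encoding of Plutarch's theory (air pushing iron and small objects directly) is one reading of the text.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

# A proposition links a set of cause aspects to a single effect aspect.
Proposition = Tuple[FrozenSet[str], str]


def prop(causes: Iterable[str], effect: str) -> Proposition:
    """Hypothetical helper: build a (causes, effect) proposition."""
    return frozenset(causes), effect


def concatenated_aspects(propositions: Iterable[Proposition]) -> Set[str]:
    """Aspects that some single proposition derives from two or more other aspects."""
    return {effect for causes, effect in propositions if len(causes - {effect}) >= 2}


def robustness(aspects: Set[str], propositions: List[Proposition]) -> float:
    """Concatenated aspects divided by total aspects (scale 0 to 1)."""
    return len(concatenated_aspects(propositions) & aspects) / len(aspects)


# Plutarch (c. 100 CE); Exhalations are treated as a synonym for Push.
plutarch = [
    prop({"Rubbing", "Amber"}, "Push"),  # the only concatenation
    prop({"Magnet"}, "Push"),            # linear
    prop({"Push"}, "Air"),               # linear
    prop({"Air"}, "Iron"),               # linear
    prop({"Air"}, "Small objects"),      # linear
]
# Here every aspect appears in some proposition, so the set can be derived.
aspects = {a for causes, effect in plutarch for a in causes | {effect}}

print(len(aspects), sorted(concatenated_aspects(plutarch)))  # 7 ['Push']
print(round(robustness(aspects, plutarch), 2))              # 0.14
```

Linear links such as Push to Air add aspects without adding concatenations, which is why, under this rule, they pull the ratio down.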

The next clear demarcation of theory surfaces in 1550. Jerome Cardan theorized that the rubbing of amber produced a liquid, and that dry objects (such as chaff) would move toward the amber as they absorbed the liquid (Roller & Roller, 1954: 4-5). Here, there are six aspects (Rubbing, Amber, Liquid, Movement, Absorption, and Dry objects). As with Plutarch’s model, only one is concatenated (Rubbing and Amber together produce Liquid). Therefore, the robustness of Cardan’s theory is 0.17 (the result of one divided by six).

Although Cardan suggests a liquid instead of Plutarch’s exhalations of air, the theories are similar in their structure and level of robustness. The relationship between these theories stands as an example of how theories may appear to change because the terminology has changed. That change in terminology may be accompanied by changes in underlying assumptions, philosophical justifications, or even simple changes in speculation. Yet, looking at the theory through the metatheoretical lens of robustness, it becomes clear that no useful change has occurred at all.

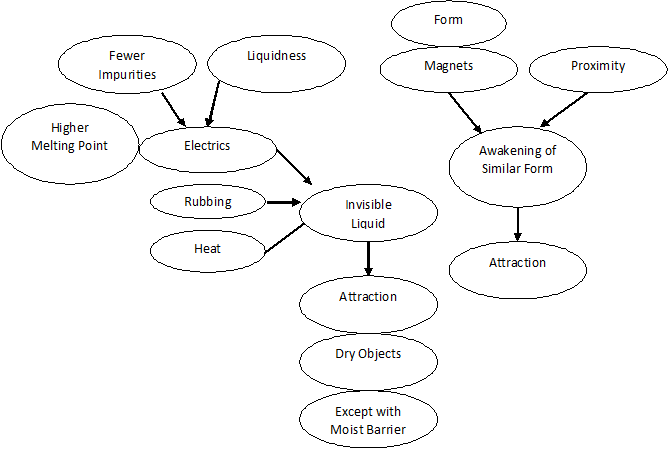

Roller & Roller note that the scientific revolution took hold about this time. More scientists began to investigate electrical phenomena. Those scientists began to develop new insights and a new vocabulary to relate the results of their experiments. In this period, every important experimenter had his own theory (Kuhn, 1970: 13). Continued experimentation led to the discovery that objects besides amber would exhibit what is now called electrostatic attraction when they were rubbed. About 1600, Gilbert called this class of objects “electrics.”

According to Roller & Roller (1954: 10-11), Gilbert’s version of the theory adds heat and suggests that rubbing and heat (but only heat from rubbing), together with electrics, cause the release of an invisible liquid, which then causes the attraction of dry objects. A moist barrier would interfere with that attraction. Additionally, Gilbert held that amber, glass, and gems all belonged to a class of material called “wet,” and so he concluded that wet things were electric things. Yet he found that wet things that softened or melted (such as wax and ice) would not attract. Similarly, electrics with impurities could not be rubbed to develop EA. Therefore, more aspects and more propositions were added to the theory, suggesting that electrics were the result of “wet” things with higher melting points and lower levels of impurities.

Gilbert’s theory of magnetism suggested that each magnet possesses a “form” and that this form would awaken a similar form in particles of iron near the magnet. Indeed, he noted that the nearer the iron was to the magnet, the greater the mutual attraction. Here, attraction may be understood as a synonym for movement.

In total, Gilbert’s theory included 14 aspects (Rubbing, Heat, Electrics (objects that exhibit EA), Impurities, Melting point, Attraction, Liquid, Dry objects, Moist barriers, Magnets, Form, Awakening, Iron, Proximity). Of these, Electrics may be said to be concatenated because they are formed with more Liquidness and fewer Impurities. Similarly, Attraction is concatenated because it is generated by more Electrics, more Rubbing, and more Heat. Additionally, Magnets and greater Proximity result in more Awakening. Therefore, Gilbert’s version of electrical theory has a robustness of 0.21 (the result of three concatenated aspects divided by 14 total aspects).
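Gilbert's count can be checked the same way. In this sketch, which assumes the same counting rule as above (an aspect is concatenated when one proposition derives it from two or more other aspects), the 14 aspects are listed explicitly because most of them take part only in linear links; only the three concatenating propositions named in the text are encoded, and the `prop` and `robustness` helpers are hypothetical.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

Proposition = Tuple[FrozenSet[str], str]


def prop(causes: Iterable[str], effect: str) -> Proposition:
    """Hypothetical helper: build a (causes, effect) proposition."""
    return frozenset(causes), effect


def robustness(aspects: Set[str], propositions: List[Proposition]) -> float:
    # An aspect counts as concatenated when one proposition derives it from
    # two or more *other* aspects; single-cause (linear) links do not count.
    concatenated = {e for causes, e in propositions if len(causes - {e}) >= 2}
    return len(concatenated & aspects) / len(aspects)


# All 14 aspects listed in the text, given explicitly.
gilbert_aspects = {
    "Rubbing", "Heat", "Electrics", "Impurities", "Melting point", "Attraction",
    "Liquid", "Dry objects", "Moist barriers", "Magnets", "Form", "Awakening",
    "Iron", "Proximity",
}
gilbert_concatenations = [
    prop({"Liquid", "Impurities"}, "Electrics"),           # "Liquidness" in the text
    prop({"Electrics", "Rubbing", "Heat"}, "Attraction"),
    prop({"Magnets", "Proximity"}, "Awakening"),
]

print(len(gilbert_aspects))                                           # 14
print(round(robustness(gilbert_aspects, gilbert_concatenations), 2))  # 0.21
```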

Figure 2 Gilbertian version of Electrostatic Attraction Theory

In his time, Gilbert might have claimed that his own theory of attraction explained more than Plutarch’s theory. The moist barrier, for example, was not a part of Plutarch’s theory. Because Gilbert’s theory explains more than previous theories, today’s management theorist might be tempted to claim that it represents a scientific revolution. Yet, Kuhn does not suggest that a revolution occurred until much later. This example suggests how today’s general academic understanding of what constitutes a revolution is unclear, and how that lack of clarity opens the door for false claims of revolutionary advancement.

With continued experimentation, scientists generated more innovative terminology. By the mid-18th century, the “two-fluid theory” had emerged (Roller & Roller, 1954: 47-48); it may be summarized as follows:

- There are two kinds of electric fluids (vitreous & resinous).

- Unelectrified objects contain equal amounts of the two fluids.

- Rubbing an object removes one of the two electric fluids.

- More imbalance results in greater strength of electrification.

- Touching objects will cause fluid to flow from one object to another, and so de-electrify the objects as the levels of electric fluid come into balance.

- Objects with similar fluids repel one another when they are near.

- Objects with differing fluids attract one another when they are near.

In this theory, there are eleven aspects (Vitreous fluid, Resinous fluid, Objects, Rubbing, Balance, Electrification, Repulsion of objects, Attraction of objects, Flow of fluid, Touching, and Nearness). The relationships leading to concatenated aspects may be summarized as:

- Rubbing and Objects decrease Balance, thus increasing Electrification.

- Touching and Electrification and Objects cause Flow which then increases Balance and so decreases Electrification.

- Objects and Nearness and Balance cause Attraction.

- Objects and Nearness and lack of Balance cause Repulsion.

Note that many aspects are described in terms of linear relationships. For example, Flow increases Balance which decreases Electrification. It may be seen that the four aspects of Balance, Flow, Attraction, and Repulsion are concatenated by their relationship to the other aspects. Therefore, the robustness of this theory is 0.36 (the result of four concatenated aspects divided by eleven total aspects).
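The four relationships listed above can be encoded to reproduce this count. The sketch below assumes the same counting rule as before and ignores the direction of influence (increase versus decrease), since the ratio depends only on how many distinct aspects feed each proposition; the Repulsion line stands in for "lack of Balance," and the helper names are hypothetical.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

Proposition = Tuple[FrozenSet[str], str]


def prop(causes: Iterable[str], effect: str) -> Proposition:
    """Hypothetical helper: build a (causes, effect) proposition."""
    return frozenset(causes), effect


def concatenated_aspects(propositions: List[Proposition]) -> Set[str]:
    # Only the number of distinct causes in one proposition matters here.
    return {effect for causes, effect in propositions if len(causes - {effect}) >= 2}


two_fluid_aspects = {
    "Vitreous fluid", "Resinous fluid", "Objects", "Rubbing", "Balance",
    "Electrification", "Repulsion", "Attraction", "Flow", "Touching", "Nearness",
}
two_fluid = [
    prop({"Rubbing", "Objects"}, "Balance"),                  # rubbing decreases Balance
    prop({"Balance"}, "Electrification"),                     # linear, inverse link
    prop({"Touching", "Electrification", "Objects"}, "Flow"),
    prop({"Flow"}, "Balance"),                                # linear: Flow restores Balance
    prop({"Objects", "Nearness", "Balance"}, "Attraction"),
    prop({"Objects", "Nearness", "Balance"}, "Repulsion"),    # "lack of Balance" in the text
]

concatenated = concatenated_aspects(two_fluid)
print(sorted(concatenated))  # ['Attraction', 'Balance', 'Flow', 'Repulsion']
print(round(len(concatenated & two_fluid_aspects) / len(two_fluid_aspects), 2))  # 0.36
```

Electrification is reached only through the single-cause link from Balance, so it stays out of the concatenated set, matching the author's count of four.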

The existence of many theories, with many aspects, called for a new theory that would create “a synthesis that serves not only to reconcile the contradictory features, but to provide explanations of a wider range of phenomena” (Roller & Roller, 1954: 81).

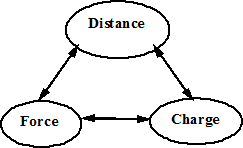

In what may be considered, from the perspective of Roller & Roller, the final stage in the development of this theory, Charles Coulomb developed his theory about 1785. Coulomb focused on only three aspects: Force, Electric charge, and Distance. Treating the product of the two charges as a single aspect (Charge) and setting aside the constant of proportionality, the Force is equal to the Charge divided by the Distance squared. It follows that the Distance squared is equal to the Charge divided by the Force, and that the Charge is equal to the Force multiplied by the Distance squared.

Each of the three aspects of Coulomb’s theory is therefore concatenated from the other two, and the robustness of Coulomb’s theory is 1.0 (the result of three concatenated aspects divided by three total aspects). One benefit of developing a theory with a robustness of 1.0 was that mathematical techniques could now be used in conjunction with the experimental process. The result of this combination was revolutionary.
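A small numeric check illustrates why each aspect of Coulomb's law counts as concatenated. In modern notation the law reads F = k q1 q2 / r^2; this sketch assumes arbitrary illustrative values, absorbs the constant k (k = 1), and treats the product q1 q2 as one "Charge" aspect. Solving for each aspect from the other two recovers the starting values.

```python
import math

# Illustrative values in arbitrary units (assumptions, not measurements).
# k is absorbed (k = 1) and "charge" stands for the product q1 * q2.
charge = 6.0
distance = 2.0

force = charge / distance**2                  # Force from Charge and Distance
charge_again = force * distance**2            # Charge from Force and Distance
distance_again = math.sqrt(charge / force)    # Distance from Charge and Force

assert math.isclose(charge_again, charge)
assert math.isclose(distance_again, distance)

# Each of the three aspects is derived from the other two.
aspects = {"Force", "Charge", "Distance"}
derivations = {
    "Force": {"Charge", "Distance"},
    "Charge": {"Force", "Distance"},
    "Distance": {"Force", "Charge"},
}
concatenated = {a for a, sources in derivations.items() if len(sources - {a}) >= 2}
print(force, len(concatenated) / len(aspects))  # 1.5 1.0
```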

Figure 3 Coulomb’s Theory of Electrostatic Attraction

Discussion

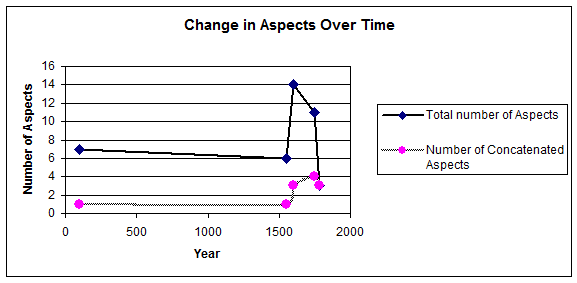

The above investigation used information from Roller & Roller (1954) to benchmark the development of EA theory at five points across hundreds of years. This objective analysis used the total number of aspects for each theory, and the concatenated aspects of those theories to identify the formal robustness of each of the five theories. That information is summarized in Table 1.

Table 1—Summary of Aspects and Robustness of Theories

| Year (CE, approx.) | Total Number of Aspects | Number of Concatenated Aspects | Robustness | Name of Theorist or Theory |
|---|---|---|---|---|
| 100 | 7 | 1 | 0.14 | Plutarch |
| 1550 | 6 | 1 | 0.17 | Cardan |
| 1600 | 14 | 3 | 0.21 | Gilbert |
| 1750 | 11 | 4 | 0.36 | Two-fluid theory |
| 1785 | 3 | 3 | 1.0 | Coulomb |
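The Robustness column of Table 1 follows directly from the two count columns. The short sketch below recomputes it; the `robustness` helper is a hypothetical name, and rounding to two decimal places mirrors the precision used in the table.

```python
# (year, total aspects, concatenated aspects, theorist or theory), from Table 1.
table = [
    (100, 7, 1, "Plutarch"),
    (1550, 6, 1, "Cardan"),
    (1600, 14, 3, "Gilbert"),
    (1750, 11, 4, "Two-fluid theory"),
    (1785, 3, 3, "Coulomb"),
]


def robustness(concatenated: int, total: int) -> float:
    """Ratio of concatenated aspects to total aspects, rounded to two places."""
    return round(concatenated / total, 2)


for year, total, concatenated, name in table:
    print(f"{year:>4}  {name:<17} R = {robustness(concatenated, total):.2f}")
```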

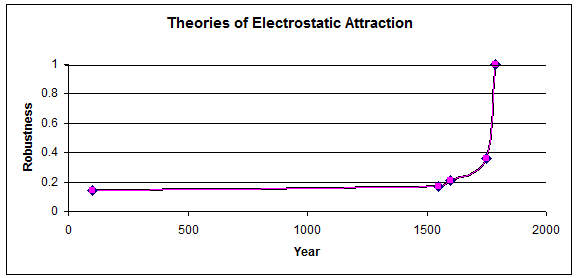

The graph in Figure 4 shows the variation in the total number of aspects and the number of concatenated aspects over time. Note the pre-revolutionary surge during the scientific revolution. This period of increased experimentation led scientists to suggest more aspects and to identify more relationships between those aspects. This may be understood as the time when the “scientist in crisis” is generating “speculative theories” (Kuhn, 1970: 87). Those theories may lead to a “critical mass” of ideas (Geisler & Ritter, 2003) and generate communities of practice (Campbell, 1983: 127). This peak lends validity to the idea that theory which is more complex may be considered more advanced (Ross & Glock-Grueneich, 2008).

Also, note the change in focus over time. Early on, the body of theory included magnetics and electrostatics because both were thought to represent the same essential effect. As time and experimentation progressed, the field of study narrowed to electrostatic attraction alone. Yet, despite the narrowed focus, the number of aspects that may be said to define the theory continued to increase.

The same phenomenon appears to be occurring within the social sciences. In the field of management theory, the fragmentation of the field has been reported as problematic (Donaldson, 1995); yet, that fragmentation might also be understood as a narrowing of the focus—with each fragment representing a more focused sub-field.

Figure 4 Change in Aspects Over Time

In Figure 5, the robustness of theories is plotted over time. Note the rapid increase in robustness on the right-hand side of the chart. This rise corresponds with Kuhn’s description of scientific revolution culminating in paradigmatic change. The asymptotic change suggests an “event horizon” or a “phase shift” beyond which a theory may be considered a law. After this point in paradigm change, the focus would not be on developing new forms of theory; rather, it would be on conducting empirical analysis to verify and/or falsify the theory. Once the theory is verified, the focus would shift to its application in practice as a useful tool. This kind of change has not yet occurred in management theory.

Figure 5 Robustness Over Time

The asymptote at the right-hand side of Figure 5 is also suggestive of a “power curve,” a vertical (or nearly so) line that stands as a diagrammatic indicator that something significant has occurred, such as a quantum increase in the capacity of a system (Kauffman, 1995). In this case, the system under consideration is the structure of a theory. The theory at the top of the curve has significant capacity for enabling action, whereas theories at the bottom of the curve have very limited capacity. In short, theories with higher robustness have greater capacity to support paradigmatic revolution than theories of lower robustness.

The relationship between robustness and paradigmatic revolution suggests that the robustness of a theory may be used as a milestone for marking the objective development of a theory and progress toward revolution. Similarly, the robustness of a theory may also be considered something of a predictor for such a revolution.

In addition to the relatively passive approach of tracking changes in theories developed by others, a more activist approach may also be inferred. That is to say, if a theorist works toward the purposeful creation of robust theory, she may be able to purposefully spark a paradigmatic revolution. With that opportunity, there are also limitations. For example, we should probably disregard a speculative theory that seems to represent a robust relationship if it has no basis in reality.

To conclude this section, the advancement toward robustness appears to be a useful indicator and potential instigator of paradigmatic revolution. The test of robustness might be understood as a validation of theory in the Popperian sense, although it is validation with considerably more rigor than Popper appears to have considered in his time. While validation is useful and necessary, there still remains the need to falsify those theories, as suggested by Popper (2002). In the following two sub-sections, I will investigate the implications of advancing management theory toward robustness. The first will relate more to academicians, who might be more interested in advancing management theory. The second will relate more to practitioners, who might be more interested in the application of robust theory.

Whither Management Theory?

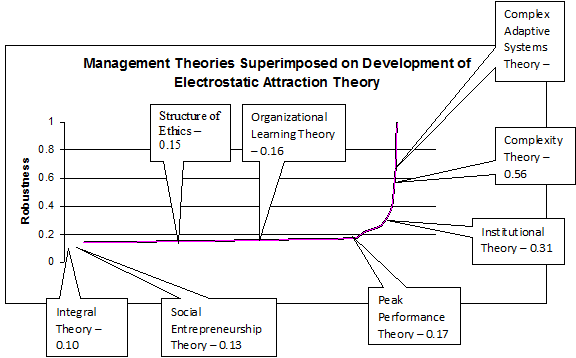

To date, no comprehensive test of robustness has been conducted on the field of management theory. However, some indication of the state of the field may be inferred from tests of robustness performed on bodies of theory from related sub-fields. In Figure 6, the robustness of each of eight theories is indicated on the curve from Figure 5 (the development of EA theory). By showing those theories in relation to the time required to develop EA theory to robustness, we may infer how much time might be required for each body of theory to reach a useful level of robustness. This is, of course, working under the assumption that the current paradigm of management science might follow the same trajectory as EA theory, a question that is very much open for consideration.

Briefly, those eight studies are related to management theory as follows. Social entrepreneurship theory is part of the general rubric of management theory for the simple reason that management is sometimes understood in terms of an entrepreneurial activity. A study of social entrepreneurship theory found a robustness of 0.13 (Wallis, 2009d). Integral theory has been applied to a wide range of disciplines in the social sciences (including management). A study of integral theory found a robustness of 0.10 (Wallis, 2008d). A study of a structure of ethics found a robustness of 0.15 (Wallis, 2009f). Organizational learning theory has been found to have a robustness of 0.16 (Wallis, 2009c). Peak performance theory has a robustness of 0.17 (Wallis, 2009e). Institutional theory is a little better, with a robustness of 0.31 (Wallis, 2009b). Higher levels of robustness are found in studies related to systems theory. A study of complexity theory, as it has been applied to organizations, finds a robustness of 0.56 (Wallis, 2009a). And a study of Complex Adaptive Systems (CAS) theory as it relates to management and organizations finds a robustness of 0.63 (Wallis, 2008b).

Figure 6 Robustness of Some Management Theories

It should be noted that the areas of theory with the highest levels of robustness are those that are more closely related to systems theory (i.e., complexity theory and CAS theory). Therefore, it may be suggested that future studies of management should utilize approaches based in systems theory, cybernetics, and complexity theory. More studies of robustness are required in and of the field of management theory to confirm this idea.

From Figure 6, we may begin to answer the question, “How long until management theory experiences a true paradigmatic revolution?” Most theories of management noted here have a robustness at or below that of Cardan’s theory of EA (Robustness = 0.17). When Cardan presented his theory in 1550, Coulomb’s revolutionary theory was a distant 235 years away. When the two-fluid theory of electricity emerged in 1750 (R = 0.36), Coulomb’s discovery was a relatively modest 35 years in the future. Some management theories today, specifically CAS theory and complexity theory, have levels of robustness that exceed that of the two-fluid theory, suggesting that those theories might achieve revolutionary status much sooner. Accelerating management theories toward robustness (and the related revolution in improved efficacy) is important because of the depth and breadth of problems faced by practitioners.
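The comparison above can be sketched as a lookup: for a given robustness value, find the most advanced EA milestone from Table 1 that it meets or exceeds, and report how many years that milestone preceded Coulomb. This is an illustrative sketch only; the function name is hypothetical, and the year gaps it returns are historical intervals in EA theory, not forecasts for management theory.

```python
from typing import Optional, Tuple

# EA milestones from Table 1: (year, robustness, theory). Coulomb (1785) is the endpoint.
EA_MILESTONES = [
    (100, 0.14, "Plutarch"),
    (1550, 0.17, "Cardan"),
    (1600, 0.21, "Gilbert"),
    (1750, 0.36, "Two-fluid theory"),
]
COULOMB_YEAR = 1785


def nearest_ea_milestone(r: float) -> Optional[Tuple[str, int]]:
    """Most advanced EA milestone whose robustness r meets or exceeds, paired with
    the number of years that milestone preceded Coulomb's theory.
    Returns None when r is below every milestone (i.e., below Plutarch)."""
    reached = [(year, name) for year, milestone_r, name in EA_MILESTONES if r >= milestone_r]
    if not reached:
        return None
    year, name = max(reached)  # milestones rise in both year and robustness
    return name, COULOMB_YEAR - year


# Robustness values reported in the text for some management theories.
management = {
    "Integral theory": 0.10,
    "Social entrepreneurship": 0.13,
    "Structure of ethics": 0.15,
    "Organizational learning": 0.16,
    "Peak performance": 0.17,
    "Institutional theory": 0.31,
    "Complexity theory": 0.56,
    "CAS theory": 0.63,
}
for theory, r in management.items():
    print(theory, r, nearest_ea_milestone(r))
```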

Linking Theory and Practice

Managers, consultants, CEOs, and all kinds of practitioners might read this chapter and ask, “What’s in it for me?” In brief, then, this study suggests that theories of higher robustness are expected to be more effective in application. Further, we may now purposefully advance a theory toward robustness and so enable a paradigmatic revolution. With a paradigm revolution, improvements in theory are associated with highly effective improvements in practice.

To encapsulate an example from history, the EA theories of low robustness were associated with explaining mere curiosities, such as the way that hair is attracted to amber because of exhalations. In management practice, this is like a performance management guru claiming that a stitch in time saves nine. Perhaps it is true in some metaphorical sense, but making it work in practice is entirely in the hands of the employee. Worse, there is no way to tell if these kinds of claims are actually true. This means that managers are likely relying on false information. In short, managers might be better off with no theory at all.

The EA theories of medium robustness, developed during the scientific revolution, were associated with public displays of new scientific insights—creating controlled shocks and showing how electrostatics might work under varying conditions; interesting, but of uncertain value. In management, this might be conceptually similar to conflict resolution or a facilitated organizational change process. There are successes and failures. The successes are widely touted while the failures are quietly ignored. Either way, the credit or blame might be applied to the consultant, the theory, both, or some other factor (such as a sudden change in the economy).

At the end of the scientific revolution, when the understanding of EA reached a robustness of one, it had become a very useful theory. A recent search of the US patent database revealed over 7,000 patents that draw upon the principle of EA. In management terms, using a highly robust theory would be like having a way to accurately predict the behavior of your employees, the actions of the market, or the best strategic plan to follow. Obviously, we have none of those—yet.

As management theory advances toward paradigmatic revolution, we can expect to see the same kinds of evolution in practical application. Early theories will explain curiosities while later theories may be used for effective application. What will the future look like when we have robust theories of management? We can no more predict such a future than Plutarch could predict the invention of cell phones or laptop computers. Rather than attempting to predict an incomprehensible future, this section will serve to suggest what practitioners might do in the near term to improve their use of theory and to support more effective practice.

As we know too well, the emergence of a new management guru is accompanied by the arrival of thick hardback books gracing the bookstore shelves, their covers proclaiming some “new way” to work. Their pages are packed with wisdom-filled anecdotes and careful explanations detailing exactly why the reader should adopt this new point of view. Essentially, each new book represents a new theory, a new lens that provides the thoughtful reader with a new way to see the world. Along with that new view comes the implication and/or description of new actions, policies, and behaviors the practitioner should enact to achieve success. However, implementing a new system is a difficult and expensive process, and, as the limited success of TQM and other methods has shown, past benefits are no indicator of future success. The difficulty here is that practitioners have no certain way of determining whether that guru’s approach is likely to be successful. The present chapter provides some remedy, suggesting that practitioners should choose the theory with the highest level of robustness (rather than choosing theories based only on their popularity) because, as suggested above, theories that are more robust have more capacity to support more effective action.

Because robust theories are not immediately available, a more thoughtful approach is called for. It is important that the practitioner not be a “consumer” of theory—following the faddish dictates of each emerging guru. Rather, the practitioner must become something of a researcher, possibly working in parallel with academicians and in thoughtful interaction with management texts.

An important idea here is that practitioners need to measure what is occurring in the workplace (we have no choice in this; we do it automatically) while, at the same time, our sense of measurement (what we measure and how we measure it) must change. We must learn to understand the invisible things, such as morale (Dubin, 1978; van Eijnatten, van Galen, & Fitzgerald, 2003). In the development of the physical sciences, Kuhn suggests that the emergence of a new paradigm with its robust theory is accompanied by the development of new instruments for measurement (Kuhn, 1970: 28). However, in the study of management, no instruments currently exist for a CEO to measure (for example) the morale of her corporation. Indeed, the only instruments available to practitioners are the practitioners themselves. This “self as instrument” (McCormick & White, 2000) includes the self-identification of phenomenological reactions. An important consideration here is how to calibrate ourselves as an instrument for effective measurement.

Bateson (1979) suggests that the process of calibration is enhanced by the use of “double description” where multiple streams of information are combined to suggest a new, third form of information that is more useful than the previous two. Other examples of double description include binocular vision (where the extra sense of depth is added), and synaptic summation (where neuron A and neuron B must both fire to trigger neuron C). In example after example, Bateson shows how these double descriptions create an extra dimension of understanding. Importantly, this is an understanding that is of a different (and higher) logical type.

A parallel may be drawn between the structure of Bateson’s approach and the structure of theory. As described above, the idea of a concatenated aspect of theory may be found in a proposition describing how aspect A and aspect B combine to explain aspect C. Understanding aspect C through aspects A and B suggests a greater level of understanding, a higher logical type. The parallel between the idea of double description and the idea of concatenated theory suggests that applying the two ideas in parallel might provide more benefits than either one of them alone. In short, this similarity suggests the practitioner might improve the process of self-calibration using robust theory as a guide. The validation of this idea will require additional studies.

Limitations and Opportunities for Future Research

This study is limited because it examines the development of theory in only a single field—electrostatic attraction. Future studies of this type might investigate other forms of theory. For example, studies might be conducted on the evolving robustness of theories of motion, thermodynamics, and relativity. Such studies will provide additional insight into the advancement of management theory.

The present study is also limited because it presents the insights of a single researcher. Future studies may investigate the validity of the present methodology by engaging multiple researchers in parallel studies of the same body of data. It is anticipated that, procedural errors aside, the results of multiple researchers will be similar to those presented here. This kind of study would add greatly to the validity of the metatheoretical conversation and so support the advancement of theory in a useful direction.

Future research involving the testing or development of theory should include propositional analysis as an objective test for the formal robustness of theory. That way, scholars and practitioners will have a method of effectively evaluating the theory and its potential for advancement, calibration, and application. This “R” level should be indicated in the abstract of each publication.

Future studies might also investigate the robustness of management theories over time and investigate the link between robust theory and calibration. These studies will be critical to supporting paradigmatic revolutions in management. More generally, managerial scholars may best be served by abandoning (at least temporarily) the tight focus on empirical research. Rather than employ methods based on empirical perception and so-called facts, scholarship should instead focus on metatheoretical efforts to identify robust relationships between concatenated aspects of theory. Only after we have developed highly robust theories will it make sense to engage in empirical research.

Of course, such an approach is easier said than done. As with the development of electrical theory, we might expect many arguments to emerge around the specific meaning of each aspect of the theory. However, as long as we keep in mind that each aspect should be understood only in terms of two or more other aspects of the theory, we will have a compass pointing toward success in the field of management theory.
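The compass described in the preceding paragraph, together with the proposal to report an “R” level, can be made concrete with a small calculation. What follows is a minimal Python sketch, not the procedure used in this study. It assumes that a theory can be reduced to a list of propositions, that an aspect counts as concatenated when some proposition explains it in terms of two or more other aspects (the criterion stated above), and that R is the ratio of concatenated aspects to all aspects in the theory. The class `Proposition`, the functions `all_aspects`, `concatenated_aspects`, and `robustness`, and the two example theories are hypothetical illustrations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Proposition:
    """Hypothetical representation: the aspects in `explaining` together account for `explained`."""
    explaining: frozenset
    explained: str


def all_aspects(props):
    """Every distinct aspect named anywhere in the theory."""
    aspects = set()
    for p in props:
        aspects |= p.explaining
        aspects.add(p.explained)
    return aspects


def concatenated_aspects(props):
    """Aspects explained by some proposition that draws on two or more *other* aspects."""
    result = set()
    for p in props:
        if len(p.explaining - {p.explained}) >= 2:
            result.add(p.explained)
    return result


def robustness(props):
    """Assumed R level: concatenated aspects divided by total aspects."""
    # Assumption: R = concatenated / total; an empty theory scores 0.0.
    aspects = all_aspects(props)
    if not aspects:
        return 0.0
    return len(concatenated_aspects(props)) / len(aspects)


# Hypothetical theory 1: A and B explain C; C alone explains D.
partial = [
    Proposition(frozenset({"A", "B"}), "C"),
    Proposition(frozenset({"C"}), "D"),
]

# Hypothetical theory 2: every aspect is explained by the other two.
full = [
    Proposition(frozenset({"A", "B"}), "C"),
    Proposition(frozenset({"B", "C"}), "A"),
    Proposition(frozenset({"C", "A"}), "B"),
]

print(robustness(partial))  # 0.25
print(robustness(full))     # 1.0
```

Under these assumptions, the first theory scores R = 0.25 because only C is concatenated, while the second, in which each aspect is understood through the other two, reaches full robustness (R = 1.0). Treating each aspect as a plain string keeps the sketch simple; in practice, deciding whether two differently worded aspects are the same aspect is exactly the contested, interpretive step the text anticipates.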

Conclusion

Although management science does not have theories that are highly effective in practice, we do have a boom in theory creation. In some sense, the increase in theory creation parallels the global information boom. While the business world is well acquainted with the difficulties (and opportunities) presented by that vast amount of information (Wytenburg, 2001), we academicians can readily retreat into our disciplinary niches (or create new ones) and so insulate ourselves from information overload. Instead of retreating, I suggest that we advance; and, in so doing, I suggest that we recalibrate our views of the world.

When we look at the vast number of management theories, we should not see a fragmented and chaotic field. Instead, from a metatheoretical vantage point, we should see a field that is rich in resources from which we can build more robust theories. Rather than focus on empirical data from direct observation, we should use existing theories as data to investigate and advance management theory using rigorous metatheoretical methodologies. Given that we have the pursuit of robustness as a viable direction for positive and objective advancement and that we have a huge field of theory to draw from, *my hope is that we can advance effective theories into practice on the order of years, rather than centuries.*

In the present chapter, I used propositional analysis (a metatheoretical methodology founded on principles of systems theory) to objectively investigate the development of theory over time in terms of its formal robustness. In doing so, I expanded our understanding of paradigmatic revolutions by developing a more detailed account of the role played by the structure of theory in advancing a science toward revolution. Specifically, I explicated how the achievement of fully robust theory appears to be an integral aspect of paradigmatic revolution. Importantly, with this new understanding of formal robustness, we have the opportunity to measure management theory and to purposefully advance it toward paradigmatic revolution and improved efficacy.

What does it take to be an Einstein? What does it take to be a stonemason who can take a pile of bricks and turn it into a well-built house? What does it take to create a revolutionary theory that, in turn, radically alters the fabric of management life? The study presented in this chapter suggests how scholars and practitioners in the field of management may anticipate great professional success by developing and applying management theory of appropriate robustness. Without purposeful advancement, management theory can expect to remain moribund for decades or centuries—with dire implications for practitioners and management programs in universities.

Bibliography

Amsden, R. T., Ferratt, T. W., & Amsden, D. M. (1996). TQM: Core paradigm changes. Business Horizons, 39(6), 6-14.

Appelbaum, R. P. (1970). Theories of Social Change. Chicago: Markham.

Argyris, C. (2005). Double-loop learning in organizations: A theory of action perspective. In K. G. Smith & M. A. Hitt (Eds.), Great minds in management: The process of theory development (pp. 261-279). New York: Oxford University Press.

Ashby, W. R. (2004). Principles of the self-organizing system. [Classical Papers]. Emergence: Complexity and Organization, 6(1-2), 103-126.

Austin, J., Stevenson, H., & Wei-Skillern, J. (2006). Social and commercial entrepreneurship: Same, different, or both? Entrepreneurship Theory and Practice, 30(1), 1-22.

Bartlett, J. (1992). Familiar Quotations: A Collection of Passages, Phrases, and Proverbs Traced to their Sources in Ancient and Modern Literature (16th ed.). Toronto: Little, Brown.

Bateson, G. (1979). Mind and nature: A necessary unity. New York: Dutton.

Bennet, A., & Bennet, D. (2004). Organizational Survival in the New World: The Intelligent Complex Adaptive System. Burlington, Massachusetts: Elsevier.

Bernier, L., & Hafsi, T. (2007). The changing nature of public entrepreneurship. Public Administration Review, 67(3), 488-503.

Boudon, R. (1986). Theories of Social Change (J. C. Whitehouse, Trans.). Cambridge, UK: Polity Press.

Burrell, G. (1997). Pandemonium: Towards a Retro-Organizational Theory. Thousand Oaks, California: Sage.

Campbell, D. T. (1983). Science's social system and the problems of the social sciences. In D. W. Fiske & R. A. Shweder (Eds.), Metatheory in social science: Pluralisms and subjectivities (pp. 108-135). Chicago: University of Chicago Press.

Clarke, T., & Clegg, S. (2000). Management Paradigms for the New Millennium. International Journal of Management Reviews, 2(1), 45-64.

Czarniawska, B. (2001). Is it possible to be a constructionist consultant? Management Learning, 32(2), 253-266.

Daneke, G. A. (1999). Systemic Choices: Nonlinear Dynamics and Practical Management. Ann Arbor: The University of Michigan Press.

de Leeuw, E. (1999). Healthy cities: Urban social entrepreneurship for health. Health Promotion International, 14(3), 261-269.

Dekkers. (2008). Adapting organizations: The instance of business process re-engineering. Systems Research and Behavioral Science, 25(1), 45-66.

Dolan, C. (2007). Feasibility Study: The Evaluation and Benchmarking of Humanities Research in Europe. Arts and Humanities Research Council.

Donaldson, L. (1995). American Anti-Management Theories of Organization: A Critique of Paradigm Proliferation. New York: Cambridge University Press.

Dubin, R. (1978). Theory building (Revised ed.). New York: The Free Press.

Edwards, M., & Volkmann, R. (2008). Integral theory into integral action: Part 8. Retrieved 11/03/08.

Fiske, D. W., & Shweder, R. A. (Eds.). (1986). Metatheory in social science: Pluralisms and subjectivities. Chicago: University of Chicago Press.

Fowler, A. (2000). NGDOs as a moment in history: Beyond aid to social entrepreneurship or civic innovation? Third World Quarterly, 21(4), 637-654.

Geisler, E., & Ritter, B. (2003). Differences in additive complexity between biological evolution and the progress of human knowledge. Emergence, 5(2), 42-55.

Ghoshal, S. (2005). Bad management theories are destroying good management practices. Academy of Management Learning & Education, 4(1), 75-91.

Glaser, B. G. (2002). Conceptualization: On theory and theorizing using grounded theory. International Journal of Qualitative Methods, 1(2), 23-38.

GMU. (2009). John Nelson Warfield. Faculty Expertise Database.

Guo, K. L. (2007). The entrepreneurial health care manager: Managing innovation and change. The Business Review, Cambridge, 7(2), 175-178.

Hammond, D. (2003). The Science of Synthesis: Exploring the Social Implications of General Systems Theory. Boulder, Colorado: University Press of Colorado.

Harder, J., Robertson, P. J., & Woodward, H. (2004). The spirit of the new workplace: Breathing life into organizations. Organization Development Journal, 22(2), 79-103.

Kaplan, A. (1964). The conduct of inquiry: Methodology for behavioral science. San Francisco: Chandler Publishing Company.

Kauffman, S. (1995). At Home in the Universe: The Search for Laws of Self-Organization and Complexity (Paperback ed.). New York: Oxford University Press.

Kuhn, T. (1970). The Structure of Scientific Revolutions (2nd ed.). Chicago: The University of Chicago Press.

Lachmann, R. (Ed.). (1991). The Encyclopedic Dictionary of Sociology (4th ed.). The Dushkin Publishing Group.

Lewis, M. W., & Grimes, A. J. (1999). Meta-triangulation: Building theory from multiple paradigms. Academy of Management Review, 24(4), 672-690.

Maanen, J. V., Sørensen, J. B., & Mitchell, T. R. (2007). The interplay between theory and method. [Introduction to special topic forum]. Academy of Management Review, 32(4), 1145-1154.

MacIntosh, R., & MacLean, D. (1999). Conditioned emergence: A dissipative structures approach to transformation. Strategic Management Journal, 20(4), 297-316.

McCormick, D. W., & White, J. (2000). Using One's Self as an Instrument for Organizational Diagnosis. Organization Development Journal, 18(3), 49-63.

McKelvey, B. (2002). Model-centered organization science epistemology. In J. A. C. Baum (Ed.), Blackwell's Companion to Organizations (pp. 752-780). Thousand Oaks, California: Sage.

Meehl, P. E. (2002). Cliometric metatheory: II. Criteria scientists use in theory appraisal and why it is rational to do so. [Monograph Supplement]. Psychological Reports, 91(2), 339-404.

Mintzberg, H. (2005). Developing theory about the development of theory. In K. G. Smith & M. A. Hitt (Eds.), Great minds in management: The process of theory development (pp. 355-372). New York: Oxford University Press.

Morgan, G. (1996). Images of Organization. Sage.

Mort, G. S., Weerawardena, J., & Carnegie, K. (2003). Social entrepreneurship: Towards conceptualisation. International Journal of Nonprofit and Voluntary Sector Marketing, 8(1), 76-88.

Nickles, T. (2009). Scientific Revolutions. Stanford Encyclopedia of Philosophy. Retrieved 04/23/2009, from http://plato.stanford.edu/entries/scientific-revolutions/

Nonaka, I. (2005). Managing organizational knowledge: Theoretical and methodological foundations. In K. G. Smith & M. A. Hitt (Eds.), Great Minds in Management: The Process of Theory Development (pp. 373-393). New York: Oxford University Press.

Olson, E. E., & Eoyang, G. H. (2001). Facilitating Organizational Change: Lessons From Complexity Science. San Francisco: Jossey-Bass/Pfeiffer.

Pfeffer, J. (2007). A modest proposal: How we might change the process and product of managerial research. Academy of Management Journal, 50(6), 1334-1345.

Popper, K. (2002). The logic of scientific discovery (K. Popper, J. Freed & L. Freed, Trans.). New York: Routledge Classics.

Ritzer, G. (2001). Explorations in social theory: From metatheorizing to rationalization. London: Sage.

Roller, D., & Roller, D. H. D. (1954). The Development of the Concept of Electric Charge: Electricity from the Greeks to Coulomb (Vol. 8). Cambridge, Mass: Harvard University Press.

Ross, S. N., & Glock-Grueneich, N. (2008). Growing the field: The institutional, theoretical, and conceptual maturation of “public participation,” part 3: Theoretical maturation. [Editorial]. International Journal of Public Participation, 2(1), 14-25.

Schön, D. A. (1991). The Reflective Turn: Case Studies In and On Educational Practice. New York: Teachers College Press.

Senge, P., Kleiner, A., Roberts, C., Ross, R. B., & Smith, B. J. (1994). The Fifth Discipline Fieldbook: Strategies and Tools for Building a Learning Organization. New York: Currency Doubleday.

Shareef, R. (2007). Want better business theories? Maybe Karl Popper has the answer. Academy of Management Learning & Education, 6(2), 272-280.

Sheard, S. (2007). Devourer of our convictions: Populist and academic organizational theory and the scope and significance of the metaphor of 'revolution'. Management and Organizational History, 2(2), 135-152.

Shotter, J. (2004). Dialogical Dynamics: Inside the Moment of Speaking. Retrieved June 81, 2004, from http://pubpages.unh.edu/~jds/thibault1.htm

Skinner, Q. (1985). Introduction. In Q. Skinner (Ed.), The return of grand theory in the human sciences (pp. 1-20). New York: Cambridge University Press.

Smith, K. G., & Hitt, M. A. (Eds.). (2005). Great Minds in Management: The Process of Theory Development. New York: Oxford University Press.

Smith, M. E. (2003). Changing an organization's culture: Correlates of success and failure. Leadership & Organization Development Journal, 24(5), 249-261.

Southerland, J. W. (1975). Systems: Analysis, administration, and architecture. Van Nostrand.

Stacey, R. D., Griffin, D., & Shaw, P. (2000). Complexity and Management: Fad or Radical Challenge to Systems Thinking? New York: Routledge.

Stapleton, I., & Murphy, C. (2003). Revisiting the Nature of Information Systems: The Urgent Need for a Crisis in IS Theoretical Discourse. Transactions of International Information Systems, 1(4).

Starbuck, W. H. (2003). Shouldn't organization theory emerge from adolescence? Organization, 10(3), 439-452.

Steier, F. (1991). Research and Reflexivity. London: Sage Publications.

Steingard, D. S. (2005). Spiritually-Informed Management Theory: Toward Profound Possibilities for Inquiry and Transformation. [Essay]. Journal of Management Inquiry, 14(3), 227-241.

Stinchcombe, A. L. (1987). Constructing social theories. Chicago: University of Chicago Press.

Sutton, R. I., & Staw, B. M. (1995). What theory is not. [ASQ Forum]. Administrative Science Quarterly, 40(3), 371-384.

Van de Ven, A. H. (2007). Engaged scholarship: A guide for organizational and social research. New York: Oxford University Press.

van Eijnatten, F. M., van Galen, M. C., & Fitzgerald, L. A. (2003). Learning dialogically: The art of chaos-informed transformation. The Learning Organization, 10(6), 361-367.

Wallis, S. E. (2006a, July 13). A sideways look at systems: Identifying sub-systemic dimensions as a technique for avoiding an hierarchical perspective. Paper presented at the International Society for the Systems Sciences, Rohnert Park, California.

Wallis, S. E. (2006b). A Study of Complex Adaptive Systems as Defined by Organizational Scholar-Practitioners. Unpublished Metatheoretical Dissertation, Fielding Graduate University, Santa Barbara.