Is There a Complexity Beyond the Reach of Strategy?

Max Boisot

ESADE, ESP

Introduction

A quick overview of the development of strategy over the past three decades suggests that it has been getting steadily more complex (Stacey, 1993; Garratt, 1987). This is both a subjective and an objective phenomenon. Objectively speaking, casual empiricism points to a world that is increasingly interconnected and in which the pace of technological change has been accelerating. The arrival of the internet is evidence of increasing connectivity—some managers find upward of 200 emails waiting for them each morning when they arrive at the office. The persistence and replication of Moore's Law are evidence of accelerating technical change. The spirit of Moore's Law—which stated that the speed of computer chips would double every 18 months and that their costs would halve in the same period—has now spread out beyond the microprocessors and memory chips to which it was first applied (Gilder, 1989) and has started to invade a growing number of industries (Kelly, 1998). As a result, corporate and business strategists are today expected to deal with ever more variables and ever more elusive, nonlinear interactions between those variables. What is worse, in a regime of “time-based competition,” they are expected to do so faster than ever before. This often amounts to a formidable increase in the objective complexity of a firm's strategic agenda.

Complexity as a subjectively experienced phenomenon has also been on the increase among senior managers responsible for strategy. While lower-level employees are working shorter hours, senior managers are working longer hours. Having to deal with a larger and more varied number of players, they travel more. They meet each other for breakfast, for lunch, and for dinner. And in New York, busy managers now balkanize their lunch, with the first course being devoted to one meeting in one restaurant, the second course being reserved for a second meeting in a second restaurant, and so on. They come out of their meals with more things to think about and with less time to think about them in. Can such growing complexity be tamed by some intelligible ordering principle of the firm's own devising, i.e., is it what mathematicians refer to as “algorithmically compressible” (Chaitin, 1974; Kolmogorov, 1965)? Or does it simply have to be endured and dealt with on its own terms? In other words, can complexity be reduced or must it be absorbed? Adapting a certain number of simple concepts drawn from both computational and complexity theory, and applying them within a conceptual framework that deals with information flows (Boisot, 1995, 1998), we address this issue in the present article.

The claims of neoclassical economic theory to the contrary notwithstanding, we have come to realize that human economic agents are boundedly rational creatures. There is a limit to the complexity that they can handle over a given time period (Simon, 1957). Organizations are devices for economizing on bounded rationality. They create routines for the purpose of reducing the volume of data-processing activities with which they have to deal (March and Simon, 1958). Routines, in a sense, embody working hypotheses concerning both the way that selected portions of the world function and how they can be mastered. Routines, therefore, carry a strong cognitive component that reflects individual or collective sense making and understanding (Weick, 1995).

Nelson and Winter, writing in an evolutionary vein, see such routines as units of selection (Nelson and Winter, 1982). Firms that fail to evolve new and adapted routines in response to changing circumstances sooner or later get selected out—they are penalized if they fail to revise their working hypotheses in a timely manner in the face of disconfirming evidence. Obviously, timeliness is a relative concept, and some environments will be more munificent with respect to the availability of time than others. Yet it is equally obvious that the faster and the more extensively circumstances change, the less time will be available for adaptation to take place and the more likely it is that any given firm will be selected out, to be replaced by new, better-adapted competitors. In such a case, a failure of learning and adaptation at the level of the individual firm is compensated for by learning at the level of a population of firms.

But are cognitive strategies that aim at sense making and the creation of new routines the only option open to firms for coping with the boundedness of rationality when confronted with complexity and change? Is understanding a prerequisite for effective adaptation? In answering these questions, it is worth recalling the relationship that has been posited between task or task environment and organization (Woodward, 1965; Lawrence and Lorsch, 1967). Simply put, the evidence is that task shapes organization structure. The relationship had originally been established at the level of individual organization units within a firm, but it is in effect a fractal one—that is to say, self-similar at different levels of analysis (Mandelbrot, 1982). It operates wherever we find agency, action, and structure working together. Narrowly construed and embedded deep within the firm, tasks are operational, e.g., assembling a vehicle, writing a marketing report, etc. At the broadest and highest level, however, tasks become strategic so that strategy shapes structure (Chandler, 1962), and aims either to align the firm as a whole with the requirements of its environment or to shape the environment so as to render it hospitable to the firm and its possibilities (Weick, 1979).

We can now phrase the issue before us as follows: Do increases in the complexity of a firm's strategic task of themselves call for changes in the way that the strategy process is organized within the firm? And should these changes be primarily cognitive, i.e., should they aim to accelerate and facilitate the sense-making process among senior managers so that these can initiate the creation of new and better-adapted routines?

The fit between task and organization turns out to be one variant of Ross Ashby's (1954) Law of Requisite Variety (LRV). Adaptive learning requires that the range and variety of stimuli that impinge on a system from its environment be in some way reflected in the range and variety of the system's repertoire of responses. For variety read complexity—or at least one variant of it (see below). Thus, another way of stating Ross Ashby's law is to say that the complexity of a system must be adequate to the complexity of the environment in which it finds itself.

Note that we do not necessarily require an exact match between the complexity of the environment and the complexity of the system. After all, the complexity of the environment might turn out to be either irrelevant to the survival of the system or amenable to important simplifications. Here, the distinction between complexity as subjectively experienced and complexity as objectively given is useful. For it is only where complexity is in fact refractory to cognitive efforts at interpretation and structuring that it will resist simplification and have to be dealt with on its own terms. In short, only where complexity and variety cannot be meaningfully reduced do they have to be absorbed.

So an interesting way of reformulating the issue that we shall be dealing with in this article is to ask whether the increase in complexity that confronts firms today has not, in effect, become irreducible or “algorithmically incompressible.” And if it has, what are the implications for the way that firms strategize?

In tackling these two questions, we shall take strategic thinking to be a socially distributed data-processing activity involving a limited number of agents within a population of agents that make up a firm. Strategic thinking involves the sharing of diverse yet partially overlapping representations between agents, with a firm's strategy being an emergent outcome of the way that such representations are shared (Eden and Ackermann, 1998). The structuring and sharing of knowledge between agents lie at the heart of the approach that we propose to adopt.

A CONCEPTUAL FRAMEWORK: THE I-SPACE

Organizations are data-processing and data-sharing entities. They are made up of agents who successfully coordinate their actions by structuring and sharing information both with insiders—i.e., in hierarchies—and with outsiders—i.e., in markets (Williamson, 1975). Because agents are often subject to information overload, however, they are generally concerned to minimize both the amount of data they need to process and the amount they need to transmit in any time period (March and Simon, 1958; Boisot, 1998). For this reason, organizational agents, when acting purposefully and under some constraint of time and resources, exhibit a general preference for data that already has a high degree of structure and is therefore easy to transmit.

But how does data get processed into meaningful structures in the first place? Data processing has two dimensions: codification and abstraction. Codification can be thought of as the creation of categories to which phenomena can be assigned, together with rules of assignment. Well-codified categories are clear categories and well-codified assignment rules are clear rules. Thus the smaller the amount of data processing required to assign a phenomenon to a category, the faster and the less problematic the assignment will be. We then say that both the phenomenon and the category to which it is assigned are well codified. Uncodified categories and rules of assignment, by contrast, are characterized by fuzziness and ambiguity. Assigning phenomena to categories will then be slow and costly in terms of data processing. Where no assignment can be made at all, the amount of data processing required to perform an act of categorization may well go to infinity.

If codification is about minimizing the amount of data processing required to assign phenomena to categories, abstraction establishes the minimum number of categories required to make such assignments meaningful. The fewer the categories required, the more abstract our treatment of the phenomenon can be and the larger the data-processing economies on offer. By contrast, the larger the number of categories required to perform a meaningful assignment, the closer we are to the concrete realities of the natural world. At the extreme, when no abstraction is possible, the number of potential categories available to us runs to infinity and we find ourselves dealing with concrete data in its full complexity.

Codification and abstraction are cognitive strategies that any intelligent agent deploys in order to economize on data-processing costs. The two strategies mutually reinforce each other and help the agent make sense of its world by giving it a meaningful structure. They form two of the three dimensions of our conceptual framework. The sharing of data between agents is captured by a third dimension that describes data-diffusion processes. We can think of diffusion as the percentage of data-processing agents within a given population that can be reached by an item of data per unit of time. Agents may, but need not, be human. A population of firms, for example, could be located along the diffusion dimension, in which case one might well be dealing with an industry. Or, more fancifully perhaps, the population of agents could be neurons. All that is required for the purposes of I-Space analysis is that agents be capable of receiving, processing, and transmitting data. The agents that are to be located on the diffusion scale, however, have to be chosen with care to avoid mixing apples with oranges. Firms, for example, cannot jostle with individuals on the scale without undermining the analysis. A second issue is that agents have to be placed there for a reason. That is, they must share some interest with respect to the data that flows in the I-Space.

The structuring and sharing of data are related. The more one can codify and abstract the data of experience, the more rapidly and extensively it can be transmitted to a given population of agents. The relationship is indicated by the curve of Figure 1. At point A on the curve one is in the world of Zen Buddhism, a world in which knowledge is highly personal and hard to articulate. It must be transmitted by example rather than by prescription. But examples are often ambiguous and open to different interpretations. Zen knowledge, therefore, can only be effectively shared on a face-to-face basis with trusted disciples over extended periods of time (Suzuki, 1956).

Figure 1. The diffusion curve in the I-Space

Point B on the curve, by contrast, describes the world of bond traders. This is a world where all knowledge relevant to trading has been codified and abstracted into prices and quantities. This knowledge, in contrast to that held by Zen masters, can diffuse from screen to screen instantaneously and on a global scale. Face-to-face relationships and interpersonal trust are not necessary. Only the technical and legal systems that support transactions need to be trusted, not transacting agents themselves.

Our Zen Buddhists and bond traders are, of course, caricatures. In the real world, some Zen masters trade in bonds and some bond traders practice Zen meditation. What our example is intended to highlight is how different the information environments that confront agents can be, as they go about their business. The fact that certain agents will be exposed to a greater variety of information environments than others does not fundamentally alter the picture.

TRANSACTIONAL STRATEGIES IN THE I-SPACE

The possibilities available to agents for structuring and sharing data, then, create different information environments. Think, for example, of what happens when information is readily structured—and hence diffusible—but its actual diffusion is under some kind of central control. It is then often only made available to agents on a “need-to-know” basis. In such an information environment, the possession of well-structured knowledge will be treated as a source of organizational power over others and thus carefully hoarded. At the other extreme, we can think of situations in which knowledge is freely available to agents but in fact only diffuses in a limited way—and this by interpersonal means—on account of its being relatively uncodified and concrete. Knowledge will then become the property of small groups of agents whose size is limited by the possibilities of entertaining trust-based face-to-face relationships.

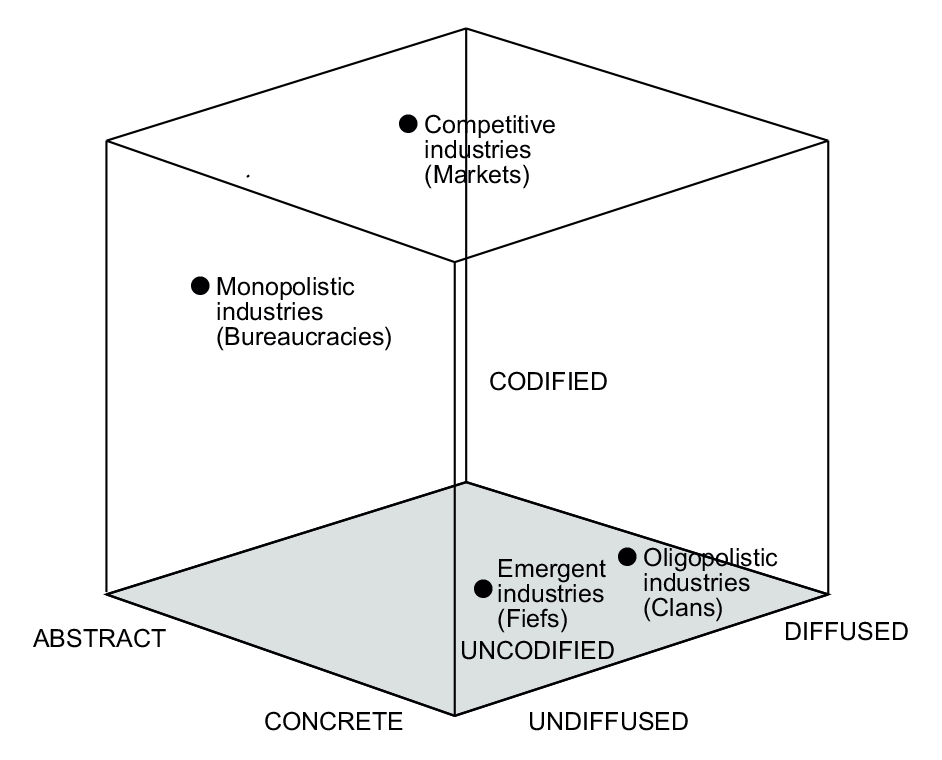

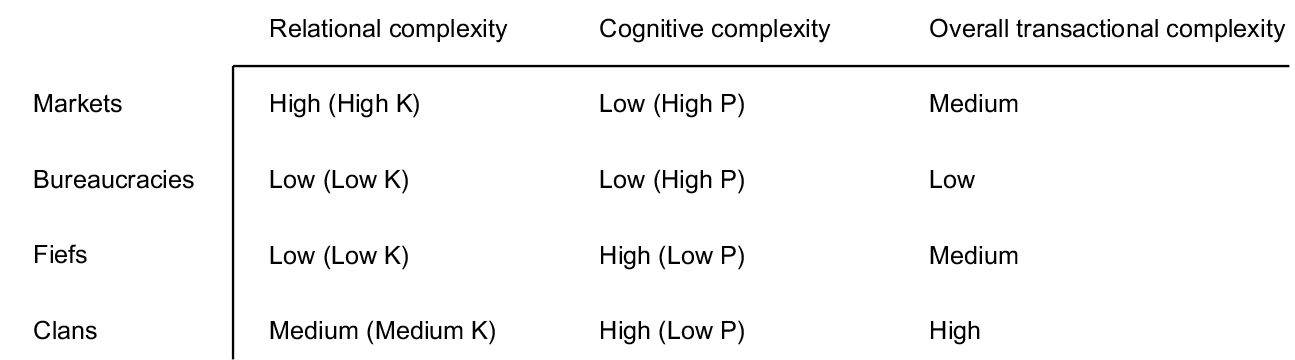

Differences in the possibilities for structuring and sharing data can bring forth distinctive cultural practices and institutional arrangements. We identify four of these in the I-Space (Figure 2) and outline their essential characteristics in Table 1. The features distinguishing such institutional arrangements from each other are:

- the extent to which exchange relationships need to be personalized and the degree of interpersonal trust that they require;

- the extent to which data is asymmetrically held and hence constitutes a source of either personal or formal power;

- the degree to which specific types of exchange are recurrent and hence allow for emergent processes to operate.

Trust requires some ability by agents to get on to the same wavelength and implies some sharing of values. Power relationships require acquiescence. In this way, and drawing on Giddens's Structuration Theory (Giddens, 1984), we move beyond purely cognitive issues of signification to address problems of legitimation and dominance (Boisot, 1995).

Figure 2. Institutions in the I-Space

Table 1. Institutions in the I-Space

The institutional structures located in the different regions of the I-Space lower the costs of processing and sharing data and hence of transacting in those regions. They can be thought of as a set of emergent Nash equilibria in iterated games between varying numbers of agents, equilibria that are partly shaped by the characteristics of the information environment in which the games take place. In effect, then, agents face two options when seeking data processing and transmission economies:

- Where data is amenable to codification and abstraction, move out along the codification and abstraction dimensions.

- Where it is not, foster the emergence of institutional structures appropriate to the information environment in which they find themselves.

These structures, as Nash equilibria, then act as what mathematicians call attractors in the I-Space, pulling in and shaping any transactions located in their neighborhood or “basin of attraction.”

The institutional structures depicted in Figure 2 can work individually or in combination. And as we have already indicated, they can also be adapted to the needs of different types of data-processing agents. Figure 3, for example, locates a population of organizational employees along the diffusion dimension of the I-Space, i.e., it represents a firm. The diagram also assigns some of the key functions of the firm respectively to those regions of the I-Space that best describe their information environments. Where such an assignment is valid—and whether it is or not is ultimately an empirical matter that will depend on firm and industry characteristics—we would expect such functions to exhibit the cultural traits predicted respectively for each of these regions. The firm itself, therefore, would accommodate a variety of institutional cultures that then need to be integrated. Where one of these cultures predominates—i.e., acts as a strong attractor—at the expense of the others, dysfunctional behaviors are likely to appear. Thus, for example, a strong sales department driven by well-defined customer needs in a competitive environment operates within a timeframe that could undermine the more long-term and “blue skies” approach of an R&D department, should this be unable to defend its organizational interests.

Figure 3. Some firm-level functions in the I-Space

Figure 4 treats the firm itself as a data-processing agent in its own right and depicts a population of firms in an industry. Here, the I-Space allows us to explore industry-level structures and cultures. We see from the diagram that monopolistic and oligopolistic industries may have quite distinct cultures, and that these, in turn, are likely to differ significantly from industries characterized as either competitive or emergent.

COMPLEXITY IN THE I-SPACE

The issue we are addressing is whether the growing complexity that the firm confronts remains accessible to strategic processes. We therefore now ask: Do any of the concepts coming out of the new sciences of complexity have anything to contribute to strategic thinking, and do they lend themselves to treatment in the I-Space?

Figure 4. Industry structures in the I-Space

The first point to note is that some of the measures of complexity that have been put forward find echoes in our codification and abstraction dimensions. Gregory Chaitin (1974) and Andrei Kolmogorov (1965), for example, have each separately developed the concept of Algorithmic Information Content (AIC). AIC is measured by the shortest program that will describe a phenomenon such that it can be faithfully reproduced; our own definition of codification is the minimum number of bits of information that will allow us adequately to describe a phenomenon. Murray Gell-Mann has pointed out, however, that such “crude” complexity, as defined by AIC, is indistinguishable from randomness (Gell-Mann, 1994). He proposes a measure of what he terms “effective complexity” to complement AIC, which he defines as the shortest program that will describe the regularities that characterize a phenomenon; our own definition of abstraction is the minimum number of categories that will allow us adequately to capture a phenomenon. Clearly, if we adopt and adapt the definitions offered by Chaitin, Kolmogorov, and Gell-Mann, what we mean by information structuring can now be interpreted as an instance of algorithmic compressibility, a reduction in data-processing complexity. Equally clearly, the carrying out of such a reduction is a cognitive process.
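The link between structure and compressibility can be illustrated with a small sketch. AIC itself cannot be computed in general, so the example below uses a general-purpose compressor (Python's `zlib`) as a rough, upper-bound stand-in; that substitution, the sequence length, and the variable names are assumptions made for illustration and are not part of the I-Space framework.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data, 9)) / len(data)


rng = random.Random(0)  # fixed seed so that the run is repeatable
n = 10_000

# A highly regular sequence: one short rule ("repeat AB") generates all of it.
regular = b"AB" * (n // 2)

# A sequence with no exploitable regularity: pseudo-random bytes.
noisy = bytes(rng.getrandbits(8) for _ in range(n))

ratio_regular = compression_ratio(regular)
ratio_noisy = compression_ratio(noisy)

print(f"regular sequence: {ratio_regular:.3f}")
print(f"random sequence:  {ratio_noisy:.3f}")
```

The regular sequence shrinks to a small fraction of its length, whereas the random one barely shrinks at all. In the terms used above, the first is algorithmically compressible and the second has to be handled at close to full length.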

To deal with the diffusion dimension of the I-Space, we must turn to the work of Stuart Kauffman (1993, 1995). Kauffman has been investigating the process of self-organization from a theoretical biologist's perspective. His random Boolean networks—he calls them NK networks—consist of nodes and linkages that switch on and off in a binary fashion, where N stands for the number of nodes in the network and K measures the density of connections between the nodes. Again, with some adaptation, NK networks allow us to examine the emergence of complex interactions in a population of agents. All we require is that the nodes exhibit some minimal data-processing capacity and that the linkages be treated as communication channels between nodes. Treating each node as an agent, we can then establish measures of data-processing complexity for each one. With increasing data-processing complexity, Kauffman's model comes to look increasingly either like a neural net—where nodes can extend their communicative reach beyond their immediate neighbors (Aleksander and Morton, 1993)—or like a cellular automaton—where they cannot (Wolfram, 1994).

Following Kauffman, we shall let N represent the number of agents in our target population—N thus corresponds to the length of our diffusion dimension—and K the degree of agent interconnectedness. Thus an agent with a high K enjoys extensive interactions with other agents, whereas one with a low K may be feeling pretty lonely. Kauffman then offers us a tuning parameter P—developed by two of his colleagues, Bernard Derrida and Gerard Weisbuch of the Ecole Normale Supérieure in Paris—to represent any switching bias present in the network; that is, the probability that the link between any two nodes will be activated. Where P has the value of 0.5, for example, no switching bias is present. Linkages between nodes are equally likely to be activated and to stay dormant so that the network behaves chaotically. As P approaches the value of 1, however, the network behaves in an increasingly orderly fashion, until at 1 it reaches a frozen or steady state, either fully “on” or fully “off.”

Kauffman's P bears a striking resemblance to Shannon's H, his measure of entropy or information in a channel (Shannon and Weaver, 1949). In Shannon's scheme, H reached its maximum value when symbols in a sequence were equally likely to follow each other. Where the symbol sequence exhibited bias, this could be exploited by a suitable coding scheme to reduce the length of the sequence, i.e., it could be structured and its complexity reduced. We shall use P as a rough measure of data-processing complexity, with a low value of P (at or close to 0.5) corresponding to low levels of codification and abstraction, and a high value of P (at or close to 1) corresponding to high levels of codification and abstraction. Clearly, in our interpretation of P, we are once more combining Gell-Mann's crude and effective complexity in a single measure. The I-Space itself, however—like Gell-Mann—keeps the two concepts distinct.
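The resemblance between P and H can be made concrete for the simplest case, a source that emits only two symbols. The sketch below uses the standard binary entropy formula; treating Kauffman's P as the bias of such a two-symbol source is the analogy drawn in the text, and the function name `binary_entropy` is a hypothetical label chosen for this illustration.

```python
import math


def binary_entropy(p: float) -> float:
    """Shannon entropy, in bits per symbol, of a two-symbol source with bias p."""
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no information
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


# Sweep P from "no bias" (0.5) to "fully frozen" (1.0).
for p in (0.5, 0.7, 0.9, 0.99, 1.0):
    print(f"P = {p:<4}  H = {binary_entropy(p):.3f} bits")
```

At P = 0.5 the entropy is one full bit per symbol, so nothing can be saved by recoding; as P moves toward 1 the entropy falls toward zero, which is the sense in which a biased sequence can be structured and its complexity reduced.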

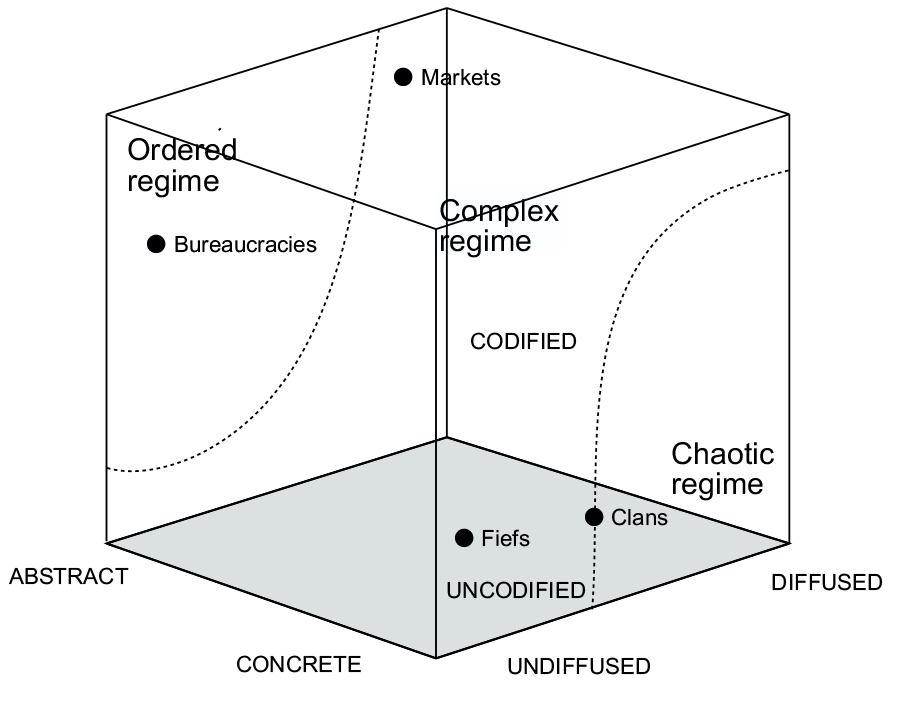

By varying K and N and appropriately tuning P, Kauffman establishes phase transitions between ordered, complex, and chaotic regimes in random Boolean networks. In a similar fashion, by tuning P and varying K for a given N—in our own analysis, to keep things simple, we shall hold the number of agents located along the diffusion dimension constant, even though in real life agents are constantly coming and going along it—we can create phase transitions in the I-Space that reflect ordered, complex, and chaotic social processes. Thus, for example, where the value of P is high—i.e., close to the value 1—and the value of K is low—i.e., the density of interaction among agents is low—we are in an ordered regime where things are stable and predictable. Where, by contrast, the value of P is close to 0.5 and the value of K is high, we find ourselves in a chaotic regime where nothing is stable and valid predictions are hard to come by. In between these values for P and K, we operate in a complex regime exhibiting varying degrees of stability and, hence, predictability.
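The contrast between regimes can be explored with a toy simulation. The sketch below is a minimal random Boolean network under stated assumptions, not Kauffman's own model or the empirical design mentioned in this article: P is implemented as the bias of each node's truth table (the usual reading in the random Boolean network literature), updates are synchronous, and stability is gauged by how far two runs of the same network drift apart when one starts with 10 percent of its nodes flipped. All function names and parameter values are hypothetical.

```python
import random


def make_network(n, k, p, rng):
    """Each node reads k randomly chosen nodes and looks the result up in its
    own truth table, whose entries are 1 with probability p (the bias)."""
    inputs = [rng.sample(range(n), k) for _ in range(n)]
    tables = [[1 if rng.random() < p else 0 for _ in range(2 ** k)]
              for _ in range(n)]
    return inputs, tables


def step(state, inputs, tables):
    """Synchronous update: every node switches on or off at the same time."""
    new_state = []
    for node_inputs, table in zip(inputs, tables):
        index = 0
        for src in node_inputs:
            index = (index << 1) | state[src]  # pack input bits into an index
        new_state.append(table[index])
    return new_state


def mean_divergence(n, k, p, steps=50, trials=20, flip_fraction=0.1, seed=0):
    """Average fraction of nodes on which two runs of the same network differ
    after `steps` updates, the second run starting with some nodes flipped."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        inputs, tables = make_network(n, k, p, rng)
        a = [rng.randint(0, 1) for _ in range(n)]
        b = list(a)
        for i in rng.sample(range(n), int(flip_fraction * n)):
            b[i] ^= 1  # perturb the second copy
        for _ in range(steps):
            a = step(a, inputs, tables)
            b = step(b, inputs, tables)
        total += sum(x != y for x, y in zip(a, b)) / n
    return total / trials


ordered = mean_divergence(n=200, k=1, p=0.9)   # high P, low K
between = mean_divergence(n=200, k=2, p=0.5)   # intermediate setting
chaotic = mean_divergence(n=200, k=5, p=0.5)   # P at 0.5, high K

print(f"high P, low K : {ordered:.3f}")
print(f"intermediate  : {between:.3f}")
print(f"P = 0.5, high K: {chaotic:.3f}")
```

With a high P and a low K the initial perturbation should die out almost entirely, which corresponds to a stable and predictable regime. With P at 0.5 and a high K the two runs should remain far apart, so that knowing one tells us little about the other. N is held constant throughout, in line with the simplification adopted above.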

What are we in fact doing? Nothing more than varying either the amount of data processing that agents are required to carry out or the density of social interaction in which they are expected to engage. Although we are not yet in a position to present empirical results for this exercise—a research project is just getting under way at the Wharton Business School to test out the idea—we can offer an indication of what kinds of hypotheses might be tested by it.

COMPLEXITY REDUCTION (ANALYSIS) VERSUS COMPLEXITY ABSORPTION (EMERGENCE)

The term “culture” has been defined in many ways (Kroeber and Kluckhohn, 1952), but nearly all of them involve the structuring and sharing of data within or across groups. How effectively this is done is a function of the volume of data that is to be shared, the size of the group or groups with which it has to be shared, and the density of social interaction within or between such groups. Figure 2 locates institutional structures in the I-Space as a function of these three variables, and the way that such structures in turn combine in the real world imparts to a given culture a unique configuration or “signature” in the Space. In effect, the location and nature of institutional structures in the I-Space reflect both the complexity of the data-processing environment in which they find themselves and that of the social interactions to which they give rise. Data-processing activities and social interaction thus place these structures in a phase space as indicated in Figure 5, and according to the criteria outlined in Table 2. As we can see from the figure, bureaucracies clearly sit in the ordered regime, whereas markets and fiefs occupy the complex regime. Note, however, that the complexity of markets is due to the number of agents that need to be coordinated, whereas that of fiefs is attributable to the fuzziness of the information environment. Thus, whereas markets operate with a P value closer to 1—i.e., with prices that codify and diffuse all the relevant information—fiefs operate with a P value closer to 0.5. How close is an empirical question that cannot be addressed here. Clans, although also characterized by complexity, seem to be located close to the chaotic regime—with low values for P and medium values for K, they sit on the “edge of chaos” (Langton, 1992).

Figure 5. Institutions in phase spaces

Over time, cultural and organizational evolution moves us from one set of institutional arrangements to another (North, 1990). As we move, we shall sometimes experience phase transitions reflecting the extra expenditures of cognitive and social energy required both to overcome the attractive forces of a given institutional arrangement acting as a Nash equilibrium, and to adapt to a new institutional regime. Whether it is worth moving or not depends on how far the benefits of doing so counterbalance the costs incurred in doing so. The benefits are measured in savings on energy expenditures, i.e., economies achieved either in the processing of data or in the coordination of agent interaction. The costs are the converse of the benefits: energy expended in learning how to process data in a new region of the I-Space and to coordinate new kinds of interactions between agents. We find ourselves, in effect, confronting the same kind of choices as those identified in the literature on transaction cost economics (Coase, 1937; Williamson, 1975, 1985; Eggertsson, 1990), except that, given our broader treatment of data processing and cognitive issues, our options extend beyond those of markets and hierarchies tout court (Boisot, 1986).

Table 2. The complexity of transactional structures

This is just as well. For we still have to cope with the effects of entropy in the I-Space, the tendency for data-processing activities and interactions between agents to lose their structure and become increasingly disordered over time. As might be imagined, the rate of entropy production is at its minimum in the ordered regime and at its maximum in the chaotic regime. We know from the second law of thermodynamics that in a closed system, entropy can never decrease. In the I-Space, we can effectively attempt to “close” the system by holding N, the number of agents, constant. That is to say, we can try to limit the entry and exit of agents into the I-Space by controlling access to the diffusion scale.

If we succeed, entropy will then increase in the system in two distinct ways. First, data is always undergoing diffusion in the Space and hence tending to move transactions toward the right—toward the complexity of markets in the upper regions of the Space, and toward the chaos that lies beyond clans in its lower regions. Second, data that has been highly structured by moves along the codification and abstraction dimensions becomes subject to the action of time, i.e., to institutional forgetting. Although with structured data the loss of institutional memory will operate more slowly than in the case of unstructured data, over time, unless maintained by further expenditures of energy, the structures created to preserve data gradually erode, thus pulling data-processing activities back into the lower regions of the I-Space, where they become uncodified and concrete.

We can think of our institutional structures as emergent mechanisms that have the effect of minimizing the rate of entropy production in the type of information environment in which they find themselves. They capture and stabilize transactions, temporarily blocking—or at least slowing down—their movement either downward or toward the right in the I-Space. In the absence of such structures, all transactions sooner or later drift into the chaotic regime and, unless they are “open” to new inputs of energy and information—usually provided by new agents entering the I-Space—organizations disintegrate in a Hobbesian “war of all against all.”

Generally speaking, wherever they can do so, we see entropy-minimizing firms seeking out the ordered regime, one in which the value of P is high and the value of K is low. Firms prefer stability to instability and will simplify and routinize wherever they can. When is that? Whenever they have enough understanding of the tasks they face to reduce their data-processing load, as well as enough power to manage directly the coordination of agent interactions. Firms, then, pace Tom Peters (Peters, 1992), do not thrive on chaos if they can possibly help it. Some degree of chaos may be a precondition for creativity and renewal, but chaos is also destructive of identity (Schumpeter, 1934) and firms, like most of us, typically prefer what already exists (us) over what could exist (others). Under most circumstances, therefore, they shun the chaotic regime in the I-Space—one that is unsustainably high in energy expenditures—and, more often than not, they also seek to escape from the complex regime into the stability and security of the ordered regime, of simple and predictable routines, and of uncomplicated, hierarchical relationships. In short, wherever possible, firms will economize on transaction costs by opting for bureaucracies in the I-Space, an institutional form that offers stability and order to firms experiencing their first significant growth (Boisot and Child, 1988, 1996).

Yet what happens when the cognitive understanding required to move up the I-Space into bureaucracies is absent? Or when the power to coordinate agent interaction—a move to the left in the I-Space—is lacking? Is a gradual drift into the chaotic region of the Space the only option?

We argue that a firm has available two quite distinctive strategies for countering the action of entropy in the I-Space. Assuming that it is not yet in the chaotic regime and hence disintegrating as an organized entity, it can seek to reduce whatever complexity it confronts through cognitive and relational strategies that will move it toward the ordered regime, i.e., by increasing the value of P and decreasing the value of K or of N or of both. Alternatively, it can seek to absorb such complexity by first allowing some drift toward the right and then settling down in a location that stops short of the chaotic regime, a strategy that requires the firm to invest in institutional and cultural arrangements appropriate to that location. Given that they lie outside the ordered regime, markets, fiefs, and clans must be considered as much complexity-absorbing institutions as they are complexity-reducing ones.

One feature that distinguishes bureaucracies from these other institutional forms is the tight degree of coupling between agents. Fiefs, markets, and clans are all characterized by varying degrees of loose coupling between agents. Bureaucracies are bound into rigid hierarchical structures by well-structured roles and routines and a well-defined and accepted set of unitary goals. Fiefs also exhibit hierarchy, but the cement that binds agents together is much weaker: personal loyalty, owed to transient agents rather than to institutionalized roles. Markets bring agents together in well-structured and legally enforceable transactions, but typically, when we are dealing with markets that are “efficient” (Roberts, 1987), these are “spot” exchanges or at least time-limited ones. Only labor-market relationships are more durable, but then, once contracted, these take the transacting parties out of the market and often place them in bureaucracies. Outside the employment relationship, market players remain atomized, each free to pursue their own interests through a sequence of spot market transactions. Coupling in markets is thus well structured but highly transient and episodic.

Finally, clans are flexible structures that work through personal negotiation and mutual adjustment. Participants in clan transactions share the gain and the pain. Here the binding of agents to each other is achieved through mutual trust. Personal trust is necessary precisely because the nature of the coupling is so uncertain and contingent and because, in contrast to markets, legal enforcement mechanisms are so weak. The looser the coupling between agents, the larger the degrees of freedom they enjoy in what they think and how they behave, and the greater the variety that they can draw on when dealing with increasingly complex tasks. Loose coupling between agents is more difficult to manage than tight coupling. But loose coupling, by increasing requisite variety, allows the firm to manage (i.e., absorb) irreducible complexity over a wider range of states than tight coupling.

The decision by a firm to absorb rather than reduce complexity can be interpreted as a decision to develop a cultural and institutional capacity in the fief, market, and clan regions of the I-Space. The firm can then either develop that capacity internally—in which case it faces the challenge of managing the resulting complexity within its own corporate boundaries by fostering a corporate culture appropriate to the operational needs of fiefs, markets, or clans taken singly or in combination—or it can develop it through a judicious choice of the kinds of organizations with which it collaborates. It must then manage the complexity taking place at the interorganizational interface through transactional arrangements appropriate to the institutional needs of fiefs, markets, and clans.

Sometimes, the main challenge facing firms pursuing complexity-absorption strategies is to manage the tensions that result when they find themselves in an institutional environment that requires the location of interface-management arrangements in one part of the I-Space while their corporate culture is located in another. Such tensions often surface in strategic alliances, joint ventures, or operations in foreign countries whose cultural and institutional structures differ radically from those found at home (Boisot and Child, 1999).

IMPLICATIONS FOR STRATEGIC PROCESSES

Chandler has traced the evolution of the giant US corporations in the last decades of the nineteenth century (Chandler, 1977) and shown how the adoption of well-articulated functional structures allowed them to manage their growth. He also studied how, following continued growth, such firms were later led to decentralize their operations by creating divisional structures in the first decades of the twentieth century (Chandler, 1962). Both the moves to the functional structure and then to the divisional structure were a response to the pressures of information overload. In the I-Space, the moves corresponded to a trajectory first up the Space toward bureaucracies, where tasks could be structured and assigned to functions, and then horizontally along the Space toward markets, where tasks could be decentralized toward divisions that were made to compete with each other for critical resources such as capital, labor, and managerial talent. The strategy, then, was first to reduce complexity through the creation of articulate structures, and secondly, as it kept on growing, to absorb it through a process of decentralization that reduced the intensity and extent of organizational coupling required between players. Both moves, taken together, however, amounted to a cultural commitment to the upper regions of the I-Space.

The strategy remained serviceable until the 1980s. Firms grew, and also grew richer. But with the globalization of markets and the acceleration of technological competition, the complexity with which firms had to deal kept on increasing. Today, we may be reaching the limit of what the upper regions of the I-Space have to offer in terms of either complexity reduction or absorption. Both the culture of command and control that characterizes bureaucracies, and that of market-driven SBUs held to well-structured short-term performance objectives, entail a long-term loss of entrepreneurship and a consequent inability to handle fuzziness and uncertainty.

Many firms have sensed this intuitively and have started experimenting with clan-like organizational forms such as networks (Nohria and Eccles, 1992). They have therefore started building cultural and institutional capacity once more in the lower regions of the I-Space. In those regions, they encounter regimes that go from the moderately complex (fiefs) to the complex (clans) to the chaotic (no institutionalization possible). A fief culture is typically that of the small firm, the family business, or the start-up. Loyalty to an idea or to an individual predominates. As numbers grow, however, and interactions between agents become more extensive (with the rapid growth of the internet, for example, N and K have both been getting bigger), either the personal power that characterizes this culture needs to be formalized in a move up the I-Space toward bureaucracies, or a decentralization toward clan forms of governance needs to take place. We have characterized clans as an edge-of-chaos phenomenon. If, as we have argued, firms cannot thrive on chaos, might they do so on the edge of chaos?

Mintzberg and Waters have distinguished between deliberate and emergent strategies. They suggested that strategy walks on two legs (Mintzberg and Waters, 1985), one oriented toward analysis and plans, the other toward intuition and responsiveness to the unexpected. If they are right, then strategy has a need for a variety of distinct cultures inside the firm, some to handle the predictable and the routinizable (the deliberate), others to handle the uncertain and the complex (the emergent). In short, if one accepts the Mintzberg and Waters model of the strategy process, the Chandlerian thesis needs extending: if structure follows strategy, then appropriate cultures must also be developed to manage the structure as it grows in diversity and complexity.

Take, for example, managing in clans, on the edge of chaos. This requires an ability to handle much higher levels of uncertainty and anxiety than analytically trained executives are used to. Clans are typically volatile and unstable forms of social organization (Macinnes, 1996). They tend to generate more social entropy than do well-structured bureaucracies. In an unforgiving selection environment, the extra organizational energy that they burn up has to be compensated for by higher levels of creativity and innovation. Yet it is the very need for greater entrepreneurship and innovation, brought about by hypercompetition (D'Aveni, 1995), by globalization, and by accelerating technical change, that is dragging many firms into the lower regions of the I-Space in the first place. Unfortunately, they often bring with them an administrative heritage (Bartlett and Ghoshal, 1989) that is ill suited to the challenge that they face; namely, to foster a culture capable of absorbing complexity as well as reducing it.

Thus, insofar as the business environment is becoming more complex, firms will need to shift from the complexity-reducing strategies that secured their success from the end of the nineteenth until the end of the twentieth century and place more stress on complexity-absorbing ones: a shift away from bureaucracies and toward fiefs, markets, and clans in the I-Space. Much of the popular management literature has picked this up. It stresses internal competition (markets), the need for the large firm to behave like a small one (fiefs), and the importance of interpersonal networking (clans). Yet without an appropriate theoretical perspective on what is happening to firms, the insights emanating from this literature will remain underpowered. As we have indicated in this article, the burgeoning sciences of complexity can help put this right.

REFERENCES

Aleksander, I. and Morton, H. (1993) Neurons and Symbols: the Stuff that Mind Is Made of, London: Chapman and Hall.

Bartlett, C. and Ghoshal, S. (1989) Managing Across Borders: The Transnational Solution, Cambridge, MA: Harvard Business School Press.

Boisot, M. (1986) “Markets and hierarchies in cultural perspective,” Organization Studies, 7 (2): 135-58.

Boisot, M. (1995) Information Space: A Framework for Learning in Organizations Institutions and Cultures, London: Routledge.

Boisot, M. (1998) Knowledge Assets: Securing Competitive Advantage in the Information Economy, Oxford: Oxford University Press.

Boisot, M. and Child, J. (1988) “The iron law of fiefs: bureaucratic failure and the problem of governance in the Chinese economic reforms,” Administrative Science Quarterly, 33: 507-27.

Boisot, M. and Child, J. (1996) “From fiefs to clans: explaining China's emergent economic order,” Administrative Science Quarterly, 41: 600-28.

Boisot, M. and Child, J. (1999) “Organizations as adaptive systems in complex environments: the case of China,” Organization Science, 10 (3, May-June): 237-52.

Chaitin, G. J. (1974) “Information-theoretic computational complexity,” IEEE Transactions, Information Theory, 20 (10).

Chandler, A. D. (1962) Strategy and Structure: Chapters in the History of the American Industrial Enterprise, Cambridge, MA: Free Press.

Chandler, A. D. (1977) The Visible Hand: the Managerial Revolution in American Business, Cambridge, MA: The Belknap Press of Harvard University Press.

Coase, R. (1937) “The nature of the firm,” Economica, NS 4: 386-405.

D'Aveni, R. A. with Gunther, R. (1995) Hypercompetitive Rivalries, New York, NY: Free Press.

Eden, C. and Ackermann, F. (1998) Making Strategy: The Journey of Strategy Management, London: Sage.

Eggertsson, T. (1990) Economic Behaviour and Institutions, Cambridge: Cambridge University Press.

Garratt, R. (1987) The Learning Organization, London: Fontana.

Gell-Mann, M. (1994) The Quark and the Jaguar, London: Abacus.

Giddens, A. (1984) The Constitution of Society: Outline of the Theory of Structuration, Cambridge: Polity Press.

Gilder, G. (1989) The Quantum Revolution in Economics and Technology, New York, NY: Simon and Schuster.

Kauffman, S. (1993) The Origins of Order, Oxford: Oxford University Press.

Kauffman, S. (1995) At Home in the Universe, London: Viking.

Kelly, K. (1998) New Rules for the New Economy, London: Viking.

Kolmogorov, A. N. (1965) “Three approaches to the quantitative definition of information,” Problems in Information Transmissions, 1: 3-11.

Kroeber, A. and Kluckhohn, C. (1952) Culture: a Critical Review of Concepts and Definitions, Papers of the Peabody Museum of American Archeology and Ethnology, Vol. 47, Cambridge, MA: Harvard University Press.

Langton, C. (1992) Artificial Life, Reading, MA: Addison-Wesley.

Lawrence, P. and Lorsch, J. (1967) Organization and Environment: Managing Differentiation and Integration, Homewood, IL: Richard Irwin.

Macinnes, A. (1996) Clanship and Commerce and the House of Stuart, 1603-1788, East Linton: Tuckwell Press.

Mandelbrot, B. (1982) The Fractal Geometry of Nature, San Francisco, CA: Freeman.

March, J. and Simon, H. A. (1958) Organizations, New York: John Wiley.

Mintzberg, H. and Waters, J. (1985) “Of strategies, deliberate and emergent,” Strategic Management Journal, July-September.

Nelson, R. and Winter, S. (1982) An Evolutionary Theory of Economic Change, Cambridge, MA: The Belknap Press of Harvard University Press.

Nohria, N. and Eccles, R. (1992) Networks and Organizations: Structure, Form, and Action, Boston, MA: Harvard Business School Press.

North, D. (1990) Institutions, Institutional Change and Economic Performance, Cambridge: Cambridge University Press.

Peters, T. (1992) Liberation Management, New York: Alfred A. Knopf.

Roberts, J. (1987) “Perfectly and imperfectly competitive markets,” in J. Eatwell et al. (eds) Allocation, Information and Markets, London: Macmillan, 231-40.

Ross Ashby, W. (1954) An Introduction to Cybernetics, London: Methuen.

Schumpeter, J. A. (1961) The Theory of Economic Development: an Inquiry into Profits, Capital, Credit, Interest and the Business Cycle, London: Oxford University Press.

Shannon, C. E. and Weaver, W. (1949) The Mathematical Theory of Communication, Urbana, IL: University of Illinois Press.

Simon, H. A. (1957) Administrative Behaviour: A Study of Decision-Making Processes in Administrative Organization, New York: Free Press.

Stacey, R. (1993) “Strategy as order emerging out of chaos,” Long Range Planning, 26 (1): 10-17.

Suzuki, D. (1956) Zen Buddhism, New York: Doubleday Anchor Books.

Weick, K. (1979) The Social Psychology of Organizing, Reading, MA: Addison-Wesley.

Weick, K. (1995) Sensemaking in Organizations, Thousand Oaks, CA: Sage.

Williamson, O. E. (1975) Markets and Hierarchies: Analysis and Antitrust Implications, New York: Free Press.

Wolfram, S. (1994) Cellular Automata and Complexity, Reading, MA: Addison-Wesley.

Woodward, J. (1965) Industrial Organization: Theory and Practice, Oxford: Oxford University Press.